, the idea has circulated in the AI field that prompt engineering is dead, or at least obsolete. On one hand, pure language models have become more flexible and robust and tolerate ambiguity better; on the other, reasoning models can work around flawed prompts and thus better understand the user. Whatever the exact reason, the era of “magic phrases” that worked like incantations, and of hyper-specific wording hacks, seems to be fading. In that narrow sense, prompt engineering as a bag of tricks really is kind of dying. (Those tricks have been analyzed scientifically in papers like this one by DeepMind, which unveiled supreme prompt seeds for language models back when GPT-4 was made available.)

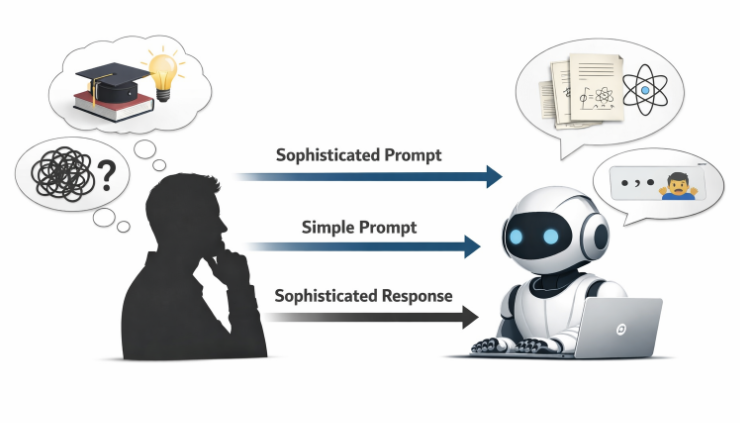

But Anthropic has now put numbers behind something subtler and more important. They found that while the exact wording of a prompt matters less than it used to, the “sophistication” behind the prompt matters enormously. In fact, it correlates almost perfectly with the sophistication of the model’s response.

This is not a metaphor or a motivational slogan, but rather an empirical result obtained from data collected by Anthropic from its user base. Read on to learn more, because this is all super exciting, beyond the mere implications for how we use LLM-based AI systems.

Anthropic Economic Index: January 2026 Report

In the Anthropic Economic Index: January 2026 Report, lead authors Ruth Appel, Maxim Massenkoff, and Peter McCrory analyze how people actually use Claude across regions and contexts. To start with what’s probably the most striking finding, they observed a strong quantitative relationship between the level of education required to understand a user’s prompt and the level of education required to understand Claude’s response. Across countries, the correlation coefficient is r = 0.925 (p < 0.001, N = 117). Across U.S. states, it is r = 0.928 (p < 0.001, N = 50).

Taken at face value, this means that the more learned you are, and the clearer the prompts you can write, the better the answers you get. In plain terms, how humans prompt is how Claude responds.

And you know what? I have kind of seen this qualitatively myself when comparing how I and other PhD-level colleagues interact with AI systems vs. how under-instructed users do.

From “prompt hacks” to “cognitive scaffolding”

Early conversations about prompt engineering focused on surface-level techniques: adding “let’s think step by step”, specifying a role (“act as a senior data scientist”), or carefully ordering instructions (more examples of this in the DeepMind paper I linked in the introduction). These techniques were useful when models were fragile and easily derailed. That same fragility, by the way, was exploited to override their safety rules, something much harder to achieve now.

But as models improved, many of these tricks became optional. The same model could often arrive at a reasonable answer even without them.

Anthropic’s findings clarify why this eventually led to the perception that prompt engineering was obsolete. It turns out that the “mechanical” aspects of prompting (syntax, magic words, formatting rituals) do indeed matter less. What has not disappeared is the importance of what they call “cognitive scaffolding”: how well users understand the problem, how precisely they frame it, and whether they know what a good answer even looks like. In other words, the critical thinking needed to tell good responses from useless hallucinations.

The study operationalizes this idea using education as a quantitative proxy for sophistication. The researchers estimate the number of years of education required to understand both prompts and responses, and find that the two track each other almost perfectly (r ≈ 0.93). This suggests that Claude is not independently “upgrading” or “downgrading” the intellectual level of the interaction. Instead, it mirrors the user’s input remarkably closely. That’s definitely good when you know what you are asking, but it makes the AI system underperform when you don’t know much about the topic yourself, or when you type a request or question too quickly and without paying attention.

If a user provides a shallow, underspecified prompt, Claude tends to respond at a similarly shallow level. If the prompt encodes deep domain knowledge, well-thought-out constraints, and implicit standards of rigor, Claude responds in kind. And hell yes, I’ve certainly seen this with ChatGPT and Gemini models, which are the ones I use most.

Why this is not trivial

At first glance, this may sound obvious. Of course better questions get better answers. But the magnitude of the correlation is what makes the result scientifically interesting. Correlations above 0.9 are rare in social and behavioral data, especially across heterogeneous units like countries or U.S. states. Thus, what the work found is not a weak tendency but a strong, structural relationship.
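To get a feel for that magnitude, here is a minimal sketch that takes only the two coefficients and sample sizes quoted above and derives a few standard summaries from them. The function name `correlation_summary` is mine, not the report's, and the confidence interval relies on the Fisher z-transform, which assumes roughly bivariate-normal data. That is an assumption on my side, not something the report states.

```python
import math

def correlation_summary(r: float, n: int) -> dict:
    """Summarize a Pearson correlation r estimated from n units (hypothetical helper)."""
    r_squared = r * r  # share of variance captured by a linear fit
    # t statistic for the null hypothesis r = 0, with n - 2 degrees of freedom
    t_stat = r * math.sqrt((n - 2) / (1.0 - r_squared))
    # Approximate 95% interval via the Fisher z-transform (assumes near-normal data)
    z = math.atanh(r)
    half_width = 1.96 / math.sqrt(n - 3)
    ci95 = (math.tanh(z - half_width), math.tanh(z + half_width))
    return {"r_squared": r_squared, "t_stat": t_stat, "ci95": ci95}

# Values as quoted from the report: countries (N = 117) and U.S. states (N = 50)
countries = correlation_summary(0.925, 117)
us_states = correlation_summary(0.928, 50)

for label, s in (("countries", countries), ("U.S. states", us_states)):
    low, high = s["ci95"]
    print(f"{label}: r^2 = {s['r_squared']:.2f}, t = {s['t_stat']:.1f}, "
          f"95% CI for r = [{low:.2f}, {high:.2f}]")
```

In both cases r² comes out at about 0.86, meaning a straight line through the data would account for roughly 86% of the variance in response sophistication, and the t statistics are far above anything needed for p < 0.001. Keep in mind these are aggregate units (countries and states), so the numbers describe populations, not individual chats.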

Critically, the finding runs against the common notion that AI could work as an equalizer, by allowing everybody to retrieve information of a similar level regardless of their language, level of education, and acquaintance with a topic. There is a widespread hope that advanced models will “lift” low-skill users by automatically providing expert-level output regardless of input quality. The results obtained by Anthropic suggest that this isn’t the case at all, and point to a far more conditional reality. While Claude (and this very probably applies to all conversational AI models out there) can potentially produce highly sophisticated responses, it tends to do so only when the user provides a prompt that warrants it.

Model behavior is not fixed; it is designed

The report suggests that this “mirroring” effect is not an inherent property of all language models, and that how a model responds depends heavily on how it is trained, fine-tuned, and instructed. To me this part of the report lacks supporting data, and from my personal experience I would tend to disagree. Still, I do see that one could imagine a system prompt that forces the model to always use simplified language, regardless of user input, or conversely one that always makes it respond in highly technical prose. But this would need to be designed.
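To make the idea of a designed register concrete, here is a minimal sketch of how such a system prompt could be attached to a conversation. Everything in it is hypothetical: the instruction texts, the `REGISTER_INSTRUCTIONS` table, and the `build_messages` helper are my own illustrations. The role/content message list is a common chat convention, but the exact format differs between providers, so treat this as a shape, not as any vendor's API.

```python
# Hypothetical register instructions; the wording is illustrative only.
REGISTER_INSTRUCTIONS = {
    "simple": (
        "Always answer in plain language that a general reader can follow, "
        "regardless of how the question is phrased."
    ),
    "technical": (
        "Always answer in precise, technical prose aimed at domain experts, "
        "regardless of how the question is phrased."
    ),
}

def build_messages(register: str, user_prompt: str) -> list[dict]:
    """Return a chat-style message list whose system prompt fixes the register."""
    if register not in REGISTER_INSTRUCTIONS:
        raise ValueError(f"unknown register: {register!r}")
    return [
        {"role": "system", "content": REGISTER_INSTRUCTIONS[register]},
        {"role": "user", "content": user_prompt},
    ]

# The same user question, wrapped with a system prompt that forces simple language
messages = build_messages("simple", "Explain heteroscedasticity-robust standard errors.")
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The point of the sketch is only that the register becomes a deliberate choice by whoever deploys the model, instead of something inferred from the user's own wording.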

Claude appears to occupy a more dynamic middle ground. Rather than enforcing a fixed register, it adapts its level of sophistication to the user’s prompt. This design choice amplifies the importance of user skill. The model is capable of expert-level reasoning, but it treats the prompt as a signal for how much of that capacity to deploy.

It would really be great to see the other big players like OpenAI and Google running the same kinds of tests and analyses on their usage data.

AI as a multiplier, quantified

The cliché that “AI is an equalizer” is often repeated without evidence. As I said above, Anthropic’s analysis now provides evidence, but it points against the claim.

If output sophistication scales with input sophistication, then the model is not replacing human expertise, nor equalizing it; rather, it is multiplying it. And this is positive for users applying the AI system to their domains of expertise.

A weak base multiplied by a powerful tool remains weak. In the best case, you can use consultations with an AI system to get started in a field, provided you know enough to at least tell hallucinations from facts. A strong base, by contrast, benefits enormously, because you start with a lot and get even more. For example, I very often brainstorm with ChatGPT, or better, with Gemini 3 in AI Studio, about equations that describe physical phenomena, and end up getting from the system pieces of code or even full apps to, say, fit data to very complex mathematical models. Yes, I could have done that myself, but with carefully drafted prompts the AI system got the job done in literally orders of magnitude less time than I would have needed.

All this framing might help to reconcile two seemingly contradictory narratives about AI. On the one hand, models are undeniably impressive and can outperform humans on many narrow tasks. On the other hand, they often disappoint when used naïvely. The difference is not primarily the prompt’s wording, but the user’s understanding of the domain, the problem structure, and the criteria for success.

Implications for education and work

One implication is that investments in human capital still matter, and a lot. As models become better mirrors of user sophistication, disparities in expertise may become more visible rather than less, contrary to what the “equalization” narrative proposes. Those who can formulate precise, well-grounded prompts will extract far more value from the same underlying model than those who cannot.

This also reframes what “prompt engineering” should mean going forward. It is less about learning a new technical skill and more about cultivating traditional ones: domain knowledge, critical thinking, problem decomposition. Knowing what to ask and how to recognize a good answer turns out to be the real interface. This is all probably obvious to us readers of Towards Data Science, but we are here to learn and what Anthropic found in a quantitative way makes it all much more compelling.

Notably, to close, Anthropic’s data makes its points with unusual clarity. And again, we should call on all big players like OpenAI, Google, Meta, etc. to run similar analyses on their usage data, and to present the results to the public just as Anthropic did.

And just like we’ve been fighting for a long time for free widespread accessibility to conversational AI systems, clear guidelines to suppress misinformation and intentional improper use, ways to ideally eliminate or at least flag hallucinations, and more, we can now add pleas to achieve true equalization.

References and related reads

To know all about Anthropic’s report (which touches on many other interesting points too, and provides all details about the analyzed data): https://www.anthropic.com/research/anthropic-economic-index-january-2026-report

And you may also find interesting Microsoft’s “New Future of Work Report 2025”, against which Anthropic’s study makes some comparisons, available here: https://www.microsoft.com/en-us/research/project/the-new-future-of-work/

My previous post “Two New Papers By DeepMind Exemplify How Artificial Intelligence Can Help Human Intelligence”: https://pub.towardsai.net/two-new-papers-by-deepmind-exemplify-how-artificial-intelligence-can-help-human-intelligence-ae5143f07d49

My previous post “New DeepMind Work Unveils Supreme Prompt Seeds for Language Models”: https://medium.com/data-science/new-deepmind-work-unveils-supreme-prompt-seeds-for-language-models-e95fb7f4903c