of those decisions that seems straightforward until you have to measure it. A customer’s introductory rate expires, the invoice goes up, and you want to know whether the price change hurt retention. Simple enough in theory.

The problem is that something else is almost always happening at the same time. The initiative that drove the original purchase, whether it was a system migration, compliance push, sales transformation, or product launch, has wrapped up. The team that championed the tool has moved on to the next thing. And the product that once felt essential is quietly becoming a line item someone is going to question.

So when the customer churns, the account team says it is the price. The retention strategy team says the use case ran its course. Product says the platform never got past the original buyer. Everyone has a theory and a spreadsheet to back it up.

Which attribution you land on matters, not abstractly, but in terms of what you do next.

| If the primary cause is… | The business response is… |
| --- | --- |
| Promo expiry (price shock) | Extend discounting, redesign renewal packaging, adjust price ladder |
| Initiative completion (value exhaustion) | Invest in expansion use cases, trigger lifecycle retention plays, improve onboarding to recurring workflows |
| Both forces interact | Time renewal offers around new business moments; discount alone will not solve a value problem |

Each method below builds a different counterfactual for the same event. Picking the right one is not the hard part. Knowing which question you are trying to answer before you open a notebook is where most of these analyses go sideways.

Define the question before the method

Before you touch the data, you need to decide what you are actually trying to estimate. The same churn event at renewal can produce three meaningfully different numbers depending on what you are asking:

- The promo-cohort effect. What was the average churn impact on customers whose introductory discount expired? The finance team usually wants this number because it lines up with how renewal revenue gets reported.

- The initiative-completion effect. What was the churn impact on customers whose original adoption use case had concluded by renewal? The retention strategy team wants this one because it speaks to whether the product achieved sticky value or just served a project.

- The joint effect and its interaction. What happened to customers who faced both at the same time, price increase and value exhaustion arriving together? This number is almost always larger than either force alone would predict, and it is usually the one that actually explains the churn spike.

These are not the same number and they do not answer the same question. Treating them as interchangeable is the most common mistake I see in renewal churn analyses, and it is usually what keeps the account team versus retention strategy debate going in circles.

The Setup

The synthetic dataset has 10,000 B2B customers observed around their renewal dates. Each has two flags: promo_expired (did their introductory rate end at renewal?) and initiative_complete (had the original use case concluded before renewal?). One thing worth flagging upfront: initiative_complete needs to be defined using pre-renewal signals, things like customer relationship management (CRM) milestones, implementation completion, or customer success health scores. If you infer it from declining usage after the fact, you will end up calling early churn behavior a cause of churn rather than a symptom of it. The true effects baked into the simulation:

- Baseline 6-month churn (neither force): 8%

- Promo expiry alone: +5 pp (13% churn)

- Initiative completion alone: +4 pp (12% churn)

- Both forces together: +14 pp (22% churn), a +5 pp interaction surplus above the additive expectation of 17%

import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

RNG = np.random.default_rng(158)  # seeded RNG for reproducibility
N = 10_000                        # number of customers

# True effects baked into the data: what each method should recover.
TRUE_BASELINE = 0.08     # 8% baseline 6-month churn (neither force)
TRUE_PROMO = 0.05        # +5 pp from promo expiry alone
TRUE_INITIATIVE = 0.04   # +4 pp from initiative completion alone
TRUE_INTERACTION = 0.05  # +5 pp additional lift when BOTH forces hit

customers = pd.DataFrame({
    'customer_id': np.arange(N),
    'promo_expired': RNG.choice([0, 1], N, p=[0.45, 0.55]),
    'initiative_complete': RNG.choice([0, 1], N, p=[0.50, 0.50]),
    'arr_usd': RNG.lognormal(10.5, 0.8, N),   # annual recurring revenue (USD)
    'tenure_months': RNG.uniform(10, 14, N),
    'n_seats': RNG.integers(5, 200, N),       # seats sold
})

# Each customer's churn probability = baseline + promo + init + interaction.
# The interaction term only fires when BOTH forces are active.
churn_prob = (
    TRUE_BASELINE
    + TRUE_PROMO * customers['promo_expired']
    + TRUE_INITIATIVE * customers['initiative_complete']
    + TRUE_INTERACTION * customers['promo_expired']
                       * customers['initiative_complete']
)

customers['churned'] = (RNG.uniform(size=N) < churn_prob).astype(int)

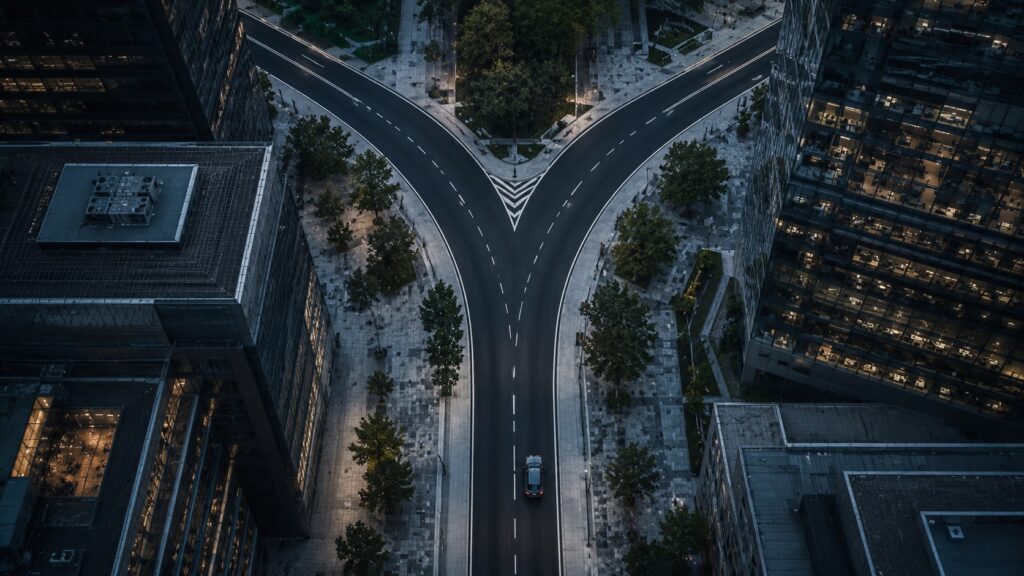

Churn rate by condition at renewal. The observed joint effect (22%) clears the additive expectation (17%) by 5 percentage points. The gap is the central fact of this analysis.

Image by Author
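The cell rates behind this chart, and the three estimands from the earlier section, can be read straight off the simulated flags. Here is a minimal sketch, assuming the same data-generating process as the setup code. `simulate` and `estimands` are illustrative names rather than functions from the notebook, and the seed is arbitrary, so the output will wobble around the true values instead of matching the figure exactly.

```python
import numpy as np

def simulate(n=10_000, seed=0):
    """Re-create the article's data-generating process (flags and churn only)."""
    rng = np.random.default_rng(seed)  # assumption: arbitrary seed, not the article's
    promo = rng.choice([0, 1], n, p=[0.45, 0.55])
    init = rng.choice([0, 1], n, p=[0.50, 0.50])
    prob = 0.08 + 0.05 * promo + 0.04 * init + 0.05 * promo * init
    churned = (rng.uniform(size=n) < prob).astype(int)
    return promo, init, churned

def estimands(promo, init, churned):
    # Observed churn rate in each of the four (promo, init) cells.
    rate = {(a, b): churned[(promo == a) & (init == b)].mean()
            for a in (0, 1) for b in (0, 1)}
    # Mix of the other flag inside each treated cohort.
    p_init_given_promo = init[promo == 1].mean()
    p_promo_given_init = promo[init == 1].mean()
    return {
        # Promo effect averaged over the promo-expired cohort's own initiative mix.
        'promo_cohort': (1 - p_init_given_promo) * (rate[1, 0] - rate[0, 0])
                        + p_init_given_promo * (rate[1, 1] - rate[0, 1]),
        # Initiative effect averaged over the completed cohort's own promo mix.
        'initiative_cohort': (1 - p_promo_given_init) * (rate[0, 1] - rate[0, 0])
                             + p_promo_given_init * (rate[1, 1] - rate[1, 0]),
        # Both forces on, relative to neither.
        'joint': rate[1, 1] - rate[0, 0],
        # Surplus over the additive expectation (difference of differences).
        'interaction': rate[1, 1] - rate[1, 0] - rate[0, 1] + rate[0, 0],
    }

print(estimands(*simulate()))
```

With the true effects plugged in, the four targets are 7.5 pp, 6.75 pp, 14 pp, and 5 pp respectively, which is the point of the earlier section: one renewal event, several different numbers. Comparing raw cell means is only a causal read here because the simulation assigns both flags at random; real renewal data would not allow it without the checks discussed in the methods below.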

Method 1: Difference-in-Differences

Business question: What was the average churn impact of promo expiry on customers who actually faced a price increase at renewal, and does that impact differ depending on whether the use case had already concluded?

Method-specific estimand: Average treatment effect of promo expiry on the promo-expired cohort, with a triple interaction term to detect whether the price shock is amplified when initiative completion co-occurs.

Identifying assumption: Parallel trends. Absent promo expiry, the churn trajectory of expired and non-expired customers would have tracked each other within comparable initiative-completion groups around the renewal date.

To run this, you would aggregate customers into cohort-week cells around the renewal date, with each row representing a cohort’s weekly churn rate and the number of customers still at risk that week. The triple interaction lets the model detect whether the promo shock is amplified when the use case has also concluded:

# A 'cohort' is the (promo_expired, initiative_complete) cell: 4 cohorts.
# Each row in the panel is one cohort in one week, with that cohort's
# weekly churn rate and the number of customers still at risk that week.
# week = 0 is the renewal date; negative weeks are pre-renewal.
# build_cohort_week_panel is defined in the full notebook, not in this snippet.
panel = build_cohort_week_panel(customers)  # long format: cohort x week
panel['post'] = (panel['week'] >= 0).astype(int)  # 1 if post-renewal
panel['A'] = panel['promo_expired']  # short alias for readability
panel['B'] = panel['initiative_complete']

# 'post * A * B' expands to: post, A, B, post:A, post:B, A:B, post:A:B.
# Weighting by at_risk gives bigger cohort-weeks more influence.
did_model = smf.wls(
    'churn_rate ~ post * A * B',
    data=panel,
    weights=panel['at_risk'],
).fit(cov_type='HC3')  # heteroskedasticity-robust standard errors

# Coefficients to read:
#   post:A   = promo shock when the initiative is still ongoing
#   post:B   = initiative shock when the promo has not expired
#   post:A:B = additional churn when both forces hit in the same week
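The `build_cohort_week_panel` helper is not defined in this section. As a rough idea of what it might look like, here is a sketch that assumes a hypothetical `churn_week` column (week of churn relative to renewal, `NaN` for customers who never churned in the window). That column, the default week range, and the body of the function are all assumptions for illustration; the notebook's version may differ.

```python
import numpy as np
import pandas as pd

def build_cohort_week_panel(customers, weeks=range(-8, 26)):
    """Hypothetical sketch: one row per (cohort, week) with churn rate and at-risk count.

    Assumes `customers` has a `churn_week` column (NaN = retained), which is
    not part of the article's synthetic dataset.
    """
    rows = []
    cohort_cols = ['promo_expired', 'initiative_complete']
    for (a, b), grp in customers.groupby(cohort_cols):
        churn_week = grp['churn_week']
        for w in weeks:
            # At risk in week w: never churned, or churned in week w or later.
            at_risk = int((churn_week.isna() | (churn_week >= w)).sum())
            churned = int((churn_week == w).sum())
            rows.append({
                'promo_expired': a,
                'initiative_complete': b,
                'week': w,
                'at_risk': at_risk,
                'churn_rate': churned / at_risk if at_risk else np.nan,
            })
    return pd.DataFrame(rows)

# Toy input, made up purely to show the output shape.
toy = pd.DataFrame({
    'promo_expired':       [0, 0, 1, 1],
    'initiative_complete': [0, 0, 0, 0],
    'churn_week':          [np.nan, 2, -1, np.nan],
})
print(build_cohort_week_panel(toy, weeks=range(-2, 4)))
```

Defining `churn_rate` as a weekly hazard (churned this week divided by still at risk) is a design choice; it keeps early churners from shrinking the denominator retroactively.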

Event study: parallel trends diagnostic. Pre-renewal coefficients should sit near zero; the gap should open only after the renewal date. A slope in the pre-period usually means anticipation, customers reacting to the renewal quote before the official price change date.

Image by Author

A note on initiative_complete. It is not randomly assigned and it correlates with things that independently predict churn: customer size, how long the original buyer has been at the company, and product fit. Controlling for covariates helps, but what you cannot do is let the model define it. Measure it before the renewal decision using CRM or customer success milestones, not from usage patterns you observe after the customer has already started disengaging.
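To make the timing rule concrete, here is a small sketch of a flag that only looks at information available before renewal. The field names (`milestone_closed_on`, `renewal_date`) and the 30-day lead window are hypothetical; substitute whatever your CRM actually records.

```python
from datetime import date, timedelta
from typing import Optional

def initiative_complete_flag(milestone_closed_on: Optional[date],
                             renewal_date: date,
                             lead_days: int = 30) -> int:
    """1 if the CRM milestone closed at least `lead_days` before renewal, else 0.

    Assumption: a closed implementation milestone is the completion signal.
    Nothing observed after the cutoff (usage decline, logins) is consulted.
    """
    if milestone_closed_on is None:  # milestone never closed
        return 0
    cutoff = renewal_date - timedelta(days=lead_days)
    return int(milestone_closed_on <= cutoff)

renewal = date(2024, 6, 30)
print(initiative_complete_flag(date(2024, 3, 15), renewal))  # closed well before renewal
print(initiative_complete_flag(date(2024, 6, 20), renewal))  # closed inside the lead window
print(initiative_complete_flag(None, renewal))               # still open
```

The lead window exists so that a milestone closed in the same weeks the customer is already reading the renewal quote does not count as a pre-renewal signal.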

Failure mode: anticipation. Renewal quotes go out early. If customers start shopping alternatives the moment they see the new rate, the pre-period is already contaminated. Check the event-study plot before you trust the coefficient.
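A quick numeric companion to the plot is the slope of the treated-minus-control gap over the pre-renewal weeks. The sketch below is a simple pre-trend check, not a full event-study regression, and the weekly gap values are made up to show what a clean and a contaminated pre-period look like.

```python
import numpy as np

def pre_trend_slope(weeks, gap):
    """Least-squares slope of the treated-minus-control churn gap over weeks < 0."""
    weeks = np.asarray(weeks, dtype=float)
    gap = np.asarray(gap, dtype=float)
    pre = weeks < 0
    slope, _intercept = np.polyfit(weeks[pre], gap[pre], deg=1)
    return slope

weeks = np.arange(-8, 9)

# Made-up weekly gaps in churn rate, for illustration only.
clean = np.where(weeks >= 0, 0.004, 0.0)  # gap opens exactly at renewal
anticipating = np.where(
    weeks >= 0, 0.004,
    np.clip(weeks + 4, 0, None) * 0.001,  # gap starts creeping up before renewal
)

print(pre_trend_slope(weeks, clean))         # essentially zero
print(pre_trend_slope(weeks, anticipating))  # positive: customers reacted early
```

On real data the slope comes with sampling noise, so it is worth reading alongside its standard error rather than against a hard threshold.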

Reading the result. The triple interaction term, post:A:B, is what the setup is building toward. A positive coefficient there means the price shock bites harder when the use case has already faded. If you see that, a discounted renewal invoice will not fix it.
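It can help to see that `post:A:B` is nothing more exotic than a difference of two difference-in-differences. The sketch below uses made-up cell averages (one pre and one post value per cohort) and checks that the hand-computed triple difference matches the last coefficient of the saturated regression, solved here with plain least squares rather than statsmodels.

```python
import numpy as np
from itertools import product

# Made-up average weekly churn rates by (post, A, B) cell, for illustration only.
m = {
    (0, 0, 0): 0.0030, (0, 1, 0): 0.0030, (0, 0, 1): 0.0030, (0, 1, 1): 0.0030,
    (1, 0, 0): 0.0032, (1, 1, 0): 0.0054, (1, 0, 1): 0.0049, (1, 1, 1): 0.0096,
}

def did(b):
    """Promo DiD within one initiative group: (post - pre | A=1) - (post - pre | A=0)."""
    return (m[1, 1, b] - m[0, 1, b]) - (m[1, 0, b] - m[0, 0, b])

ddd = did(1) - did(0)  # triple difference

# Same quantity from the saturated model churn_rate ~ post * A * B.
cells = list(product((0, 1), repeat=3))
X = np.array([[1, p, a, b, p * a, p * b, a * b, p * a * b] for p, a, b in cells],
             dtype=float)
y = np.array([m[c] for c in cells])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)

print(round(ddd, 4), round(float(coef[-1]), 4))
```

With these inputs both routes give 0.0025, or 0.25 pp of extra weekly churn. In a real panel with many weeks per cell, the weighted regression does the same thing using at-risk-weighted cell averages.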

Method 2: Regression with interaction terms

Business question: What are the separate effects of price increase and project completion, and do they interact?

Method-specific estimand: Main effects and interaction coefficient from a regression that explicitly models both forces and their joint term.

Identifying assumption: No unmeasured confounders, adequate overlap across all four conditions, correctly specified functional form.

# Customer-level regression. Outcome: 1 if customer churned within 6 months.
# np.log1p(x) = log(1 + x); used to control for skewed dollar/count covariates
# (annual revenue, seat counts) so a few large customers do not dominate.
# The * operator below expands to: main effects of A and B AND their interaction.
interaction_model = smf.ols(
    'churned ~ promo_expired * initiative_complete'
    ' + np.log1p(arr_usd) + np.log1p(n_seats)',
    data=customers,
).fit(cov_type='HC3')  # HC3 = heteroskedasticity-robust standard errors

# Coefficients (illustrative, matching simulation truth):
#   promo_expired:                      +0.049  (b1, main effect of A)
#   initiative_complete:                +0.041  (b2, main effect of B)
#   promo_expired:initiative_complete:  +0.051  (b3, interaction A x B)

One thing that trips people up: b1 is not ‘the effect of promo expiry.’ It is the effect of promo expiry when initiative_complete equals zero. Once the initiative has also concluded, the marginal effect of promo expiry is b1 + b3, where b3 is the interaction coefficient. The full picture:

Effect of promo expiry, initiative ongoing: b1 = +0.049

Effect of promo expiry, initiative complete: b1 + b3 = +0.100

Effect of initiative completion, promo ongoing: b2 = +0.041

Effect of initiative completion, promo expired: b2 + b3 = +0.092
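The same arithmetic as a few lines of code, using the illustrative coefficients from the regression output above. The function names are mine; the only thing being demonstrated is that each marginal effect depends on the state of the other flag.

```python
# Illustrative coefficients from the regression output above.
b1, b2, b3 = 0.049, 0.041, 0.051

def promo_effect(initiative_complete: int) -> float:
    """Marginal effect of promo expiry, conditional on initiative status (0 or 1)."""
    return b1 + b3 * initiative_complete

def initiative_effect(promo_expired: int) -> float:
    """Marginal effect of initiative completion, conditional on promo status (0 or 1)."""
    return b2 + b3 * promo_expired

additive = b1 + b2    # expectation if the two forces did not interact
joint = b1 + b2 + b3  # both forces on, relative to neither

for label, value in [
    ('promo, initiative ongoing', promo_effect(0)),
    ('promo, initiative complete', promo_effect(1)),
    ('initiative, promo ongoing', initiative_effect(0)),
    ('initiative, promo expired', initiative_effect(1)),
    ('additive expectation', additive),
    ('joint effect', joint),
]:
    print(f'{label:28s} {value:+.3f}')
```

The gap between the last two rows is b3 itself, which is why the interaction coefficient and the "surplus" in the chart are the same quantity.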

Interaction effect: incremental churn above baseline by condition. The additive expectation (+9 pp) is what you would predict if the two forces did not interact: +5 pp from promo expiry alone plus +4 pp from initiative completion alone. The actual joint effect (+14 pp) overshoots that prediction by 5 percentage points: the interaction surplus.

Image by Author

Failure mode: collinearity. If you sold a lot of customers into the same wave of transformation work, promo expiry and initiative completion will be correlated by construction. When that happens, b1, b2, and b3 get hard to separate and the standard errors will flag it. At that point, report the joint prediction for each cohort rather than trying to interpret the coefficients individually.
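Before reading any coefficient, it is cheap to check how much the two flags overlap. The sketch below reports the four cell counts and the correlation between the flags. The "coupled" scenario, where initiative status matches promo status 90% of the time, is a made-up stand-in for customers sold in the same wave, and the seed is arbitrary.

```python
import numpy as np

def overlap_report(promo, init):
    """Cell counts and the correlation between the two binary flags."""
    promo, init = np.asarray(promo), np.asarray(init)
    counts = {(a, b): int(((promo == a) & (init == b)).sum())
              for a in (0, 1) for b in (0, 1)}
    corr = float(np.corrcoef(promo, init)[0, 1])  # phi coefficient for 0/1 flags
    return counts, corr

rng = np.random.default_rng(0)  # assumption: arbitrary seed
n = 10_000
promo = rng.choice([0, 1], n, p=[0.45, 0.55])
independent = rng.choice([0, 1], n)
# Hypothetical "same sales wave" case: initiative status copies promo status 90% of the time.
coupled = np.where(rng.uniform(size=n) < 0.9, promo, 1 - promo)

for label, init in [('independent', independent), ('coupled', coupled)]:
    counts, corr = overlap_report(promo, init)
    print(label, counts, round(corr, 2))
```

In the coupled case the off-diagonal cells (one force without the other) become thin, and those are exactly the cells that identify b1 and b2 separately.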

Reading the result. That interaction coefficient is as large as either main effect on its own. A customer facing both forces is not just at higher risk; they are in a fundamentally different situation. That is what should drive the commercial response.

Method 3: Shapley value attribution

Business question: Given that both forces together caused 14 pp of incremental churn, how much of that should each force be accountable for, for the purposes of budget allocation and renewal strategy?

Method-specific estimand: Fair allocation of the joint churn impact across the two causal forces, using Shapley values from cooperative game theory.

Identifying assumption: The coalition value estimates v(S), where S is a subset of the drivers and v(S) is the incremental churn caused by that subset, are credible. They come from the regression or experiment above, not from a confounded model.

With just two drivers, Shapley is actually pretty intuitive. Each driver keeps its standalone contribution, and then the two split the interaction surplus evenly. Promo expiry gets its 5 pp plus half the 5 pp interaction. Initiative completion gets its 4 pp plus the other half. The code makes this concrete:

from itertools import permutations
import math

# A 'coalition' is any subset of drivers active together.
# v(S) = the incremental churn (in pp) caused by coalition S.
# These coalition values come from the interaction regression above:
v = {
    frozenset(): 0,                    # neither driver active
    frozenset(['promo']): 5,           # promo expiry alone
    frozenset(['init']): 4,            # initiative completion alone
    frozenset(['promo', 'init']): 14,  # both, includes +5 pp interaction
}

# 'players' = the drivers we are allocating credit across.
# For each ordering of players, each player's 'marginal contribution' is
# how much the coalition value grows when that player joins.
# Shapley value = average marginal contribution across all orderings.
def shapley_values(v, players):
    n = len(players)
    phi = {p: 0.0 for p in players}  # accumulator for each player
    for perm in permutations(players):  # try every ordering
        coalition = frozenset()  # start with no drivers active
        for player in perm:
            # how much does the coalition grow when this player joins?
            marginal = v[coalition | {player}] - v[coalition]
            phi[player] += marginal
            coalition = coalition | {player}
    # average across all n! orderings
    return {p: round(phi[p] / math.factorial(n), 2) for p in players}

print(shapley_values(v, ['promo', 'init']))
# {'promo': 7.5, 'init': 6.5}  # sums to 14 pp, the full joint effect

Left: coalition value estimates for each subset of the two causal forces. Right: Shapley allocation. Promo expiry receives 7.5 pp of the credit, initiative completion receives 6.5 pp, summing to 14 pp.

Image by Author

The thing worth repeating here. Shapley is an allocation rule. It distributes credit fairly given the coalition values you feed it, but it cannot fix bad inputs. If your v(S) estimates come from a confounded regression, your Shapley shares are confounded too. The math is clean; the causal work still has to happen upstream.
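A small sensitivity check shows how directly input error flows into the shares. The sketch uses the two-driver shortcut (standalone value plus half the surplus) and a hypothetical scenario in which the promo-alone estimate is off by one percentage point; `shapley_two` is an illustrative name.

```python
def shapley_two(v_a: float, v_b: float, v_ab: float) -> tuple:
    """Two-player Shapley: each standalone value plus half the interaction surplus."""
    surplus = v_ab - v_a - v_b
    return v_a + surplus / 2, v_b + surplus / 2

base = shapley_two(5, 4, 14)     # the article's coalition values, in pp
shifted = shapley_two(6, 4, 14)  # hypothetical: promo-alone estimate biased by +1 pp

print(base)
print(shifted)
print([round(100 * share / 14, 1) for share in base])  # shares as a percentage of 14 pp
```

A 1 pp error in one standalone estimate moves the split from 7.5/6.5 to 8/6: half a point added to one driver and taken from the other, with the total still summing to 14. The allocation has no way of knowing the input was wrong.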

Reading the result. A 7.5 to 6.5 split is not a signal to put 54% of your retention budget into pricing and 46% into customer success. It is a signal that you need both, and that the timing of the renewal offer matters as much as what is in it.

Choosing between the methods

There is no universally correct method here. The right choice depends on what question you are answering and what your data can actually support. In practice, I run more than one:

| Method | Estimand | Assumption | Tradeoffs |
| --- | --- | --- | --- |
| DiD | Avg. effect on promo-expired cohort | Parallel trends around renewal | Clean cohorts and pre-period; breaks under anticipation or correlation |
| Regression + Interaction | Main effects + interaction term | No confounders; overlap across cells | Quantifies the interaction; breaks under collinearity |
| Shapley attribution | Fair allocation of joint impact | Credible v(S) from above | Useful for budget framing; unstable when v(S) is noisy |

When the identification checks, the interaction model, and the attribution layer all point in the same direction, I am comfortable presenting the result. When they diverge, that is worth understanding before you bring anything to a stakeholder meeting. A sharp disagreement between methods is usually telling you something about which assumption is not holding.

Translating the effect into revenue and LTV

Getting a churn coefficient is not the same as getting a pricing recommendation. The same churn increase can still be net positive if the price lift is large enough. You have to propagate it forward before you know whether the change actually worked.

# LTV = expected revenue per customer over a fixed horizon (in months).
# survival[m] = probability the customer is still subscribed in month m.
# Multiply by monthly MRR and sum: undiscounted 2-year LTV.
def ltv(monthly_churn, monthly_mrr, horizon=24):
    months = np.arange(horizon)
    survival = (1 - monthly_churn) ** months
    return (survival * monthly_mrr).sum()

# Convert 6-month churn rates into monthly churn rates.
# (1 - p)^(1/6) is the monthly survival rate; subtracting from 1 gives monthly churn.
baseline_monthly = 1 - (1 - 0.08) ** (1/6)  # 0.0138 monthly churn
treated_monthly = 1 - (1 - 0.22) ** (1/6)   # 0.0406 monthly churn

old_mrr = 1_000  # pre-renewal monthly recurring revenue (MRR)
new_mrr = 1_130  # post-renewal MRR (+13% price increase)

baseline_ltv = ltv(baseline_monthly, old_mrr)  # $20,550
treated_ltv = ltv(treated_monthly, new_mrr)    # $17,546
# Net 2-year LTV change per customer: -$3,004
# The 13% price increase does not offset the accelerated churn.

# Breakeven: what new MRR would restore the baseline 2-year LTV?
price_grid = np.linspace(1_000, 1_600, 1_000)
ltv_grid = [ltv(treated_monthly, p) for p in price_grid]
breakeven = price_grid[np.searchsorted(ltv_grid, baseline_ltv)]
# Breakeven MRR: ~$1,324 (a 32% increase, not the 13% that shipped)

Reading the result. In this scenario, the 13% price increase pays for itself in the quarter it ships but eats through medium-term customer value. To break even on 2-year LTV at the treated churn rate (22% over six months), you would need to be charging roughly $1,324, a 32% increase rather than the 13% that went out. That is not a gap you close with a different price point. The underlying use-case problem has to be addressed first.
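Because churn is constant month to month in this setup, the survival sum is a geometric series, so the LTV and breakeven figures can be cross-checked without a price grid. This is a sketch using only the standard library; `survival_months` is an illustrative name.

```python
def survival_months(six_month_churn: float, horizon: int = 24) -> float:
    """Expected number of paid months over the horizon (closed-form geometric sum)."""
    s = (1 - six_month_churn) ** (1 / 6)  # monthly survival rate
    # sum of s**m for m = 0 .. horizon - 1
    return (1 - s ** horizon) / (1 - s)

baseline_ltv = 1_000 * survival_months(0.08)  # old MRR, baseline churn
treated_ltv = 1_130 * survival_months(0.22)   # new MRR, churn with both forces active
# Breakeven MRR: the price at which treated LTV equals baseline LTV.
breakeven_mrr = baseline_ltv / survival_months(0.22)

print(round(baseline_ltv), round(treated_ltv), round(breakeven_mrr, 1))
```

The closed form lands within a dollar of the grid-search breakeven, which returns the first grid point at or above the exact value. Breakeven here scales with the ratio of expected paid months, so it does not depend on the starting price beyond a proportional factor.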

The decomposition is the deliverable. The causal estimate is just the input.

A few closing pitfalls

- Correlated assignment. The land-and-expand sales motion creates a natural correlation between promo expiry and initiative completion. You sold the customer on a big initiative and gave them a year-one deal to get them moving. Now both things are expiring at the same time by design. Cross-sectional variation alone will not untangle them. You need timing variation, comparison cohorts, or an eligibility cutoff.

- Anticipation. The pre-period only stays clean if customers don’t react to the renewal quote before the official price-change date. When they do, the parallel-trends assumption breaks before the treatment even fires, and the DiD coefficient picks up the early reaction rather than the price shock itself. The event-study plot is your first line of defense; a slope in the pre-period weeks is the tell.

- Estimand drift. The most common mistake I see in renewal churn reads is bringing the wrong number to the meeting. The promo-cohort average effect, the interaction coefficient, and the Shapley allocation are three different answers to three different questions. Know which one you are presenting and why.

- Attribution without a decision. Shapley gets you to an allocation. It does not get you to a plan. A 54/46 split between price and use-case exhaustion is useful context for a conversation, not a budget instruction. Someone still has to decide what the actual retention intervention is.

All code in this article runs end to end on the synthetic dataset. The full notebook with the cohort-week panel construction, diagnostic plots, and sensitivity checks is on GitHub and runnable directly in Colab.

Staff Data Scientist focused on causal inference, experimentation, and decision science. I write about turning ambiguous business questions into decision-ready analysis.
