# Imports used in steps [10]-[12]. proxy_alive(), PORT and server_proc
# are defined in the earlier setup steps of this tutorial.
import os
import signal
import subprocess
import time

import pandas as pd
from openai import OpenAI

if proxy_alive():
    print("\n[10] Mixed 10-prompt workload…")

    # Five short, simple prompts followed by five harder ones, so the router
    # has a reason to send them to different model tiers.
    workload = [
        "Capital of France?",
        "Read foo.py",
        "Type hint for a list of dicts",
        "Lowercase: HELLO",
        "One-sentence summary of REST",
        "Refactor a callback chain into async/await with proper error handling",
        "Design a sharded multi-region key-value store with linearizable reads",
        "Analyze the asymptotic complexity of this code and prove the bound rigorously",
        "Debug why our gRPC stream stalls when the client TCP window saturates",
        "Compare and contrast B-trees and LSM-trees for write-heavy workloads",
    ]

    runs = []
    # Point the standard OpenAI client at the local NadirClaw proxy.
    client = OpenAI(base_url=f"http://localhost:{PORT}/v1", api_key="local")

    for p in workload:
        t0 = time.time()
        try:
            # model="auto" leaves the model choice to the proxy's router.
            r = client.chat.completions.create(
                model="auto",
                messages=[{"role": "user", "content": p}],
                max_tokens=140,
            )
            usage = getattr(r, "usage", None)
            runs.append({
                "prompt": p[:55],
                "model": r.model,  # model reported in the response
                "latency_s": round(time.time() - t0, 2),
                "in_tok": getattr(usage, "prompt_tokens", 0) if usage else 0,
                "out_tok": getattr(usage, "completion_tokens", 0) if usage else 0,
            })
        except Exception as e:
            # Record the failure and continue with the rest of the workload.
            runs.append({"prompt": p[:55], "model": "ERROR",
                         "latency_s": None, "in_tok": 0, "out_tok": 0,
                         "error": str(e)[:80]})

    rdf = pd.DataFrame(runs)
    print(rdf.to_string(index=False))

    # USD per token: list price per 1M tokens divided by 1e6.
    PRICE = {
        "flash": {"in": 0.30 / 1e6, "out": 2.50 / 1e6},
        "pro":   {"in": 1.25 / 1e6, "out": 10.0 / 1e6},
    }

    def price_for(model_str, in_t, out_t):
        """Cost of one request; any model name without "flash" is billed at the Pro rate."""
        m = (model_str or "").lower()
        tier = "flash" if "flash" in m else "pro"
        return in_t * PRICE[tier]["in"] + out_t * PRICE[tier]["out"]

    # Routed cost uses the model that served each request; the baseline prices
    # the same token counts as if every request had gone to Gemini 2.5 Pro.
    cost_routed = sum(price_for(row["model"], row["in_tok"], row["out_tok"])
                      for row in runs)
    cost_no_route = sum(price_for("gemini-2.5-pro", row["in_tok"], row["out_tok"])
                        for row in runs)

    print(f"\n[10] Cost (NadirClaw routed)      : ${cost_routed:.6f}")
    print(f"     Cost (always-Pro baseline)   : ${cost_no_route:.6f}")
    if cost_no_route > 0:
        print(f"     Estimated savings on this run: "
              f"{(1 - cost_routed / cost_no_route) * 100:.1f}%")

    print("\n[11] `nadirclaw report` (parses the JSONL request log):")
    rep = subprocess.run(["nadirclaw", "report"], capture_output=True, text=True, timeout=60)
    print(rep.stdout or rep.stderr)
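The savings figure in step [10] is a per-token price comparison over the same token counts. The sketch below isolates that arithmetic so it can be checked without a running proxy. It reuses the script's price table and `price_for`. The helper `estimated_savings` and the `demo_runs` token counts are hypothetical and made up for illustration; they are not measured results.

```python
# Per-token prices in USD (per-1M list price / 1e6), as in the script above.
PRICE = {
    "flash": {"in": 0.30 / 1e6, "out": 2.50 / 1e6},
    "pro": {"in": 1.25 / 1e6, "out": 10.0 / 1e6},
}


def price_for(model_str, in_t, out_t):
    """Cost of one request; names without "flash" are billed at the Pro rate."""
    tier = "flash" if "flash" in (model_str or "").lower() else "pro"
    return in_t * PRICE[tier]["in"] + out_t * PRICE[tier]["out"]


def estimated_savings(runs):
    """Hypothetical helper: (routed cost, always-Pro cost, percent saved)."""
    routed = sum(price_for(r["model"], r["in_tok"], r["out_tok"]) for r in runs)
    baseline = sum(price_for("gemini-2.5-pro", r["in_tok"], r["out_tok"]) for r in runs)
    # Guard against division by zero when every request failed (0 tokens).
    pct = (1 - routed / baseline) * 100 if baseline > 0 else 0.0
    return routed, baseline, pct


# Made-up token counts for two requests (illustrative only, not measured).
demo_runs = [
    {"model": "gemini-2.5-flash", "in_tok": 10, "out_tok": 20},
    {"model": "gemini-2.5-pro", "in_tok": 30, "out_tok": 100},
]
routed, baseline, pct = estimated_savings(demo_runs)
print(f"routed=${routed:.6f} baseline=${baseline:.6f} savings={pct:.1f}%")
```

The baseline reuses the routed requests' token counts. A Pro model might produce a different number of output tokens for the same prompt, so the percentage is an estimate, not a measured saving.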

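if proxy_alive():
    print("\n[12] Stopping the proxy…")
    try:
        # SIGTERM the proxy's whole process group so any children it spawned exit
        # too. This assumes server_proc was started in its own process group
        # (e.g. start_new_session=True); otherwise killpg would also signal this
        # script. Where killpg is unavailable (Windows), fall back to terminate().
        if hasattr(os, "killpg"):
            os.killpg(os.getpgid(server_proc.pid), signal.SIGTERM)
        else:
            server_proc.terminate()
        server_proc.wait(timeout=10)
    except Exception:
        # Graceful shutdown failed or timed out: force-kill the process.
        try:
            server_proc.kill()
        except Exception:
            pass
    print("  ✓ proxy stopped.")

print("\nDone. 🎉")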
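Step [12] uses a common shutdown pattern: signal the process group with SIGTERM, wait, then escalate to a hard kill. The sketch below reproduces that pattern with a dummy child process instead of the NadirClaw proxy, assuming a POSIX system (on Windows the code takes the `terminate()` branch). The helper name `stop_process_tree` and the sleeping child are hypothetical, for illustration only.

```python
import os
import signal
import subprocess
import sys


def stop_process_tree(proc, timeout=10):
    """Hypothetical helper: SIGTERM the child's process group, SIGKILL if it hangs."""
    try:
        if hasattr(os, "killpg"):
            # Safe only because the child was started with start_new_session=True,
            # so its process group does not contain this script.
            os.killpg(os.getpgid(proc.pid), signal.SIGTERM)
        else:
            proc.terminate()  # no killpg on Windows
        proc.wait(timeout=timeout)
    except subprocess.TimeoutExpired:
        proc.kill()  # escalate: graceful shutdown took too long
        proc.wait()
    except ProcessLookupError:
        pass  # the process (or its group) no longer exists


# Stand-in for the proxy: a Python child that just sleeps (illustrative only).
child = subprocess.Popen(
    [sys.executable, "-c", "import time; time.sleep(60)"],
    start_new_session=True,
)
stop_process_tree(child)
print("exit code:", child.returncode)
```

Unlike the tutorial's broad `except Exception`, this sketch catches `TimeoutExpired` and `ProcessLookupError` explicitly. The escalation path is therefore taken only when the process is still running after the timeout.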

Trending

- My Insurance Won’t Cover GLP-1 Drugs. What Now?

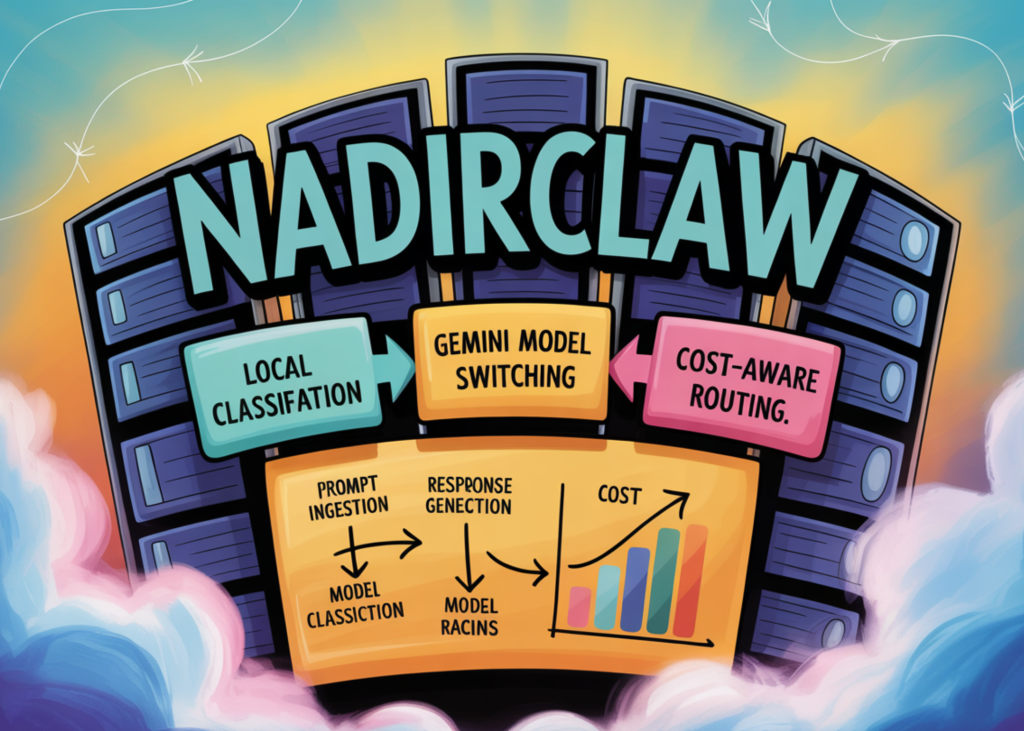

- How to Build a Cost-Aware LLM Routing System with NadirClaw Using Local Prompt Classification and Gemini Model Switching

- French national shows symptoms on return from hantavirus-hit ship

- Batch or Stream? The Eternal Data Processing Dilemma

- OpenClaw vs Hermes Agent: Why Nous Research’s Self-Improving Agent Now Leads OpenRouter’s Global Rankings

- LLM Summarizers Skip the Identification Step

- NVIDIA AI Just Released cuda-oxide: An Experimental Rust-to-CUDA Compiler Backend that Compiles SIMT GPU Kernels Directly to PTX

- NVIDIA AI Releases Star Elastic: One Checkpoint that Contains 30B, 23B, and 12B Reasoning Models with Zero-Shot Slicing

Next Article My Insurance Won’t Cover GLP-1 Drugs. What Now?

Related Posts

Add A Comment

Subscribe to Updates

Get the latest creative news from FooBar about art, design and business.

© 2026 insureai360. Designed by Pro.