model fails not because the algorithm is weak, but because the variables were not prepared in a way the model can properly understand?

In credit risk modeling, we often focus on model choice, performance metrics, feature selection, or validation. But before estimating any coefficient, another question deserves attention: how should each variable enter the model?

A raw variable is not always the best representation of risk.

A continuous variable may have a non-linear relationship with default. A categorical variable may contain too many modalities. Some variables may include outliers, missing values, unstable distributions, or categories with very few observations. If these issues are ignored, the model may become unstable, difficult to interpret, and less reliable in production.

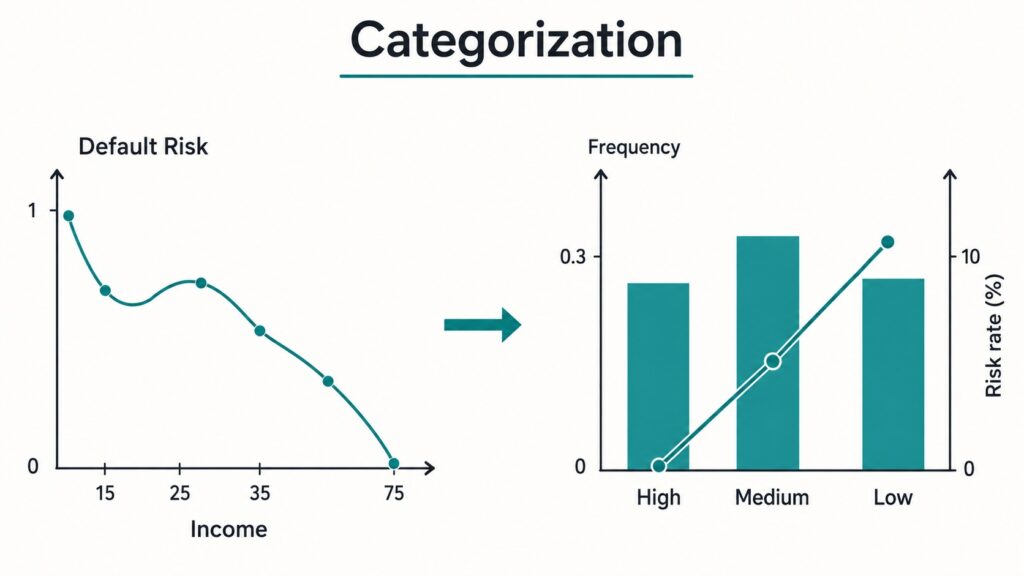

This is where categorization becomes important.

Categorization, also called coarse classification, grouping, classing, or binning, consists of transforming raw variable values into a smaller number of meaningful groups. In credit scoring, these groups are not created only for convenience. They are created to make the relationship between the variable and default risk clearer, more stable, and easier to use in a model.

This step is particularly useful when the final model is a logistic regression, which remains widely used in credit scoring because it is transparent, interpretable, and easy to translate into a scorecard.

For categorical variables, categorization helps reduce the number of modalities. For continuous variables, it helps capture non-linear risk patterns, reduce the impact of outliers, handle missing values, improve interpretability, and prepare the variables for Weight of Evidence transformation.

In this article, we will study why categorization is an essential step in credit scoring and how it can be used to transform raw variables into stable risk classes.

In Section 1, we explain why categorization is useful for both categorical and continuous variables, especially in the context of logistic regression.

In Section 2, we show how to analyze the relationship between continuous variables and default risk using graphical monotonicity analysis.

In Section 3, we introduce the main categorization methods, including equal-interval binning, equal-frequency binning, Chi-square-based grouping, and Weight of Evidence-based grouping.

Finally, in Section 4, we focus on the discretization of continuous variables using Weight of Evidence and show how this approach helps prepare variables for an interpretable credit scoring model.

1. Why Categorization Is Important in Credit Scoring

When building a credit scoring model, variables can be either categorical or continuous.

Categorization can be useful for both types of variables, but the motivation is not the same.

For categorical variables, the main objective is often to reduce the number of modalities and group categories with similar risk behavior.

For continuous variables, the objective is usually to transform a raw numerical scale into a smaller number of ordered risk classes.

In both cases, the goal is the same: create variables that are statistically meaningful, economically interpretable, and stable over time.

1.1 Categorization Reduces Dimensionality

Let us start with categorical variables.

Suppose we have a variable called industry_sector with 50 distinct values.

If we use this variable directly in a logistic regression model, we need to create dummy variables.

Because of collinearity, one category must be used as the reference category. Therefore, for 50 categories, we need:

50−1=49 dummy variables.

That means the model must estimate 49 parameters for only one variable.
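The count can be checked with a minimal sketch. It assumes a hypothetical `industry_sector` column filled with 50 made-up sector labels; `pd.get_dummies` with `drop_first=True` drops one level, which plays the role of the reference category.

```python
import pandas as pd

# Hypothetical data: 50 made-up sector labels, each appearing twice
sectors = [f"sector_{k:02d}" for k in range(50)] * 2
df = pd.DataFrame({"industry_sector": sectors})

# drop_first=True removes one category, which becomes the reference level
dummies = pd.get_dummies(df["industry_sector"], drop_first=True)

n_categories = df["industry_sector"].nunique()
n_dummies = dummies.shape[1]
print(n_categories, n_dummies)
```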

This can quickly become a problem.

A categorical variable with too many modalities may lead to unstable coefficients, overfitting, poor robustness, difficulty in interpretation, and higher complexity during monitoring.

By grouping similar categories together, we reduce the number of parameters that must be estimated.

For example, instead of keeping 50 industry sectors, we may group them into 5 or 6 risk classes. These groups may be based on observed default rates, business expertise, sample size constraints, or a combination of these criteria.

The result is a model that is more compact, more stable, and easier to interpret.

So, one of the first benefits of categorization is dimension reduction.
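As a rough illustration of grouping by observed default rate, the sketch below uses a made-up sample with six sectors and illustrative thresholds (20% and 50%). Neither the data nor the thresholds come from the article's dataset; in practice the grouping would also reflect business expertise and sample size constraints.

```python
import pandas as pd

# Hypothetical sample: 6 sectors with made-up default flags (1 = default)
data = pd.DataFrame({
    "industry_sector": ["A"] * 10 + ["B"] * 10 + ["C"] * 10
                       + ["D"] * 10 + ["E"] * 10 + ["F"] * 10,
    "def": [1] + [0] * 9          # A: 10% default rate
           + [1] + [0] * 9        # B: 10%
           + [1] * 3 + [0] * 7    # C: 30%
           + [1] * 3 + [0] * 7    # D: 30%
           + [1] * 6 + [0] * 4    # E: 60%
           + [1] * 6 + [0] * 4,   # F: 60%
})

# Observed default rate per sector
rate_by_sector = data.groupby("industry_sector")["def"].mean()

# Assumed thresholds (illustrative only) separating low / medium / high risk
risk_class = pd.cut(
    rate_by_sector,
    bins=[0.0, 0.20, 0.50, 1.0],
    labels=["low_risk", "medium_risk", "high_risk"],
    include_lowest=True,
).astype(str)

# Map each sector to its risk class: 6 modalities become 3
data["industry_sector_grp"] = data["industry_sector"].map(risk_class)
print(data.groupby("industry_sector_grp")["def"].mean())
```

With three classes instead of six sectors, the logistic regression would estimate two coefficients for this variable instead of five.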

1.2 Categorization Helps Capture Non-Linear Risk Patterns

For continuous variables, categorization can also be very useful.

But before deciding whether to categorize a continuous variable, we should first understand its relationship with default risk.

A very simple way to do this is to plot the default rate against the variable.

For example, for a continuous variable such as person_income, we can divide it into several intervals and calculate the default rate in each interval.

Then, we plot:

- the binned values of the variable on the x-axis,

- the default rate on the y-axis.

This allows us to visually inspect the risk pattern.

If the relationship is monotonic, then the variable already has a clear risk direction.

For example:

- As income increases, default rate decreases.

- As the loan interest rate increases, the default rate increases.

In this case, the relationship is easy to understand.

However, if the relationship is non-monotonic, the situation becomes more complex.

Suppose default risk decreases for low to medium income levels, but then increases again for very high income levels. A simple logistic regression model may not capture this pattern properly because it estimates a linear effect between the variable and the log-odds of default.

The logistic regression model has the following form:

$$\log \left( \frac{P(Y = 1 \mid X)}{1 - P(Y = 1 \mid X)} \right) = \beta_0 + \beta_1 X$$

where Y=1 represents default, and X is an explanatory variable.

This equation means that the model assumes a linear relationship between X and the log-odds of default.

If the effect of X is not linear, the model may miss an important part of the risk structure.

Non-linear models such as neural networks, decision trees, gradient boosting, or support vector machines can naturally capture complex relationships.

But in credit scoring, logistic regression is still widely used because it is simple, transparent, and easy to explain.

By categorizing continuous variables into risk groups, we can introduce part of the non-linearity into a linear model.

That is one of the most important reasons why binning is so common in scorecard modeling.
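One possible way to write the model after binning is shown below. The notation is introduced here for illustration only: X is assumed to be split into K classes B_1, ..., B_K, with B_1 used as the reference class.

```latex
\log \left( \frac{P(Y = 1 \mid X)}{1 - P(Y = 1 \mid X)} \right)
= \beta_0 + \sum_{k=2}^{K} \beta_k \, \mathbb{1}\{X \in B_k\}
```

Each class gets its own coefficient, so the log-odds can rise and fall from one class to the next (a step function of X), even though the model remains linear in its parameters.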

1.3 Categorization Reduces the Impact of Outliers

Another important benefit of categorization is outlier management.

Continuous variables often contain extreme values.

For example:

- very high income,

- extremely large loan amounts,

- unusual employment length,

- abnormal credit utilization ratios.

If these values are used directly in a logistic regression, they can have a strong influence on the estimated coefficients.

When we categorize the variable, outliers are assigned to a specific bin.

For example, all income values above a certain threshold can be grouped into the same category.

This reduces the influence of extreme observations and makes the model more robust.

Instead of allowing an extreme value to strongly affect the model, we only use the risk information contained in its group.
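A minimal sketch of this idea, assuming made-up incomes and illustrative cut-offs: the last bin is open-ended, so a very large value lands in the same class as any other high income.

```python
import numpy as np
import pandas as pd

# Hypothetical incomes, including one extreme value
income = pd.Series([18_000, 32_000, 47_000, 65_000, 150_000, 2_500_000])

# Assumed cut-offs (illustrative only); the last bin has no upper limit
edges = [-np.inf, 30_000, 60_000, 100_000, np.inf]
labels = ["<=30k", "30k-60k", "60k-100k", ">100k"]

income_band = pd.cut(income, bins=edges, labels=labels)
print(income_band.tolist())
```

Here the income of 2,500,000 and the income of 150,000 receive the same class, so the extreme observation no longer has a disproportionate pull on the estimated coefficient.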

1.4 Categorization Helps Deal with Missing Values

Missing values are very common in credit scoring datasets.

A customer may not provide income information.

An employment length may be missing.

A credit history variable may not be available.

One way to handle missing values is to create a dedicated category for them.

This allows the model to learn the specific behavior of individuals with missing values.

This is very important because missingness is not always random.

In credit scoring, a missing value may itself contain risk information.

For example, customers who do not report their income may have a different default behavior compared with customers who provide it.

By creating a missing category, we allow the model to capture this behavior.
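One way to build such a category with pandas is sketched below. The incomes, cut-offs, and the label `missing` are assumptions made for the example.

```python
import numpy as np
import pandas as pd

# Hypothetical incomes with two missing values
income = pd.Series([25_000, np.nan, 52_000, 80_000, np.nan])

# Assumed cut-offs (illustrative only)
income_band = pd.cut(
    income,
    bins=[-np.inf, 30_000, 60_000, np.inf],
    labels=["low", "medium", "high"],
)

# pd.cut leaves missing inputs as NaN; give them their own class
income_band = income_band.cat.add_categories("missing").fillna("missing")
print(income_band.value_counts())
```

The `missing` class can then receive its own default rate and its own WoE, like any other class.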

1.5 Categorization Improves Interpretability

Interpretability is one of the most important requirements in credit scoring.

A credit scoring model is not just a black-box prediction engine.

It is often used by:

- risk analysts,

- credit officers,

- model validation teams,

- regulators,

- business decision-makers.

When variables are categorized, the model becomes much easier to explain.

For example, instead of saying:

A one-unit increase in loan interest rate increases the log-odds of default by a certain amount.

We can say:

Customers with an interest rate above 15% have significantly higher default risk than customers with an interest rate below 10%.

This interpretation is more intuitive.

It is also easier to translate into scorecard points.

1.6 Categorization Improves Model Stability

A good credit scoring model should not only perform well during development.

It should also remain stable in production.

Categorization helps make variables less sensitive to small changes in the data.

For example, if a customer’s income changes slightly from 2990 to 3010, the raw numerical value changes.

But if both values belong to the same income band, the categorized value remains the same.

This makes the model more stable over time.

Categorization is also very useful for monitoring.

Once variables are grouped into classes, we can easily track their distribution in production and compare it with the development sample using indicators such as the Population Stability Index.
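The Population Stability Index can be sketched as follows, using the usual formula: the sum over classes of (actual share - expected share) × ln(actual share / expected share). The function name, the `epsilon` guard, and the class shares are assumptions made for the example.

```python
import math


def population_stability_index(expected, actual, epsilon=1e-6):
    """PSI between two class distributions given as lists of shares."""
    psi = 0.0
    for e, a in zip(expected, actual):
        # epsilon guards against empty classes (log of zero)
        e, a = max(e, epsilon), max(a, epsilon)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical shares of three income classes
dev_shares = [0.30, 0.40, 0.30]    # development sample
prod_shares = [0.25, 0.40, 0.35]   # production sample

psi = population_stability_index(dev_shares, prod_shares)
print(round(psi, 4))
```

Identical distributions give a PSI of zero, and the index grows as the production distribution drifts away from the development one.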

To summarize this first part, we categorize variables mainly to reduce dimensionality, capture non-linear risk patterns, handle missing values and outliers, improve interpretability, and strengthen stability.

2. Graphical Monotonicity Analysis Before Binning

Before categorizing a continuous variable, we need to understand its relationship with the default rate.

This step is important because categorization should not be arbitrary.

The goal is not only to create bins. The goal is to create bins that make sense from a risk perspective.

A good binning should answer the following questions:

- Does the variable have a clear relationship with default risk?

- Is the relationship increasing or decreasing?

- Is the relationship monotonic or non-monotonic?

To answer these questions, we start with a graphical monotonicity analysis.

A variable is monotonic with respect to default risk if the default rate moves in one direction when the variable increases.

For example, if income increases and default risk decreases, the relationship is monotonic decreasing.

If interest rate increases and default risk increases, the relationship is monotonic increasing.
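This definition translates into a small check on the default rates of the ordered bins. The helper below is a hypothetical illustration, not part of any library, and the default rates are made up. It uses weak inequalities, so two equal consecutive rates do not break monotonicity.

```python
def risk_direction(default_rates):
    """Classify a sequence of default rates ordered by increasing bin."""
    pairs = list(zip(default_rates, default_rates[1:]))
    if all(b >= a for a, b in pairs):
        return "increasing"
    if all(b <= a for a, b in pairs):
        return "decreasing"
    return "non-monotonic"


# Hypothetical default rates by ordered bin
print(risk_direction([0.30, 0.22, 0.15, 0.09]))  # income-like pattern
print(risk_direction([0.05, 0.11, 0.19, 0.31]))  # interest-rate-like pattern
print(risk_direction([0.20, 0.12, 0.10, 0.18]))  # U-shaped pattern
```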

Monotonicity is important in credit scoring because it makes the model easier to interpret.

A monotonic variable has a clear risk meaning.

For example:

- Higher income means lower risk.

- Higher loan burden means higher risk.

- A higher interest rate means higher risk.

- Longer employment length means lower risk.

These relationships are easy to explain and usually consistent with business intuition.

However, if the relationship is not monotonic, the variable may require more careful treatment.

A non-monotonic pattern can indicate:

- a real non-linear risk effect,

- noisy data,

- sparse intervals,

- outliers,

- interactions with other variables,

- instability across datasets.

This is why we should always inspect the default rate curve before deciding how to bin a variable.

2.1 Equal-Interval Binning for Visual Diagnosis

A simple first approach consists of dividing the variable into intervals of equal width. This is called equal-interval binning.

Suppose a variable takes the following values:

1000, 1200, 1300, 1400, 1800, 2000

The minimum value is 1000, and the maximum value is 2000.

If we want to create two equal-width bins, the width is:

$$\frac{2000 - 1000}{2} = 500$$

So we obtain:

Bin 1: 1000 to 1500

Bin 2: 1500 to 2000

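Then, for each bin, we calculate the default rate, that is, the number of defaults in the bin divided by the number of observations in the bin.

This gives us a table with one row per bin, showing the number of observations, the number of defaults, and the default rate.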

Then we plot the default rate by bin.

This plot gives a first intuition about the shape of the relationship.
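The small example above can be reproduced with `pd.cut`. The six values come from the text; the default flags are made up for the illustration, since the example does not specify them.

```python
import pandas as pd

# The six values from the example, with hypothetical default flags
df = pd.DataFrame({
    "value": [1000, 1200, 1300, 1400, 1800, 2000],
    "def":   [1, 0, 1, 0, 0, 0],   # made-up flags, 1 = default
})

# Two equal-width bins of width 500 between the minimum and the maximum
df["value_bin"] = pd.cut(df["value"], bins=[1000, 1500, 2000], include_lowest=True)

# One row per bin: observations, defaults, default rate
summary = (
    df.groupby("value_bin", observed=True)["def"]
    .agg(n_obs="count", n_defaults="sum", default_rate="mean")
    .reset_index()
)
print(summary)
```

Even on this tiny sample, the two bins do not hold the same number of observations: four values fall in the first bin and two in the second.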

Equal-interval binning is simple and easy to understand. However, it may create bins with very different numbers of observations, especially when the variable is highly skewed.

For this reason, equal-frequency binning is often preferred for exploratory monotonicity analysis.

2.2 Equal-Frequency Binning for Risk Curves

Equal-frequency binning divides the variable into bins containing approximately the same number of observations.

For example, decile binning divides the sample into 10 groups, each containing around 10% of the observations.

This approach is useful because each bin has enough data to calculate a more reliable default rate.

In Python, this can be done with pd.qcut.

However, it is important to note the difference:

- pd.cut performs equal-width binning;

- pd.qcut performs equal-frequency binning.

This distinction matters because the interpretation of the bins is not the same.

In our case, we use equal-frequency binning to study the risk pattern of continuous variables.
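The difference between the two functions is easy to see on a skewed variable. The values below are made up for the illustration.

```python
import pandas as pd

# Hypothetical right-skewed variable: many small values, a few large ones
values = pd.Series([1, 2, 3, 4, 5, 6, 7, 8, 50, 100])

equal_width = pd.cut(values, bins=2)   # two intervals of equal width
equal_freq = pd.qcut(values, q=2)      # two intervals with equal counts

width_counts = equal_width.value_counts(sort=False).tolist()
freq_counts = equal_freq.value_counts(sort=False).tolist()
print(width_counts, freq_counts)
```

With equal-width bins, nine of the ten observations fall into the first interval, which leaves a single observation to estimate the default rate of the second one. With equal-frequency bins, each interval holds five observations.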

2.3 Dataset and Selected Variables

In previous articles, we performed several important steps on the same dataset.

We already covered:

- exploratory data analysis,

- variable preselection,

- stability analysis,

- monotonicity analysis over time,

- comparison between train, test, and out-of-time datasets.

After these steps, we selected the most relevant variables for modeling.

In this article, we focus on the categorization of continuous variables. The qualitative variables already had a limited number of modalities, and based on the previous analysis, their stability and monotonicity were acceptable.

Therefore, our objective here is to study the continuous variables graphically, understand their relationship with default risk, and define an appropriate discretization strategy.

The selected continuous variables are:

- person_income

- person_emp_length

- loan_int_rate

- loan_percent_income

2.4 Python Code for Default Rate Curves

There is no native Python function in pandas or scikit-learn that performs a full credit-scoring monotonicity diagnosis exactly as required for scorecard modeling.

So we need either to code the procedure ourselves or use a specialized scorecard library.

Here, we code it manually with pandas and matplotlib.

    import pandas as pd
    import matplotlib.pyplot as plt


    def plot_default_rate_ax(data, variable, target, bins=10, ax=None):
        """
        Plot default rate by binned numerical variable on a given matplotlib axis.
        """
        # Create a new axis when none is provided
        if ax is None:
            _, ax = plt.subplots()

        df = data[[variable, target]].copy()

        # Create equal-frequency bins (duplicate edges are dropped)
        df[f"{variable}_bin"] = pd.qcut(
            df[variable],
            q=bins,
            duplicates="drop"
        )

        # Compute default rate by bin
        summary = (
            df.groupby(f"{variable}_bin", observed=True)[target]
            .mean()
            .reset_index()
        )

        # Convert intervals to strings for plotting
        summary[f"{variable}_bin"] = summary[f"{variable}_bin"].astype(str)

        # Plot
        ax.plot(
            summary[f"{variable}_bin"],
            summary[target],
            marker="o"
        )
        ax.set_title(f"Default rate by {variable}")
        ax.set_xlabel(variable)
        ax.set_ylabel("Default rate")
        ax.tick_params(axis="x", rotation=45)

        return ax


    variables = [
        "person_income",
        "person_emp_length",
        "loan_int_rate",
        "loan_percent_income"
    ]

    fig, axes = plt.subplots(2, 2, figsize=(16, 10))
    axes = axes.flatten()

    # train_imputed is the training DataFrame prepared in the previous articles
    for ax, variable in zip(axes, variables):
        plot_default_rate_ax(
            train_imputed,
            variable=variable,
            target="def",
            bins=10,
            ax=ax
        )

    plt.tight_layout()
    plt.show()

After plotting the default rate curves, we can analyze the risk direction of each variable.

For person_income, we generally expect the default rate to decrease when income increases.

This makes sense because customers with higher income usually have more repayment capacity.

For person_emp_length, we also expect the default rate to decrease when employment length increases.

A longer employment history may indicate more professional stability.

For loan_int_rate, we expect the default rate to increase when the interest rate increases.

This is coherent because higher interest rates are often associated with riskier borrowers.

For loan_percent_income, we expect the default rate to increase when the loan amount becomes larger relative to income.

This variable measures the burden of the loan compared with the borrower’s income. A higher value usually means more repayment pressure.

If the observed curves confirm these expectations, then the variables are coherent from a business perspective.

In our case, the graphical analysis shows that the selected variables have meaningful monotonic patterns.

The default rate decreases when person_income and person_emp_length increase. On the other hand, the default rate increases when loan_int_rate and loan_percent_income increase.

This is exactly what we expect in credit risk modeling.

3. Main Categorization Methods

Once we understand the relationship between each continuous variable and the default rate, we can define a categorization strategy.

There are many ways to categorize a variable.

Some methods are simple and unsupervised. They do not use the target variable:

- equal-interval binning,

- equal-frequency binning.

Others are supervised. They use the default variable to create risk-based groups:

- Chi-square-based grouping,

- Weight of Evidence-based grouping.

In credit scoring, supervised methods are often preferred because the goal is not only to divide the variable into intervals. The goal is to create intervals that are meaningful in terms of default risk.

In this section, we present the two supervised methods in more detail.

3.1 Chi-Square-Based Grouping

Chi-square-based grouping is a supervised binning method. The idea is simple. We start with many initial bins. Then we compare adjacent bins. If two adjacent bins have similar default behavior, we merge them.

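For two adjacent bins i and j, we build a 2×2 contingency table that crosses the bin (i or j) with the outcome (default or non-default), so that each cell holds a count of observations.

Then we apply a Chi-square test to this table.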

The Chi-square statistic is:

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

where:

- O is the observed frequency,

- E is the expected frequency under independence.

The null hypothesis is:

H0: The two bins have the same default distribution.

The alternative hypothesis is:

H1: The two bins have different default distributions.

If the test does not reject H0, the two bins have similar default behavior and we can merge them.

The procedure is repeated until a smaller number of stable classes is obtained.

The advantage of this method is that it uses the default variable directly.

The final groups are therefore more aligned with risk.

However, the method must be used carefully.

With very large samples, small differences may become statistically significant. With very small samples, the test may not be reliable.

This is why statistical binning must always be combined with business judgment.
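The test for one pair of adjacent bins can be sketched in plain Python. The function name and the counts are made up for the illustration. The sketch applies no continuity correction and assumes that every expected count is positive. The value 3.841 is the 5% critical value of the Chi-square distribution with one degree of freedom.

```python
def chi_square_2x2(bad_i, good_i, bad_j, good_j):
    """Chi-square statistic for two adjacent bins (2x2 contingency table)."""
    observed = [[bad_i, good_i], [bad_j, good_j]]
    row_totals = [sum(row) for row in observed]
    col_totals = [bad_i + bad_j, good_i + good_j]
    total = sum(row_totals)

    stat = 0.0
    for r in range(2):
        for c in range(2):
            # Expected count under independence of bin and default status
            expected = row_totals[r] * col_totals[c] / total
            stat += (observed[r][c] - expected) ** 2 / expected
    return stat


# Critical value of the Chi-square distribution, 1 degree of freedom, 5% level
CRITICAL_VALUE = 3.841

# Hypothetical counts (defaults, non-defaults) for adjacent bins
similar = chi_square_2x2(20, 180, 22, 178)     # 10% vs 11% default rate
different = chi_square_2x2(20, 180, 60, 140)   # 10% vs 30% default rate

print(similar < CRITICAL_VALUE)     # True: candidates for merging
print(different < CRITICAL_VALUE)   # False: keep the bins separate
```

In a full procedure, this comparison would be repeated over all adjacent pairs, merging the most similar pair at each step.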

3.2 Weight of Evidence-Based Grouping

Another very common method in credit scoring is based on Weight of Evidence, also called WoE. WoE measures the relative distribution of events and non-events in each category.

In this article, we define:

- Bad = default (def = 1) = Events

- Good = non-default (def = 0) = Non-Events

For a given category i, the WoE is defined as:

$$\text{WoE}_i = \ln \left( \frac{\%\text{Events}_i}{\%\text{NonEvents}_i} \right)$$

With this convention:

- Positive WoE means a higher concentration of events (defaults);

- Negative WoE means a higher concentration of non-events (goods);

- WoE close to zero means the bin has a risk level close to the population average.
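As a quick worked example with made-up figures, suppose a bin contains 30% of all defaults and 15% of all non-defaults:

```latex
\text{WoE} = \ln \left( \frac{0.30}{0.15} \right) = \ln(2) \approx 0.693
```

The value is positive because defaults are over-represented in this bin. Swapping the two shares gives ln(0.5) ≈ -0.693, the mirror case of a bin where non-defaults are over-represented.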

WoE-based grouping consists of merging adjacent bins with similar WoE values. The objective is to create stable groups with a clear risk order.

In practice, the procedure usually starts by cutting continuous variables into initial fine bins, often using equal-frequency intervals. Then, adjacent intervals are progressively merged when their WoE values are close or when one of them does not bring enough risk differentiation.

The idea is not only to reduce the number of classes. The idea is to create classes that bring useful risk information.

For example, if a bin has a WoE very close to zero, it may not provide strong discrimination. In that case, it can sometimes be merged with an adjacent bin, provided that the merge remains coherent from a business and risk perspective.

To maximize risk differentiation between final classes, it is also useful to check that the default rates are sufficiently separated. A practical rule is to keep a relative difference of at least 30% in risk between adjacent classes, while ensuring that each final class contains at least 1% of the population.

These thresholds should not be applied mechanically, but they provide useful safeguards:

- avoid creating classes that are too small;

- avoid keeping classes with almost identical risk levels;

- avoid overfitting the development sample;

- keep the final grouping interpretable and stable.
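These two safeguards can be turned into a simple check. The sketch below is a hypothetical helper, not a standard function. It assumes that the relative difference between two adjacent classes is measured against the lower of the two default rates, which is one possible reading of the rule. The shares and default rates are made up.

```python
def check_final_classes(shares, default_rates, min_share=0.01, min_rel_diff=0.30):
    """Return a list of messages for an ordered list of final classes."""
    issues_found = []
    for k, share in enumerate(shares):
        if share < min_share:
            issues_found.append(f"class {k} holds only {share:.1%} of the population")
    for k in range(len(default_rates) - 1):
        low, high = sorted([default_rates[k], default_rates[k + 1]])
        # Assumption: the relative gap is measured against the lower rate
        rel_diff = (high - low) / low if low > 0 else float("inf")
        if rel_diff < min_rel_diff:
            issues_found.append(f"classes {k} and {k + 1} differ by only {rel_diff:.0%}")
    return issues_found


# Hypothetical final classes: population shares and default rates
shares = [0.400, 0.355, 0.240, 0.005]
rates = [0.30, 0.20, 0.18, 0.08]
issues = check_final_classes(shares, rates)
print(issues)
```

In this example, the last class is too small and the two middle classes have default rates that are too close, so both would be candidates for merging.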

This method is especially useful when the final model is a logistic regression, because WoE-transformed variables are well aligned with the log-odds structure of the model.
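The link with the log-odds can be made explicit. The notation here is introduced for illustration: B_i and G_i are the numbers of events and non-events in class i, and B and G are their totals over the whole sample.

```latex
\ln \left( \frac{B_i}{G_i} \right)
= \ln \left( \frac{B_i / B}{G_i / G} \right) + \ln \left( \frac{B}{G} \right)
= \text{WoE}_i + \ln \left( \frac{B}{G} \right)
```

The observed log-odds of default in class i are therefore equal to the WoE of the class plus a constant (the log-odds of the whole sample). The WoE lives on the same scale as the left-hand side of the logistic regression equation.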

4. Python Implementation of WoE-Based Categorization

We now move to the Python implementation.

The objective is to build a simple and transparent framework to analyze binned variables and support the final categorization decision.

We need three main tools.

The first tool computes the WoE for a variable given a predefined number of bins.

The second tool summarizes the number of observations and the default rate for each discretized class.

The third tool analyzes the evolution of the default rate by class over time. This will help us assess both monotonicity and stability.

This is important because a binning is not good only because it works on the training sample. It must also remain stable over time and across modeling datasets such as train, test, and out-of-time samples.

In other words, a good categorization must satisfy three conditions:

- it must be statistically meaningful;

- it must be coherent from a credit risk perspective;

- it must be stable over time.

    import numpy as np
    import pandas as pd


    def iv_woe(data, target, bins=5, show_woe=False, epsilon=1e-16):
        """
        Compute the Information Value (IV) and Weight of Evidence (WoE)
        for all explanatory variables in a dataset.

        Numerical variables with more than 10 unique values are first discretized
        into quantile-based bins. Categorical variables and numerical variables
        with few unique values are used as they are.

        Parameters
        ----------
        data : pandas DataFrame
            Input dataset containing the explanatory variables and the target.
        target : str
            Name of the binary target variable.
            The target should be coded as 1 for event/default and 0 for non-event/non-default.
        bins : int, default=5
            Number of quantile bins used to discretize continuous variables.
        show_woe : bool, default=False
            If True, display the detailed WoE table for each variable.
        epsilon : float, default=1e-16
            Small value used to avoid division by zero and log(0).

        Returns
        -------
        newDF : pandas DataFrame
            Summary table containing the Information Value of each variable.
        woeDF : pandas DataFrame
            Detailed WoE table for all variables and all groups.
        """
        # Initialize output DataFrames
        newDF = pd.DataFrame()
        woeDF = pd.DataFrame()

        # Get all column names
        cols = data.columns

        # Run WoE and IV calculation on all explanatory variables
        for ivars in cols[~cols.isin([target])]:

            # If the variable is numerical and has many unique values,
            # discretize it into quantile-based bins
            if (data[ivars].dtype.kind in "bifc") and (len(np.unique(data[ivars].dropna())) > 10):
                binned_x = pd.qcut(
                    data[ivars],
                    bins,
                    duplicates="drop"
                )
                d0 = pd.DataFrame({
                    "x": binned_x,
                    "y": data[target]
                })

            # Otherwise, use the variable as it is
            else:
                d0 = pd.DataFrame({
                    "x": data[ivars],
                    "y": data[target]
                })

            # Compute the number of observations and events in each group
            d = (
                d0.groupby("x", as_index=False, observed=True)
                .agg({"y": ["count", "sum"]})
            )

            # Rename columns
            d.columns = ["Cutoff", "N", "Events"]

            # Compute the percentage of events in each group
            d["% of Events"] = (
                np.maximum(d["Events"], epsilon)
                / (d["Events"].sum() + epsilon)
            )

            # Compute the number of non-events in each group
            d["Non-Events"] = d["N"] - d["Events"]

            # Compute the percentage of non-events in each group
            d["% of Non-Events"] = (
                np.maximum(d["Non-Events"], epsilon)
                / (d["Non-Events"].sum() + epsilon)
            )

            # Compute Weight of Evidence
            # Here, WoE is defined as log(%Events / %Non-Events)
            # With this convention, positive WoE indicates higher default/event risk
            d["WoE"] = np.log(
                d["% of Events"] / d["% of Non-Events"]
            )

            # Compute the IV contribution of each group
            d["IV"] = d["WoE"] * (
                d["% of Events"] - d["% of Non-Events"]
            )

            # Add the variable name to the detailed table
            d.insert(
                loc=0,
                column="Variable",
                value=ivars
            )

            # Print the global Information Value of the variable
            print("=" * 30 + "\n")
            print(
                "Information Value of variable "
                + ivars
                + " is "
                + str(round(d["IV"].sum(), 6))
            )

            # Store the global IV of the variable
            temp = pd.DataFrame(
                {
                    "Variable": [ivars],
                    "IV": [d["IV"].sum()]
                },
                columns=["Variable", "IV"]
            )
            newDF = pd.concat([newDF, temp], axis=0)
            woeDF = pd.concat([woeDF, d], axis=0)

            # Display the detailed WoE table if requested
            if show_woe:
                print(d)

        return newDF, woeDF

    import math

    import matplotlib.pyplot as plt


    def tx_rsq_par_var(df, categ_vars, date, target, cols=2, sharey=False):
        """
        Generate a grid of line charts showing the average event rate by category over time
        for a list of categorical variables.

        Parameters
        ----------
        df : pandas DataFrame
            Input dataset.
        categ_vars : list of str
            List of categorical variables to analyze.
        date : str
            Name of the date or time-period column.
        target : str
            Name of the binary target variable.
            The target should be coded as 1 for event/default and 0 otherwise.
        cols : int, default=2
            Number of columns in the subplot grid.
        sharey : bool, default=False
            Whether all subplots should share the same y-axis scale.

        Returns
        -------
        None
            The function displays the plots directly.
        """
        # Work on a copy to avoid modifying the original DataFrame
        df = df.copy()

        # Check whether all required columns are present in the DataFrame
        missing_cols = [col for col in [date] + categ_vars if col not in df.columns]
        if missing_cols:
            raise KeyError(
                f"The following columns are missing from the DataFrame: {missing_cols}"
            )

        # Remove rows with missing values in the date column or categorical variables
        df = df.dropna(subset=[date] + categ_vars)

        # Determine the number of variables and the required number of subplot rows
        num_vars = len(categ_vars)
        rows = math.ceil(num_vars / cols)

        # Create the subplot grid (squeeze=False always returns a 2D array of axes)
        fig, axes = plt.subplots(
            rows,
            cols,
            figsize=(cols * 6, rows * 4),
            sharex=False,
            sharey=sharey,
            squeeze=False
        )

        # Flatten the axes array to make iteration easier
        axes = axes.flatten()

        # Loop over each categorical variable and create one plot per variable
        for i, categ_var in enumerate(categ_vars):

            # Compute the average target value by date and category
            df_times_series = (
                df.groupby([date, categ_var], observed=True)[target]
                .mean()
                .reset_index()
            )

            # Reshape the data so that each category becomes one line in the plot
            df_pivot = df_times_series.pivot(
                index=date,
                columns=categ_var,
                values=target
            )

            # Select the axis corresponding to the current variable
            ax = axes[i]

            # Plot one line per category
            for category in df_pivot.columns:
                ax.plot(
                    df_pivot.index,
                    df_pivot[category],
                    label=str(category).strip()
                )

            # Set chart title and axis labels (the rate is a proportion between 0 and 1)
            ax.set_title(f"{categ_var.strip()}")
            ax.set_xlabel("Date")
            ax.set_ylabel("Default rate")

            # Adjust the legend depending on the number of categories
            if len(df_pivot.columns) > 10:
                ax.legend(
                    title="Categories",
                    fontsize="x-small",
                    loc="upper left",
                    ncol=2
                )
            else:
                ax.legend(
                    title="Categories",
                    fontsize="small",
                    loc="upper left"
                )

        # Remove unused subplot axes when the grid is larger than the number of variables
        for j in range(num_vars, len(axes)):
            fig.delaxes(axes[j])

        # Add a global title to the figure
        fig.suptitle(
            "Default Rate by Categorical Variable",
            fontsize=10,
            x=0.5,
            y=1.02,
            ha="center"
        )

        # Adjust layout to avoid overlapping elements
        plt.tight_layout()

        # Display the final figure
        plt.show()

    import math

    import matplotlib.pyplot as plt
    import pandas as pd
    import seaborn as sns


    def combined_barplot_lineplot(df, cat_vars, cible, cols=2):
        """
        Generate a grid of combined bar plots and line plots for a list of categorical variables.

        For each categorical variable:
        - the bar plot shows the relative frequency of each category;
        - the line plot shows the average target rate for each category.

        Parameters
        ----------
        df : pandas DataFrame
            Input dataset.
        cat_vars : list of str
            List of categorical variables to analyze.
        cible : str
            Name of the binary target variable.
            The target should be coded as 1 for event/default and 0 otherwise.
        cols : int, default=2
            Number of columns in the subplot grid.

        Returns
        -------
        None
            The function displays the plots directly.
        """
        # Work on a copy to avoid modifying the original DataFrame
        df = df.copy()

        # Count the number of categorical variables to plot
        num_vars = len(cat_vars)

        # Compute the number of rows needed for the subplot grid
        rows = math.ceil(num_vars / cols)

        # Create the subplot grid (squeeze=False always returns a 2D array of axes)
        fig, axes = plt.subplots(
            rows,
            cols,
            figsize=(cols * 6, rows * 4),
            squeeze=False
        )

        # Flatten the axes array to make iteration easier
        axes = axes.flatten()

        # Loop over each categorical variable
        for i, cat_col in enumerate(cat_vars):

            # Select the current subplot axis for the bar plot
            ax1 = axes[i]

            # Convert categorical dtype variables to string if needed
            # This avoids plotting issues with categorical intervals or ordered categories
            if isinstance(df[cat_col].dtype, pd.CategoricalDtype):
                df[cat_col] = df[cat_col].astype(str)

            # Compute the average target rate by category
            tx_rsq = (
                df.groupby([cat_col])[cible]
                .mean()
                .reset_index()
            )

            # Compute the relative frequency of each category
            effectifs = (
                df[cat_col]
                .value_counts(normalize=True)
                .reset_index()
            )

            # Rename columns for clarity
            effectifs.columns = [cat_col, "count"]

            # Merge category frequencies with target rates
            merged_data = (
                effectifs
                .merge(tx_rsq, on=cat_col)
                .sort_values(by=cible, ascending=True)
            )

            # Create a secondary y-axis for the line plot
            ax2 = ax1.twinx()

            # Plot category frequencies as bars
            sns.barplot(
                data=merged_data,
                x=cat_col,
                y="count",
                color="grey",
                ax=ax1
            )

            # Plot the average target rate as a line
            sns.lineplot(
                data=merged_data,
                x=cat_col,
                y=cible,
                color="red",
                marker="o",
                ax=ax2
            )

            # Set the subplot title and axis labels (the rate is a proportion between 0 and 1)
            ax1.set_title(f"{cat_col}")
            ax1.set_xlabel("")
            ax1.set_ylabel("Category frequency")
            ax2.set_ylabel("Risk rate")

            # Rotate x-axis labels for better readability
            ax1.tick_params(axis="x", rotation=45)

        # Remove unused subplot axes if the grid is larger than the number of variables
        for j in range(num_vars, len(axes)):
            fig.delaxes(axes[j])

        # Add a global title for the full figure
        fig.suptitle(
            "Combined Bar Plots and Line Plots for Categorical Variables",
            fontsize=10,
            x=0.0,
            y=1.02,
            ha="left"
        )

        # Adjust layout to reduce overlapping elements
        plt.tight_layout()

        # Display the final figure
        plt.show()

4.1 Example with person_income

Let us apply this procedure to the variable person_income.

The first step consists of performing an initial discretization using WoE. We decide to divide the variable into three classes and calculate the WoE of each class.

The results show that WoE is monotonic.

Borrowers with lower income, especially those with income below approximately 45,000, have a positive WoE. With our convention, this means that they have a higher concentration of defaults.

Borrowers with higher income, especially those with income above approximately 71,000, have the lowest WoE value. This indicates a lower concentration of defaults.

This result is coherent with credit risk intuition: higher income is generally associated with higher repayment capacity and therefore lower default risk.

We can then apply this segmentation to create a discretized variable called person_income_dis.
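A minimal sketch of this step is shown below. The cut-offs are the approximate values quoted above (45,000 and 71,000), not exact thresholds. The sample incomes, the class labels, and the choice of right-closed intervals are assumptions made for the example.

```python
import numpy as np
import pandas as pd

# Hypothetical incomes; in the article the variable lives in the training set
sample = pd.DataFrame({"person_income": [28_000, 45_000, 60_000, 71_000, 120_000]})

# Approximate cut-offs quoted in the text, with open-ended outer classes
income_edges = [-np.inf, 45_000, 71_000, np.inf]

sample["person_income_dis"] = pd.cut(
    sample["person_income"],
    bins=income_edges,
    labels=["<=45k", "45k-71k", ">71k"],
)
print(sample)
```

Using open-ended outer classes means that incomes outside the range seen during development still receive a class.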

A binning is useful only if it remains stable.

A variable may show a good risk pattern in the training sample but become unstable over time.

This is why we also analyze the evolution of the default rate by category over time, using the tx_rsq_par_var function defined above.

It is also useful to visualize, for each category:

- the population share;

- the default rate.

This can be done using a combined bar plot and line plot.

This chart is useful because it gives two pieces of information at the same time.

The bar plot tells us whether the category contains enough observations.

The line plot tells us whether the category has a coherent default rate.

A good final binning should have both a sufficient population size and a meaningful risk pattern.

The same cut-off points must then be applied to the test and out-of-time datasets.

This point is important.

The binning must be defined on the training sample and then applied unchanged to validation samples. Otherwise, we introduce data leakage and make the validation less reliable.
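The principle can be sketched as follows. The two series are made-up stand-ins for the training and out-of-time samples: the cut-off points are learned on the training sample with `pd.qcut(..., retbins=True)` and then reused as they are with `pd.cut`.

```python
import numpy as np
import pandas as pd

# Hypothetical samples standing in for the training and out-of-time sets
train = pd.Series([10, 20, 30, 40, 50, 60, 70, 80, 90], name="x")
oot = pd.Series([5, 45, 65, 95, 200], name="x")

# Learn the cut-off points on the training sample only
_, edges = pd.qcut(train, q=3, retbins=True)

# Open the outer edges so unseen values still fall into a class
edges[0], edges[-1] = -np.inf, np.inf

# Apply the same edges, unchanged, to both samples
train_bin = pd.cut(train, bins=edges)
oot_bin = pd.cut(oot, bins=edges)

oot_counts = oot_bin.value_counts(sort=False).tolist()
print(edges, oot_counts)
```

Recomputing quantiles on the out-of-time sample would instead produce different intervals, and the classes would no longer be comparable between samples.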

Conclusion

In this article, we studied why categorization is a key step in credit scoring model development.

Categorization applies to both categorical and continuous variables.

For categorical variables, it helps reduce the number of modalities and makes the model easier to estimate and interpret.

For continuous variables, it helps capture non-linear risk patterns, reduce the impact of outliers, handle missing values, improve stability, and prepare variables for Weight of Evidence transformation.

We also discussed several categorization methods, including equal-interval binning, equal-frequency binning, Chi-square-based grouping, and Weight of Evidence-based grouping.

In practice, categorization should not be treated as a mechanical preprocessing step. A good categorization must satisfy statistical, business, and stability requirements.

It should create classes that are sufficiently populated, clearly ordered in terms of risk, stable over time, and easy to explain.

This is especially important when the final model is a logistic regression scorecard. In that context, WoE-based categorization helps transform raw variables into stable risk classes that are naturally aligned with the log-odds structure of the model.

The main takeaway is this:

A credit scoring model is only as reliable as the variables that enter it.

If variables are noisy, unstable, poorly grouped, or difficult to interpret, even a good algorithm may produce a weak model.

But when variables are carefully categorized, the model becomes more robust, more interpretable, and easier to monitor in production.

What about you? In what situations do you categorize variables, for what reasons, and using which methods?