to transform a small text-only language model and gift it the power of vision. This article summarizes what I learned and takes a deeper look at the network architectures behind modern Vision Language Models.

The code is open-source; you can find the GitHub link at the end of the article. There is also a 30-minute companion YouTube video that explains the whole article in a visually rich format.

Also, unless otherwise mentioned, all images in this article are produced by the author.

Wait, are you really going to be “training from scratch”?

Yes… I mean no… it’s a bit nuanced.

Research labs in 2026 do not train multimodal models from “scratch” anymore. It is simply too expensive to teach a model vision and (textual) language at the same time! It requires more data, compute, time, and money. Also, it often leads to poorer results.

Instead, labs take existing pretrained text-only language models and finetune them to add “vision capabilities”. In theory (and practice), this is far more compute-efficient.

Let’s talk about Vision Language Models!

The standard architecture

Although it’s less data-intensive, finetuning a text-only LM to suddenly start seeing images opens its own can of worms:

- how do we embed the image, i.e. convert it into numerical representations that a neural network can understand?

- how do we tune the image embeddings to be compatible with text?

- how do we adjust the weights of the text model so that it retains its previous world knowledge, but also generates text from image embeddings?

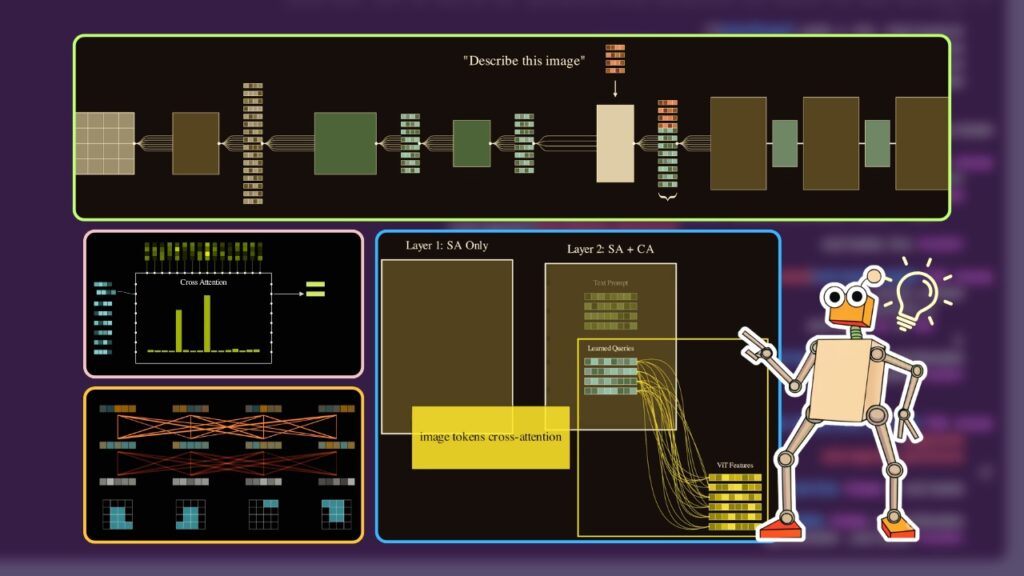

The image embeddings coming out of the ViT and Q-Former pass through an MLP layer, followed by a series of decoder layers with trainable LoRA adapters.

The architecture has three modules:

- The Image Backbone: A model that converts raw images into embeddings.

- The Adapter Layer: A model that converts the image embeddings into “text-compatible” embeddings. This is the main challenge: which architecture to use, which loss functions, etc.

- The Language Layer: The language model we will train to take the adapted embeddings as input and generate text from them.

Let’s discuss them one by one.

1. The Image Backbone

The goal of your image backbone is simple:

Input: A raw 2D pixel map/image.

Output: A sequence of vector embeddings representing the image

In general, we just use an off-the-shelf image model that has been pretrained on a massive corpus of images, generally on self-supervised tasks.

You can use a Convolutional Neural Network (like a ResNet) as the image backbone. But modern state-of-the-art VLMs have almost entirely shifted to Vision Transformers (ViTs) because they scale better with data and are more flexible for multimodal fusion.

Vision Transformers take an image as input, extract patches from it, and then pass them through bidirectional self-attention layers. This contextualizes each patch embedding with all the others to form a contextual sequence of image embeddings.

Do I train the image backbone or keep it frozen?

In most VLM research, there is a clear trend toward keeping backbones static (frozen) to save costs. Also, vision-language training generally needs paired image-text datasets. Since these datasets are usually much smaller than the ViT’s pretraining dataset, finetuning the backbone often leads to overfitting and degraded performance.

By keeping these weights frozen, we are basically transferring the ownership of vision-language learning to later parts of the network (i.e. the adapter layer and the text backbone).

In my experiments, I used the ViT-Base model. This model takes the image input, splits it into 16×16-pixel patches, and applies self-attention on them to generate an embedding sequence of 197 vectors. Each vector is 768 dimensions long (the embedding size of the ViT).
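The number 197 falls out of simple arithmetic. Here is a minimal sketch, assuming the usual 224×224 input resolution and one prepended [CLS] token (neither is stated above, so treat both as assumptions). The function name `vit_sequence_shape` is just an illustrative helper.

```python
def vit_sequence_shape(image_size=224, patch_size=16, embed_dim=768, cls_token=True):
    """Return (sequence_length, embed_dim) for a ViT with square images and patches."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size      # 224 // 16 = 14
    num_patches = patches_per_side ** 2              # 14 * 14 = 196
    seq_len = num_patches + (1 if cls_token else 0)  # +1 for the assumed [CLS] token
    return seq_len, embed_dim


print(vit_sequence_shape())  # (197, 768)
```

With a different input resolution or patch size the sequence length changes, which is one reason a fixed-length adapter output is convenient downstream.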

2. The Adapter Layer

This is where we are going to spend the majority of our time. We have converted images into embeddings already, but those embeddings are completely text-unaware.

Vision Transformers are pretrained purely on image pixels, not on their captions or any local textual features. The role of the adapter is to ground the pixel-based image embeddings into an (often shorter) sequence of text-based image embeddings.

There are many ways to do this, like using CLIP models, but we are going to look at one of the more popular approaches: the Query Former, or Q-Former.

Q-Former

Alright — so what is a Q-Former? A Q-Former or the Query-Former was introduced in the BLIP-2 paper.

From the BLIP-2 Paper

How do I train a Q-Former?

Standard Q-Formers can be trained using any multimodal image-text pair dataset. For example, you can use the Conceptual Captions dataset, which is a massive corpus of images and their corresponding captions. In my project, I took just 50,000 pairs to train the Q-Former.

You can train a Q-Former from scratch, but the BLIP-2 recommendation is to start from a pretrained BERT model. So that’s what we will do.

At a high level, here is our basic gameplan:

- Train a multi-modal joint embedding space. A space where text and images “know” each other.

- Basically, we will input pairs of image and captions — and embed both of them in the same joint space.

- Images and their matching captions will be mapped close to each other in this new embedding space, while images and incompatible captions will be mapped far apart.

Layer 1: Self Attention

Layer 2: Self-Attention & Cross-Attention with the VIT Features

Setting up cross-attention layers

There’s a problem — BERT models are purely text models. They have no idea what an image is.

So, our objective is to first introduce new cross-attention layers to marry the vision embeddings coming out of the VIT and the text embeddings from BERT. Let’s break down step-by-step how we convert BERT into a Q-Former:

- Sample an image and text pair from the dataset

- Pass the image through the frozen VIT model to convert the image into image embeddings, shaped [197, 768]

- Initialize “learnable query embeddings”. These are (say 32) vector embeddings that we will use to convert the image embedding sequence into a text-grounded token embedding sequence. Notice that 32 is much lower than the original VIT embedding sequence length (197).

- We input the text caption embeddings and the query embeddings into the first BERT layer. The layer applies self-attention on these inputs.

For now, let’s assume that the query tokens only attend among themselves and the text tokens among themselves (i.e. the query tokens and the text tokens do not see each other).

- In the 2nd layer of the BERT, something INTERESTING happens. The two sets of embeddings go through another self-attention layer like before. But this time, we also use a cross-attention layer to contextualize the query embeddings with the ViT image embeddings we calculated earlier.

Of course, normal BERT does not have any cross-attention layers, so we introduce these multi-headed cross-attention layers ourselves.

- Just like this, we alternate between a pure self-attention layer (where queries and text independently self-attend among themselves) followed by a cross-attention layer (where the query embeddings attend to the frozen VIT embeddings).

- In the final layer, we choose a joint embedding training loss, like ITC (Image-Text Contrastive Loss), ITM (Image-Text Matching Loss), or ITG (Image-Text Generation Loss). More on this later.
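To make the alternating pattern concrete, here is a small sketch of which layers get a cross-attention block. It assumes a 12-layer BERT-base with cross-attention inserted in every second layer, matching the "layer 1 is self-attention only, layer 2 adds cross-attention" description above. The layer count and the helper name `qformer_layer_plan` are my assumptions for illustration.

```python
def qformer_layer_plan(num_layers=12, cross_attention_every=2):
    """Describe each layer: all have self-attention, every n-th adds cross-attention."""
    plan = []
    for layer in range(1, num_layers + 1):
        plan.append({
            "layer": layer,
            "self_attention": True,  # queries and text attend among themselves
            "cross_attention": layer % cross_attention_every == 0,  # queries -> ViT tokens
        })
    return plan


for entry in qformer_layer_plan():
    kind = "self + cross (queries read the ViT tokens)" if entry["cross_attention"] else "self only"
    print(f"layer {entry['layer']:2d}: {kind}")
```

The shapes never change along the way: the 32 query embeddings go in and 32 embeddings come out, whether or not a given layer consulted the 197 ViT embeddings.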

What does cross-attention do?

It contextualizes the image content with the query embeddings. You can imagine each query is trying to match a specific embedding pattern against the 197 VIT embeddings.

For example, if a query has a high match with a single image vector, it will capture that feature very prominently. If the query matches a combination of vectors, the output will be a weighted average of those embeddings, and so on.
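Here is a toy illustration of that behaviour in pure Python. It is a deliberately stripped-down sketch: a single attention head, no learned query/key/value projections, and hand-picked 4-dimensional vectors standing in for the ViT embeddings. All names and numbers here are made up for the demo.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(query, image_tokens):
    """One query attends over all image tokens (scaled dot-product, no projections)."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, tok)) / scale for tok in image_tokens]
    weights = softmax(scores)
    dim = len(image_tokens[0])
    # The output is the attention-weighted average of the image tokens.
    output = [sum(w * tok[d] for w, tok in zip(weights, image_tokens)) for d in range(dim)]
    return weights, output


image_tokens = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]
sharp_query = [20.0, 0.0, 0.0, 0.0]     # matches only the first image token
blended_query = [20.0, 20.0, 0.0, 0.0]  # matches the first two tokens equally

sharp_weights, sharp_out = cross_attend(sharp_query, image_tokens)
blend_weights, blend_out = cross_attend(blended_query, image_tokens)

print([round(w, 3) for w in sharp_weights])  # [1.0, 0.0, 0.0]
print([round(w, 3) for w in blend_weights])  # [0.5, 0.5, 0.0]
```

The first query effectively copies one image token, while the second returns the midpoint of two. In the real model the queries and the projection weights are learned, so which patterns get picked up is decided by training rather than by hand.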

Remember we trained 32 of these query embeddings, so you are allowing the Q-Former to learn multiple different co-activations within the image embeddings. Due to the nature of training, these coactivations are encouraged to maximize alignment between image and text.

As we train the Q-Former, both the initial query embeddings and the cross-attention weights will be optimized so we can extract relevant features from the VIT image tokens.

The query former embeddings are not trying to capture every detail of these 197 embeddings — instead they are trying to learn how to combine them into a compact 32 token sequence.

Note that after the Q-Former is trained, we won’t actually use the text part of the Q-Former for anything. We will simply pass the query embeddings through the Q-Former and alternately run self-attention and cross-attention only on them.

Loss functions for training Q-Formers

There is SO MUCH COOL SHIT you can do just by configuring the attention mask in different ways.


How the Q-Former model is trained is actually closely related to how we attend between query and text tokens throughout the layers.

- Image-Text-Contrastive Loss (our setup)

For this task, we use a unimodal self-attention mask: query tokens attend among each other, and text tokens attend among each other. The loss function can be any standard CLIP-like contrastive loss. We align the image and text in the same embedding space: we take the output of the queries and the output of the text encoder and compute their similarity. If an image and a caption belong together, we want their vectors to be as close as possible.

This forces the queries to extract a “global” visual representation that matches the general theme of the text without actually looking at the words yet.

- Image-Text Matching Loss (ITM)

This uses a bi-directional self-attention mask. Here, every query token is allowed to see every text token, and every text token can see every query! For the loss function, we use a binary classification task where the model has to predict: “Is this image and this text a match, yes or no?” We train it with binary cross-entropy loss.

Because the modalities are fully mixed, the model can do fine-grained comparisons. The queries can look at specific objects in the image (via the cross-attention) and verify if they correspond to specific words in the text. This is much more detailed than the contrastive loss and ensures the 32 tokens are capturing localized details.

- Image-Text Generation Loss (ITG)

Finally, we have the generative task. For this, we use a multimodal causal mask. The queries can still see each other, but the text tokens are now treated like a sequence. Each text token can see all 32 query tokens, which act as a visual prefix, but it can only see the text tokens that came before it. For the loss function, we just train the model to predict the next token in the caption. By forcing the model to literally “write” the description based on the queries, we push those 32 tokens to contain the visual information a language model needs to understand the scene.
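The three masks are easy to write down explicitly. Below is a minimal sketch that assumes the sequence is laid out as all query tokens followed by all text tokens, with `mask[i][j] = True` meaning "position i may attend to position j". The function name and the mode strings are illustrative, not taken from the repo. For the generative mask I follow BLIP-2 in not letting queries look at text, and I let each text token see itself, as in a standard causal mask.

```python
def build_mask(num_queries, num_text, mode):
    """Self-attention mask over [queries..., text...] for the three Q-Former objectives."""
    n = num_queries + num_text
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            i_is_query = i < num_queries
            j_is_query = j < num_queries
            if mode == "itc":    # unimodal: queries <-> queries, text <-> text
                allowed = i_is_query == j_is_query
            elif mode == "itm":  # bidirectional: everything sees everything
                allowed = True
            elif mode == "itg":  # multimodal causal
                if i_is_query:
                    allowed = j_is_query            # queries never see text
                else:
                    allowed = j_is_query or j <= i  # text sees queries + earlier text
            else:
                raise ValueError(f"unknown mode: {mode}")
            mask[i][j] = allowed
    return mask


# Tiny example: 2 query tokens followed by 3 text tokens.
for mode in ("itc", "itm", "itg"):
    print(mode)
    for row in build_mask(2, 3, mode):
        print("  " + " ".join("1" if allowed else "0" for allowed in row))
```

Switching objectives is then only a matter of swapping the mask (and the loss head); the Q-Former weights stay the same.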

For my project, I just used the simplest one, ITC. For a small dataset like the one I was using, this was the easiest way! BLIP-2 recommends using a mixture of all these training methods. The GitHub repo shared at the end of the article provides the recipe to use any of the above attention schemes.
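For reference, a CLIP-style contrastive loss can be sketched in a few lines. This version assumes you already have a square similarity matrix for a batch, where `similarity[i][j]` scores image i against caption j and the matching pairs sit on the diagonal. How the 32 query outputs are pooled into a single score per pair is left out, and the temperature of 0.07 is just an assumed default, not a value from my training runs.

```python
import math


def cross_entropy_row(logits, target):
    """Cross-entropy of one row of logits against the index of the correct class."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def itc_loss(similarity, temperature=0.07):
    """Symmetric contrastive loss: image-to-text and text-to-image, averaged."""
    n = len(similarity)
    logits = [[s / temperature for s in row] for row in similarity]
    # Each image should pick its own caption out of the batch...
    image_to_text = sum(cross_entropy_row(logits[i], i) for i in range(n)) / n
    # ...and each caption should pick its own image.
    columns = [[logits[i][j] for i in range(n)] for j in range(n)]
    text_to_image = sum(cross_entropy_row(columns[j], j) for j in range(n)) / n
    return (image_to_text + text_to_image) / 2


aligned = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.1], [0.0, 0.2, 0.9]]
confused = [[0.1, 0.9, 0.0], [0.8, 0.1, 0.1], [0.0, 0.2, 0.9]]
print(itc_loss(aligned) < itc_loss(confused))  # True
```

The loss is low when the diagonal dominates each row and column, which is exactly the "matching pairs close, mismatched pairs far" behaviour we want from the joint embedding space.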

A trained Q-Former model learns to match similar text and image pairs

In the next section, we will do the final step — training the VLM!

3. The Language Layer

Now comes the final step. We will use the ViT and the Q-Former to turn a language model into a vision-language model. I picked one of the smallest instruction-tuned language models, SmolLM2-135M. Thankfully, this part is not as complicated as the Q-Former training.

We have the image embeddings (coming from the VIT and the Q-Former), and we have the text tokens (coming from the SmolLM tokenizer). Let’s see some details.

From the BLIP-2 Paper

- We sample an image and a caption from our dataset

- We randomly pick from a list of simple system prompts, similar to: “You are a helpful assistant. Respond truthfully to the user.”

We also pick the user query from a list of prompts, for example: “What do you see in this image?”

We tokenize the output captions sampled from the dataset as well.

These 3 things form the text tokens. We tokenize them all using the SmolLM2 tokenizer, but we are not going to insert it into the LLM just yet — we must process the image first.

- We pass the image through the frozen VIT, then through the Q-Former (again, note that the text captions are not passed into the Q-Former, only the image pipeline is executed)

- We introduce a small MLP layer that converts the Q-Former output into new embeddings that are of the same shape as the LLM’s expected embedding size. As we train, this MLP layer will map the Q-Former embedding into the LLM embedding space.

- Now we have the text token sequence and the new image embeddings (ViT -> Q-Former -> MLP). We pass the text tokens through the LLM’s native embedding layer, then sandwich the text and image embeddings in the following sequence:

…and forward pass it through the rest of the LLM.

- Why that specific sequence? Since autoregressive LLMs use causal masking, we will essentially be training models to generate the output (caption) sequence given the entire prefix (system prompt, user prompt, and the image embeddings).

- We add LoRA (Low-Rank Adaptation) adapters instead of finetuning all of the LLM’s weights. By wrapping our LLM with LoRA, we freeze the original millions of parameters and only train tiny, low-rank matrices injected into the attention layers. This makes the model trainable on consumer hardware while keeping all that pre-existing intelligence intact.

- And that’s it! We pass these stitched embeddings and labels into the LLM. The model attends to the text instruction and the visual tokens simultaneously, and thanks to LoRA, it learns how to update its internal wiring to understand this new visual language. Only the Q-Former layers, the MLP layer, and the LoRA adapters are trained. Everything else is kept frozen.
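The stitching and the labels can be sketched without any deep learning library. The ordering used below (system prompt, image slots, user prompt, caption) is only an assumption for illustration; the point is that everything before the caption is prefix. Prefix positions get the label -100, which PyTorch's cross-entropy ignores by default, so the loss is computed on the caption tokens only. The helper name `stitch` and the token ids are hypothetical, and the usual one-position shift for next-token prediction is assumed to happen inside the loss.

```python
IGNORE_INDEX = -100  # label value that PyTorch's cross-entropy skips by default


def stitch(system_ids, user_ids, caption_ids, num_image_tokens=32):
    """Lay out one training example and its labels.

    Returns (layout, labels). Each layout entry is ("text", token_id) or
    ("image", slot); labels hold caption ids where the model should predict
    and IGNORE_INDEX everywhere else.
    """
    layout = [("text", t) for t in system_ids]
    layout += [("image", k) for k in range(num_image_tokens)]  # ViT -> Q-Former -> MLP
    layout += [("text", t) for t in user_ids]
    prefix_len = len(layout)
    layout += [("text", t) for t in caption_ids]
    labels = [IGNORE_INDEX] * prefix_len + list(caption_ids)
    return layout, labels


# Made-up token ids, with 4 image slots instead of 32 to keep the printout short.
layout, labels = stitch(system_ids=[1, 2], user_ids=[3], caption_ids=[7, 8, 9], num_image_tokens=4)
for position, (entry, label) in enumerate(zip(layout, labels)):
    print(position, entry, label)
```

In the real pipeline, each `("text", id)` entry would be replaced by the LLM's embedding for that token and each `("image", k)` entry by the k-th projected Q-Former vector, then the whole sequence goes into the LLM as input embeddings.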

Some results! You can find more results in the YouTube video mentioned at the end of the article!

After training this for just a few hours on a small subset of data, the trained VLM can now see images and generate text about them. Machine Learning is so beautiful when it works.

In summary

You can find the full GitHub repository here:

https://github.com/avbiswas/vlm

And watch the YouTube video here:

Let’s summarize all the modules in Vision Language pipelines.

- A vision backbone (like the VIT) that takes image input and converts it into embeddings

- An adapter layer (like the Q-Former) that grounds the image with text

- An LLM that we train to consolidate the text and image embeddings to learn the language of vision

My Patreon:

https://www.patreon.com/NeuralBreakdownwithAVB

My YouTube channel:

https://www.youtube.com/@avb_fj

Follow me on Twitter:

https://x.com/neural_avb

I am building Paper Breakdown, a place to study research papers

https://paperbreakdown.com

Read my articles:

https://towardsdatascience.com/author/neural-avb/