an article about overengineering a RAG system, adding fancy things like query optimization, detailed chunking with neighbors and keys, along with expanding the context.

The argument against this kind of work is that for some of these add-ons, you still end up paying 40–50% more in latency and cost.

So, I decided to test both pipelines: one with query optimization and neighbor expansion, and one without.

The first test I ran used simple corpus questions generated directly from the docs, and the results were lackluster. But then I continued testing it on messier questions and on random real-world questions, and it showed something different.

This is what we’ll talk about here: where features like neighbor expansion can do well, and where the cost may not be worth it.

We’ll go through the setup, the experiment design, three different evaluation runs with different datasets, how to understand the results, and the cost/benefit tradeoff.

Please note that this experiment uses reference-free metrics and LLM judges, which you always have to be cautious about. You can see the entire breakdown in this Excel document.

If you feel confused at any time, there are two articles, here and here, that came before this one, though this one should stand on its own.

The intro

People continue to add complexity to their RAG pipelines, and there is a reason for it. The overall design is flawed, so we keep patching on fixes to make something that is more robust.

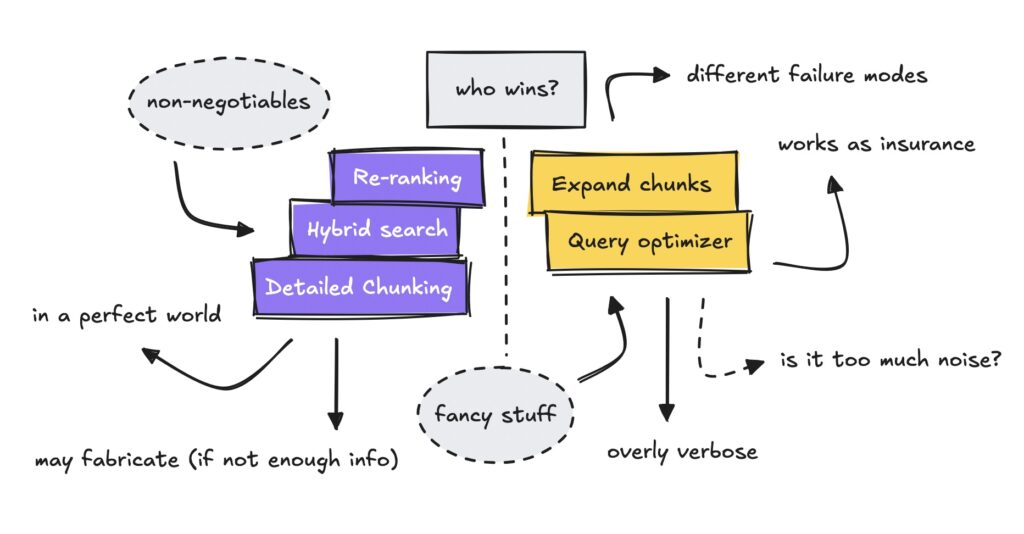

Most people have introduced hybrid search (BM25 plus semantic) along with re-rankers in their RAG setups. This has become standard practice. But there are more complex features you can add.
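
This article doesn’t say how the BM25 and semantic results get merged. A common choice is reciprocal rank fusion (RRF), so here is a minimal sketch of it. It assumes each retriever returns a best-first list of chunk IDs. The IDs below are made up, and the fused list would then go to the re-ranker.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse best-first lists of chunk IDs (e.g. BM25 and vector results) with RRF.

    Each chunk scores sum(1 / (k + rank)) over the lists it appears in;
    k=60 is the constant used in the original RRF paper.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by chunk ID so the order is deterministic.
    return sorted(scores, key=lambda cid: (-scores[cid], cid))

# Hypothetical results for one query
bm25_hits = ["c3", "c1", "c7"]
vector_hits = ["c1", "c4", "c3"]
print(reciprocal_rank_fusion([bm25_hits, vector_hits]))
```

RRF only looks at ranks. That means BM25 scores and cosine scores never have to be put on the same scale.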

The pipeline we’re testing here introduces two additional features, query optimization and neighbor expansion, and tests their efficiency.

We’re running LLM judges over different datasets. They score automated metrics like faithfulness, and they also run A/B tests on quality, so we can see how the metrics move for each pipeline.

The introduction will walk through the setup and the experiment design.

The setup

Let’s first run through the setup, briefly covering detailed chunking and neighbor expansion, and what I define as complex versus naive for the purpose of this article.

The pipeline I’ve run here uses very detailed chunking methods, which you’ll recognize if you’ve read my previous article.

This means parsing the PDFs correctly, respecting document structure, using smart merging logic, intelligent boundary detection, numeric fragment handling, and document-level context (i.e., applying headings to each chunk).

I decided not to budge on this part, though this is obviously the hardest part of building a retrieval pipeline.

When processing, it also splits the sections and then references the chunk neighbors in the metadata. This allows us to expand the content so the LLM can see where it comes from.
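
Here is a minimal sketch of how neighbor references stored in metadata can drive expansion. It assumes each chunk keeps the IDs of its previous and next chunks plus its section heading. The `Chunk` fields, `expand_with_neighbors`, and the rule of never crossing a section boundary are illustrative assumptions, not the pipeline’s actual code.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass
class Chunk:
    chunk_id: str
    section: str                   # heading carried as document-level context
    text: str
    prev_id: Optional[str] = None  # neighbor references stored in metadata
    next_id: Optional[str] = None

def expand_with_neighbors(seed_ids: List[str], store: Dict[str, Chunk], window: int = 1) -> List[Chunk]:
    """Return each seed chunk plus up to `window` neighbors on either side, in reading
    order, without crossing into another section and without duplicates."""
    seen, expanded = set(), []
    for seed_id in seed_ids:
        seed = store[seed_id]
        before, cur = [], seed
        for _ in range(window):  # walk backwards through previous chunks
            if cur.prev_id is None or store[cur.prev_id].section != seed.section:
                break
            cur = store[cur.prev_id]
            before.append(cur)
        after, cur = [], seed
        for _ in range(window):  # walk forwards through next chunks
            if cur.next_id is None or store[cur.next_id].section != seed.section:
                break
            cur = store[cur.next_id]
            after.append(cur)
        for chunk in before[::-1] + [seed] + after:
            if chunk.chunk_id not in seen:
                seen.add(chunk.chunk_id)
                expanded.append(chunk)
    return expanded

store = {
    "a": Chunk("a", "Method", "Step 1", None, "b"),
    "b": Chunk("b", "Method", "Step 2", "a", "c"),
    "c": Chunk("c", "Method", "Step 3", "b", "d"),
    "d": Chunk("d", "Results", "Table 2", "c", None),
}
print([ch.chunk_id for ch in expand_with_neighbors(["c"], store)])  # "d" is in another section
```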

For this test, we use the same chunks, but we remove query optimization and context expansion for the naive pipeline to see if the fancy add-ons are actually doing any good.

I should also mention that the use case here was scientific papers about RAG. This is a semi-difficult case, so the results may not carry over to easier use cases (but we’ll get to that later too).

You can see the papers this pipeline has ingested here, and read about the use case here.

To conclude: the setup uses the same chunking, the same reranker, and the same LLM. The only difference is optimizing the queries and expanding the chunks to neighbors.
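
One way to keep a comparison like this honest is to describe both pipelines with a single config and then check that only the two feature flags differ. Here is a small sketch. The field names and string values are placeholders, not the pipeline’s real settings.

```python
from dataclasses import dataclass, fields

@dataclass(frozen=True)
class PipelineConfig:
    # Shared by both pipelines (placeholder labels, not real settings)
    chunking: str = "structure-aware"
    reranker: str = "cohere-rerank"
    generator: str = "shared-llm"
    # The only two switches that differ between the pipelines
    query_optimization: bool = False
    neighbor_expansion: bool = False

NAIVE = PipelineConfig()
COMPLEX = PipelineConfig(query_optimization=True, neighbor_expansion=True)

def differing_fields(a: PipelineConfig, b: PipelineConfig) -> list:
    """List the config fields whose values differ, i.e. what the experiment actually varies."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]

print(differing_fields(NAIVE, COMPLEX))
```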

The experiment design

We have three different datasets that were run through several automated metrics, then through a head-to-head judge, along with examining the outputs to validate and understand the results.

I started by creating a dataset with 256 questions generated from the corpus. This means questions such as “What is the main purpose of the Step-Audio 2 model?”

This can be a good way to validate that your pipeline works, but if it’s too clean it can give you a false sense of security.

Note that I did not specify how the questions should be generated. I did not ask the LLM to generate questions that an entire section could answer, or ones that only a single chunk could answer.

The second dataset was also generated from the corpus, but I intentionally asked the LLM to generate messy questions like “what are the plz three types of reward functions used in eviomni?”

The third dataset, and the most important one, was the random dataset.

I asked an AI agent to research different RAG questions people had online, such as “best rag eval benchmarks and why?” and “when does using titles/abstracts beat full text retrieval.”

Remember, the pipeline had only ingested around 150 scientific papers from September/October that mentioned RAG. So we don’t know if the corpus even has the answers.

To run the first evals, I used automated metrics such as faithfulness (does the answer stay grounded in the context) and answer relevancy (does it answer the query) from RAGAS. I also added a few metrics from DeepEval to look at context relevance, structure, and hallucinations.
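
For intuition, here is roughly what the two RAGAS metrics measure:

- **Faithfulness:** the share of claims in the answer that can be inferred from the retrieved context.
- **Answer relevancy:** the mean cosine similarity between the original question and questions generated back from the answer.

Below is a simplified sketch of just that scoring arithmetic. It assumes the LLM and embedding steps have already run. This is not the RAGAS API, and RAGAS versions may differ in details.

```python
import math
from typing import List, Sequence

def faithfulness(claim_supported: List[bool]) -> float:
    """Fraction of the answer's claims that the retrieved context supports.
    Assumes an LLM step already split the answer into claims and checked each one."""
    if not claim_supported:
        return 0.0  # convention chosen here for answers with no extractable claims
    return sum(claim_supported) / len(claim_supported)

def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def answer_relevancy(question_vec: Sequence[float], generated_vecs: List[Sequence[float]]) -> float:
    """Mean cosine similarity between the original question's embedding and the
    embeddings of questions an LLM generated back from the answer."""
    if not generated_vecs:
        return 0.0
    return sum(cosine(question_vec, g) for g in generated_vecs) / len(generated_vecs)

print(faithfulness([True, True, False, True, True, True]))      # 5 of 6 claims supported
print(answer_relevancy([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]))  # one on-topic, one off-topic
```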

If you want to get an overview of these metrics, see my previous article.

We ran both pipelines through the different datasets, and then through all of these metrics.

Then I added another head-to-head judge to A/B test the quality of each pipeline for each dataset. This judge did not see the context, only the question, the answer, and the automated metrics.

Why not include the context in the evaluation? Because you can’t overload these judges with too many variables. This is also why evals can feel difficult. You need to understand the one key metric you want to measure for each one.
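
The exact judge prompt isn’t shown here. As a hedged sketch, this is one way to build a pairwise prompt from only the question, the two answers, and their metric scores. A few notes on what is assumed:

- Shuffling which pipeline appears as "A" is an addition of this sketch; it guards against position bias.
- `build_pairwise_prompt` and `decode_verdict` are hypothetical helpers.
- The actual LLM call is left out.

```python
import json
import random
from typing import Dict, Tuple

def build_pairwise_prompt(question: str, answers: Dict[str, str], metrics: Dict[str, dict],
                          rng: random.Random) -> Tuple[str, Dict[str, str]]:
    """Build an A/B judge prompt from the question, two answers and their metric
    scores only; the retrieved context is deliberately left out."""
    names = list(answers)
    rng.shuffle(names)              # randomize which pipeline is "A" to limit position bias
    slots = dict(zip("AB", names))  # slot label -> pipeline name, used to decode the verdict
    parts = [f"Question: {question}"]
    for label, name in slots.items():
        parts.append(f"Answer {label}: {answers[name]}")
        parts.append(f"Metrics {label}: {json.dumps(metrics[name], sort_keys=True)}")
    parts.append('Which answer is better? Reply in JSON with keys "winner" '
                 '("A", "B" or "tie") and "reason".')
    return "\n\n".join(parts), slots

def decode_verdict(reply: str, slots: Dict[str, str]) -> str:
    """Map the judge's JSON reply back to a pipeline name (or "tie")."""
    winner = json.loads(reply)["winner"]
    return "tie" if winner == "tie" else slots[winner]

# Placeholder answers and scores; the LLM call that would consume `prompt` is omitted.
prompt, slots = build_pairwise_prompt(
    "best rag eval benchmarks and why?",
    {"naive": "Short answer.", "complex": "Longer, more detailed answer."},
    {"naive": {"faithfulness": 0.9}, "complex": {"faithfulness": 0.8}},
    random.Random(0),
)
print(decode_verdict('{"winner": "A", "reason": "more complete"}', slots))
```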

I should note that this can be an unreliable way to test systems. Suppose the hallucination score is mostly vibes, and we remove a data point because of it before sending it to the next judge that tests quality. We can then end up with highly unreliable data once we start aggregating.

For the final part, we looked at semantic similarity between the answers and examined the ones with the largest differences, along with cases where one pipeline clearly won over the other.
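
Here is a minimal sketch of that comparison step. It assumes each answer has already been embedded with some embedding model; the toy vectors below stand in for real embeddings. It computes cosine similarity per question and sorts so the most divergent pairs come first for manual review.

```python
import math
from typing import List, Sequence, Tuple

def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; assumes neither vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))

def rank_by_divergence(ids: List[str], naive_vecs, complex_vecs) -> List[Tuple[str, float]]:
    """Pair up both pipelines' answer embeddings per question and sort from most
    different (lowest similarity) to most similar."""
    sims = [(qid, cosine(a, b)) for qid, a, b in zip(ids, naive_vecs, complex_vecs)]
    return sorted(sims, key=lambda pair: pair[1])

# Toy 2-D vectors standing in for real answer embeddings
ids = ["q1", "q2", "q3"]
naive_vecs = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
complex_vecs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for qid, sim in rank_by_divergence(ids, naive_vecs, complex_vecs):
    print(f"{qid}: {sim:.2f}")
```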

Let’s now turn to running the experiment.

Running the experiment

Since we have several different datasets, we need to go through the results of each. The first two datasets proved pretty lackluster, but they did show us something, so it’s worth covering them.

The random dataset showed by far the most interesting results. This will be the main focus, and I’ll dig into the results a bit to show where each pipeline failed and where it succeeded.

Remember, I’m trying to reflect reality here, which is often a lot messier than people want it to be.

Clean questions from the corpus

The clean corpus showed nearly identical results on all metrics. The judge seemed to prefer one pipeline over the other based on shallow preferences, but the run did expose the problem with relying on synthetic datasets.

The first run was on the corpus dataset: the clean questions generated from the docs the pipeline had ingested.

The results of the automated metrics were eerily similar.

Even context relevance was pretty much the same, ~0.95 for both. I had to double check it several times to confirm. As I’ve had great success with using context expansion, the results made me a bit uneasy.

In hindsight, it’s quite obvious: the questions are already well formatted for retrieval, and a single passage can often answer them.

I did wonder why context relevance didn’t drop for the expanded pipeline if one passage was good enough. The reason is that the extra contexts come from the same section as the seed chunks. That makes them semantically related, so RAGAS doesn’t consider them “irrelevant.”

The quality A/B test showed similar results. Both pipelines won for the same reasons: completeness, accuracy, clarity.

For the cases where naive won, the judge liked the answer’s conciseness, clarity, and focus. It penalized the complex pipeline for more peripheral details (edge cases, extra citations) that weren’t directly asked for.

When complex won, it liked the completeness/comprehensiveness of the answer over the naive one. This meant having specific numbers/metrics, step-by-step mechanisms, and “why” explanations, not just “what.”

Nevertheless, these results didn’t point to any failures. The differences came down to preference rather than quality; both did exceptionally well.

So what did we learn from this? In a perfect world, you don’t need any fancy RAG add-ons, and using a test set from the corpus is highly unreliable.

Messy questions from the corpus

Next up, we tested the second dataset. Since it had also been synthetically generated, the results were similar to the first one. But they started moving in another direction, which was interesting.

Remember that I introduced the messier questions generated from the corpus earlier. This dataset was generated the same way as the first one, but with messy phrasing (“can u explain like how plz…”).

The automated metrics were still very similar. However, context relevance started to drop for the complex pipeline, while its faithfulness started to rise slightly.

For the ones that failed the metrics, there were a few RAGAS false positives.

But there were also some failures for questions that had been formatted without specificity in the synthetic dataset, such as “how many posts tbh were used for dataset?” or “how many datasets did they test on?”

There were some questions that the query optimizer helped by removing noisy input. But I realized too late that the questions that had been generated were too directed at specific passages.

This meant that passing them in as-is worked well on the retrieval side. Questions with specific names in them (like “how does CLAUSE compare…”) matched documents fine, and the query optimizer just made things worse.

There were times when the query optimization failed completely because of how the questions had been phrased.

One example is the question “how does the btw pre-check phase in ac-rag work & why is it important?” Direct search found the AC-RAG paper immediately, since the question had been generated from it.

Running it through the A/B judge, the results favored the complex pipeline a lot more than they had on the first dataset.

The judge favored naive’s conciseness and brevity, while it favored the complex pipeline for completeness and comprehensiveness.

The reason we see the increase in wins for the complex pipeline is that the judge increasingly chose “complete but verbose” over “brief but potentially missing aspects” this time around.

This is when it struck me how useless answer quality can be as a metric. These LLM judges run on vibes sometimes.

In this run, I didn’t think the answers were different enough to warrant the difference in results. So remember, using a synthetic dataset like this can give you some intel, but it can be quite unreliable.

Random questions dataset

Lastly, we’ll go through the results from the random dataset, which were a lot more interesting. Metrics moved by larger margins here. Up to this point I had nothing to show, but this last dataset finally gave me something to dig into.

See the results from the random dataset below.

On random questions, we actually saw faithfulness and answer relevancy drop for the naive baseline. Naive still scored higher on context relevance and structure. However, we had already established in the previous article that the complex pipeline trails on these.

Noise will inevitably happen for the complex one, as we’re talking about 10x more chunks. Citation structure may be harder for the model when the context increases (or the judge has trouble judging the full context).

The A/B judge, though, gave the complex pipeline a much higher score than it had on the other datasets.

I ran it twice to check, and each time it favored the complex one over the naive one by a huge margin.

Why the change? This time there were a lot of questions that one passage couldn’t answer on its own.

Specifically, the complex pipeline did well on tradeoff and comparison questions. The judge’s reasoning was that it was “more complete/comprehensive” than the naive pipeline.

An example was the question “what are pros and cons of hybrid vs knowledge-graph RAG for vague queries?” Naive had many unsupported claims (missing GraphRAG, HybridRAG, EM/F1 metrics).

At this point, I needed to understand why it won and why naive lost. This would give me intel on where the fancy features were actually helping.

Looking into the results

Now, without digging into the results, you can’t fully know why something is winning. Since the random dataset showed the most interesting results, this is where I decided to put my focus.

First, the judge has real trouble evaluating the fuller context. This is why I never managed to build a judge that evaluates the two contexts against each other: it would prefer naive because it’s cognitively easier to judge. This is what made the evaluation so hard.

Nevertheless, we can pinpoint some of the real failures.

Even though the hallucination metric showed decent results, when digging into it, we could see that the naive pipeline fabricated information more often.

We could locate this by looking at the low faithfulness scores.

To give you an example, for the question “how do I test prompt injection risks if the bad text is inside retrieved PDFs?” the naive pipeline filled in gaps in the context to provide the answer.

Question: How do I test prompt injection risks if the bad text is inside retrieved PDFs?

Naive Response: Lists standard prompt-injection testing steps (PoisonedRAG, adaptive instructions, multihop poisoning) but synthesizes a generic evaluation recipe that is not fully supported by the specific retrieved sections and implicitly fills gaps with prior knowledge.

Complex Response: Derives testing steps directly from the retrieved experiment sections and threat models, including multihop-triggered attacks, single-text generation bias measurement, adaptive prompt attacks, and success-rate reporting, staying within what the cited papers actually describe.

Faithfulness: Naive: 0.0 | Complex: 0.83

What Happened: Unlike the naive answer, it is not inventing attacks, metrics, or techniques out of thin air. PoisonedRAG, trigger-based attacks, Hotflip-style perturbations, multihop attacks, ASR, DACC/FPR/FNR, PC1–PC3 all appear in the provided documents. However, the complex pipeline is subtly overstepping and has a case of scope inflation.

The expanded content added the missing evaluation metrics, which lifted the faithfulness score from 0.0 to 0.83.

That scope inflation could be an issue with the LLM generator. We would need to tune it so that each claim is explicitly tied to a paper and cross-paper synthesis is marked as such.

For the question “how do I benchmark prompts that force the model to list contradictions explicitly?”, naive again had very few of the relevant metrics in its context. As a result, it invents metrics, reverses findings, and collapses task boundaries.

Question: How do I benchmark prompts that force the model to list contradictions explicitly?

Naive Response: Mentions MAGIC by name and vaguely gestures at “conflicts” and “benchmarking,” but lacks concrete mechanics. No clear description of conflict generation, no separation of detection vs localization, no actual evaluation protocol. It fills gaps by inventing generic-sounding steps that are not grounded in the provided contexts.

Complex Response: Explicitly aligns with the MAGIC paper’s methodology. Describes KG-based conflict generation, single-hop vs multi-hop and 1 vs N conflicts, subgraph-level few-shot prompting, stepwise prompting (detect then localize), and the actual ID/LOC metrics used across multiple runs. Also correctly incorporates PC1–PC3 as auxiliary prompt components and explains their role, consistent with the cited sections.

Faithfulness: Naive: 0.35 | Complex: 0.73

What Happened: The complex pipeline has far more surface area, but most of it is anchored to actual sections of the MAGIC paper and related prompt-component work. In short: the naive answer hallucinates by necessity due to missing context, while the complex answer is verbose but materially supported. It over-synthesizes and over-prescribes, but mostly stays within the factual envelope. The higher faithfulness score is doing its job, even if it offends human patience.

This pattern shows up in multiple examples. The naive pipeline lacks enough information for some of these questions, so it falls back to prior knowledge and pattern completion. The complex pipeline, by contrast, over-synthesizes and projects a false coherence across sources.

Essentially, naive fails by making things up, and complex fails by saying true things too broadly.

This test was mainly about figuring out whether these fancy features help, but it also showed that we need to work on claim scoping: forcing the model to say “Paper A shows X; Paper B shows Y,” and so on.
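
One cheap guardrail for claim scoping is to have the generator tag every claim with its source, then flag any sentence that lacks a tag. Flagged sentences can be re-prompted or explicitly marked as cross-paper synthesis. The sketch below assumes a hypothetical `[Paper X]` citation format, and its sentence splitting is deliberately naive.

```python
import re
from typing import List

# Hypothetical citation format the generator is asked to use, e.g. "[Paper A]"
CITATION = re.compile(r"\[Paper [^\]]+\]")

def unscoped_sentences(answer: str) -> List[str]:
    """Return sentences that make a claim without tying it to a named paper.
    Splitting on sentence-ending punctuation is deliberately naive."""
    sentences = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [s for s in sentences if s and not CITATION.search(s)]

answer = (
    "Hybrid search improved recall [Paper A]. "
    "Knowledge graphs always win on vague queries. "
    "Both approaches add latency [Paper A][Paper B]."
)
print(unscoped_sentences(answer))
```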

You can dig into a few of these questions in the sheet here.

Before we move on to the cost/latency analysis, we can try to isolate the query optimizer as well.

How much did the query optimizer help?

Since I did not test each part of the pipeline separately, we had to rely on indirect signals to estimate whether the query optimizer was helping or hurting.

First, we looked at the seed chunk overlap between the complex and naive pipelines. It was 8.3% semantic overlap on the random dataset, versus more than 50% on the corpus dataset.

We already know that the full pipeline won on the random dataset, and now we could also see that it surfaced different documents because of the query optimizer.

Most documents were different, so I couldn’t isolate whether the quality degraded when there was little overlap.
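
The overlap above is reported as semantic. As a simpler, hedged stand-in, here is an ID-based Jaccard overlap between the seed chunks each pipeline retrieves per question, averaged over a dataset. This metric is assumed for illustration; it is not necessarily how the 8.3% and 50% figures were computed.

```python
from typing import Iterable, List, Tuple

def seed_overlap(naive_ids: Iterable[str], complex_ids: Iterable[str]) -> float:
    """Jaccard overlap between the seed chunk IDs two pipelines retrieved for one question."""
    a, b = set(naive_ids), set(complex_ids)
    if not a and not b:
        return 1.0  # both retrieved nothing: treat as identical
    return len(a & b) / len(a | b)

def mean_overlap(pairs: List[Tuple[List[str], List[str]]]) -> float:
    """Average per-question overlap across a dataset (assumes at least one question)."""
    return sum(seed_overlap(n, c) for n, c in pairs) / len(pairs)

pairs = [
    (["c1", "c2", "c3"], ["c1", "c4", "c5"]),  # 1 shared out of 5 distinct -> 0.2
    (["c7"], ["c7"]),                          # identical -> 1.0
]
print(mean_overlap(pairs))
```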

We also asked a judge to rate the optimized queries against the original ones on how well they preserved intent and whether they were diverse enough. The optimized queries won by an 8% margin.

A question that it excelled on was “why is everyone saying RAG doesn’t scale? how are people fixing that?”

Original: why is everyone saying RAG doesn’t scale? how are people fixing that?

Optimized (1): RAG scalability challenges (hybrid)

Optimized (2): Solutions for RAG scalability (hybrid)
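
The optimizer splits one messy question into several focused queries like the two above. Here is a hedged sketch of one way to use them. It assumes each rewritten query is run separately and the hits are merged by keeping each chunk’s best score before re-ranking. `fan_out`, the retriever signature, and the fake index are all illustrative, and max-score merging is only one option.

```python
from typing import Callable, Dict, List, Tuple

Retriever = Callable[[str], List[Tuple[str, float]]]  # query -> [(chunk_id, score)]

def fan_out(queries: List[str], retrieve: Retriever, top_k: int = 5) -> List[str]:
    """Run each rewritten query, keep each chunk's best score, return the top_k chunk IDs."""
    best: Dict[str, float] = {}
    for q in queries:
        for chunk_id, score in retrieve(q):
            if score > best.get(chunk_id, float("-inf")):
                best[chunk_id] = score
    return sorted(best, key=lambda cid: (-best[cid], cid))[:top_k]

# Fake index standing in for a real hybrid retriever
fake_index = {
    "RAG scalability challenges": [("c1", 0.82), ("c2", 0.75)],
    "Solutions for RAG scalability": [("c2", 0.91), ("c3", 0.64)],
}
print(fan_out(list(fake_index), lambda q: fake_index.get(q, []), top_k=3))
```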

By contrast, naive did well on its own with questions like “what retrieval settings help reduce needle-in-a-haystack” and others that were well formatted from the start.

We could reasonably deduce, though, that multi-questions and messier questions did better with the optimizer, as long as they were not domain specific. The optimizer was overkill for well formatted questions.

It also did badly when the question already matched the language of the underlying documents. This happened when someone asked something domain specific that the query optimizer didn’t understand.

You can look through a few examples in the Excel document.

This teaches us how important it is to make sure that the optimizer is tuned well to the questions that users will ask. If your users keep asking with domain specific jargon that the optimizer is ignoring or filtering out, it won’t perform well.

We can see here that it is rescuing some questions and failing others at the same time, so it would need work for this use case.

Let’s discuss it

I’ve overloaded you with a lot of data, so now it’s time to go through the cost/latency tradeoff, discuss what we can and cannot conclude, and the limitations of this experiment.

The cost/latency tradeoff

When looking at the cost and latency tradeoffs, the goal is to put concrete numbers on what these features cost and where those costs actually come from.

The cost of running this pipeline is very slim: about $0.00396 per run, not including caching. Removing the query optimizer and neighbor expansion cuts that by roughly 29%. Put the other way, the full pipeline costs about 41% more than the naive one.

The gap isn’t larger because input tokens, the part that grows with added context, are quite cheap.

What actually costs money in this pipeline is the re-ranker from Cohere, which both the naive and the full pipeline use.

For the naive pipeline, the re-ranker accounts for 70% of the entire cost. So it’s worth looking at every part of the pipeline to figure out where you might implement smaller models to cut costs.

Still, at around 100k questions, you would be paying roughly $400 for the full pipeline and $280 for the naive one.
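
To make the arithmetic explicit, here is a back-of-the-envelope sketch that uses only the figures in this section. It reads the 41% as the full pipeline’s markup over the naive one, which is what makes $400 versus $280 line up.

```python
FULL_COST_PER_QUERY = 0.00396   # USD per run for the full pipeline, no caching
FULL_MARKUP_OVER_NAIVE = 0.41   # full pipeline costs ~41% more than naive
RERANKER_SHARE_OF_NAIVE = 0.70  # re-ranker's share of the naive pipeline's cost
QUERIES = 100_000

naive_cost_per_query = FULL_COST_PER_QUERY / (1 + FULL_MARKUP_OVER_NAIVE)

full_total = FULL_COST_PER_QUERY * QUERIES
naive_total = naive_cost_per_query * QUERIES
reranker_total = naive_total * RERANKER_SHARE_OF_NAIVE
saving = 1 - naive_cost_per_query / FULL_COST_PER_QUERY  # cost cut from dropping both features

print(f"full:  ${full_total:,.2f}")
print(f"naive: ${naive_total:,.2f} (re-ranker ${reranker_total:,.2f})")
print(f"dropping both features saves {saving:.0%}")
```

This gives about $396 and $281, which round to the figures above. It also shows that a 41% markup corresponds to roughly a 29% saving when you remove the features.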

There is also the case for latency.

We measured a 49% increase in latency with the complex pipeline, about 6 seconds. The biggest single contributor was the query optimizer running on GPT-5-mini. It’s possible to use a faster, smaller model here.

Neighbor expansion added 2–3 seconds on average. Note that this doesn’t scale linearly with the amount of added context.

4.4x more input tokens only added 24% more time.
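
Breaking latency down like this requires timing each stage separately rather than only end to end. Here is a minimal sketch using `time.perf_counter`. The stage names are hypothetical, and the `time.sleep` calls stand in for the real optimizer, retrieval/re-rank, and generation calls.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator

@contextmanager
def timed(stage: str, timings: Dict[str, float]) -> Iterator[None]:
    """Add the wall-clock seconds spent inside the `with` block to timings[stage]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = timings.get(stage, 0.0) + time.perf_counter() - start

timings: Dict[str, float] = {}
with timed("query_optimizer", timings):
    time.sleep(0.03)   # stand-in for the query-rewrite LLM call
with timed("retrieve_rerank", timings):
    time.sleep(0.01)   # stand-in for hybrid search + re-ranking
with timed("generate", timings):
    time.sleep(0.02)   # stand-in for answer generation

total = sum(timings.values())
for stage, secs in sorted(timings.items(), key=lambda kv: -kv[1]):
    print(f"{stage:<16} {secs:.3f}s  {secs / total:.0%}")
```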

You can see the entire breakdown in the sheet here.

What this shows is that the cost difference is real but not extreme, while the latency difference is much more noticeable. Most of the money is still spent on re-ranking, not on adding context.

What we can conclude

Let’s focus on what worked, what failed, and why. We see that neighbor expansion may pull its weight when questions are diffuse, but each pipeline has its own failure modes.

The clearest finding from this experiment is that neighbor expansion earns its keep when retrieval gets hard and one chunk can’t answer the question.

We did a test in the previous article that looked at how much of the answer was generated from the expanded chunks, and on clean corpus questions, only 22% of the answer content came from expanded neighbors. We also saw that the A/B results here in this article showed a tie.

On messy questions, this rose to 30%, with a 10-point margin in the A/B test. On random questions, it hit 41%, with a 44-point margin in the A/B test. This pattern is undeniable.

What’s happening underneath is a difference in failure modes. When naive fails, it fails by omission. The LLM doesn’t have enough context, so it either gives an incomplete answer or fabricates information to fill the gaps.

We saw this clearly in the prompt injection example, where naive scored 0.0 on faithfulness because it overreached on the facts.

When complex fails, it fails by inflation. It has so much context that the LLM over-synthesizes and makes claims broader than any single source supports. But at least those claims are grounded in something.

The faithfulness scores reflect this asymmetry. Naive bottoms out at 0.0 or 0.35, while complex’s worst cases still land around 0.73.

The query optimizer is harder to call. It helped on 38% of questions, hurt on 27%, and made no difference on 35%. The wins were dramatic when they happened, rescuing questions like “why is everyone saying RAG doesn’t scale?” where direct search returned nothing.

But the losses were real too. For example, when the user’s phrasing already matched the corpus vocabulary, the optimizer introduced drift.

This probably suggests you’d want to tune the optimizer carefully to your users, or find a way to detect when reformulation is likely to help versus hurt.
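
As one possible starting point for that detection, here is a cheap and entirely hypothetical heuristic. It skips the optimizer when the query’s content words already overlap heavily with the corpus vocabulary, since those were the cases where rewriting introduced drift. The stopword list, `VOCAB`, and the 0.6 threshold are placeholder assumptions, not something tested in this experiment.

```python
import re
from typing import Set

# Placeholder stopword list; a real one would be larger and tuned to your users
STOPWORDS = {"how", "does", "the", "why", "are", "is", "what", "that", "and", "for", "with"}

def should_rewrite(query: str, corpus_vocab: Set[str], min_coverage: float = 0.6) -> bool:
    """Hypothetical gate: skip the optimizer when most of the query's content words
    already appear in the corpus vocabulary (e.g. it names a specific paper or method)."""
    tokens = re.findall(r"[a-z0-9\-]+", query.lower())
    content = [t for t in tokens if t not in STOPWORDS and len(t) > 2]
    if not content:
        return True  # nothing to match on, so let the optimizer try
    coverage = sum(t in corpus_vocab for t in content) / len(content)
    return coverage < min_coverage

# Toy vocabulary; in practice you might build it from the indexed chunks
VOCAB = {"pre-check", "phase", "ac-rag", "clause", "retrieval", "needle-in-a-haystack"}
print(should_rewrite("how does the pre-check phase in ac-rag work", VOCAB))
print(should_rewrite("why is everyone saying RAG doesn't scale? how are people fixing that?", VOCAB))
```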

On cost and latency, the numbers weren’t where I expected. Adding 10x more chunks only increased generation time by 24%, because the model reads input tokens much faster than it generates output tokens.

The real cost driver is the reranker, at 70% of the naive pipeline’s total.

The query optimizer contributes the most latency, at nearly 3 seconds per question. If you’re optimizing for speed, that’s where to look first, along with the re-ranker.

So more context doesn’t necessarily mean chaos, but it does mean you need to control the LLM to a larger degree. When the question doesn’t need the complexity, the naive pipeline will rule, but once questions become diffuse, the more complex pipeline may start to pull its weight.

Let’s talk limitations

I have to cover the main limitations of the experiment and what we should be careful about when interpreting the results.

The obvious one is that LLM judges run on vibes.

The metrics moved in the right direction across datasets, but I wouldn’t trust the absolute numbers enough to set production thresholds on them.

The messy corpus showed a 10-point margin for complex, but honestly, the answers weren’t different enough to warrant that gap. It could be noise.

I also didn’t isolate what happens when the docs genuinely can’t answer the question.

The random dataset included questions where we didn’t know if the papers had relevant content, but I treated all 66 the same. I did hunt through the examples. Still, it’s possible that some of the complex pipeline’s wins came from being better at admitting ignorance rather than better at finding information.

Finally, I tested two features together, query optimization and neighbor expansion, without fully isolating each one’s contribution. The seed overlap analysis gave us some signal on the optimizer, but a cleaner experiment would test them independently.

For now, we know the combination helps on hard questions and that the cost is 41% more per query. Whether that tradeoff makes sense depends entirely on what your users are actually asking.

Notes

I think we can conclude from this article that doing evals is hard, and it’s even harder to put an experiment like this on paper.

I wish I could give you a clean answer, but it’s complicated.

Personally, I’d say fabrication is worse than verbosity. Still, if your corpus is incredibly clean and each answer usually points to a specific chunk, neighbor expansion is overkill.

This then just tells you that these fancy features are a kind of insurance.

Nevertheless, I hope it was informative. Let me know what you thought by connecting with me on LinkedIn, Medium, or via my website.

❤

Remember you can see the full breakdown and numbers here.