Inspired by the ICLR 2026 blogpost/article, The 99% Success Paradox: When Near-Perfect Retrieval Equals Random Selection

As an Edinburgh-trained PhD in Information Retrieval from Victor Lavrenko’s Multimedia Information Retrieval Lab, where I trained in the late 2000s, I have long viewed retrieval through the lens of traditional IR thinking:

- Did we retrieve at least one relevant chunk?

- Did recall go up?

- Did the ranker improve?

- Did downstream answer quality look acceptable on a benchmark?

Those are still useful questions. But after reading the recent work on Bits over Random (BoR), I think they are incomplete for the agentic systems many of us are now actually building.

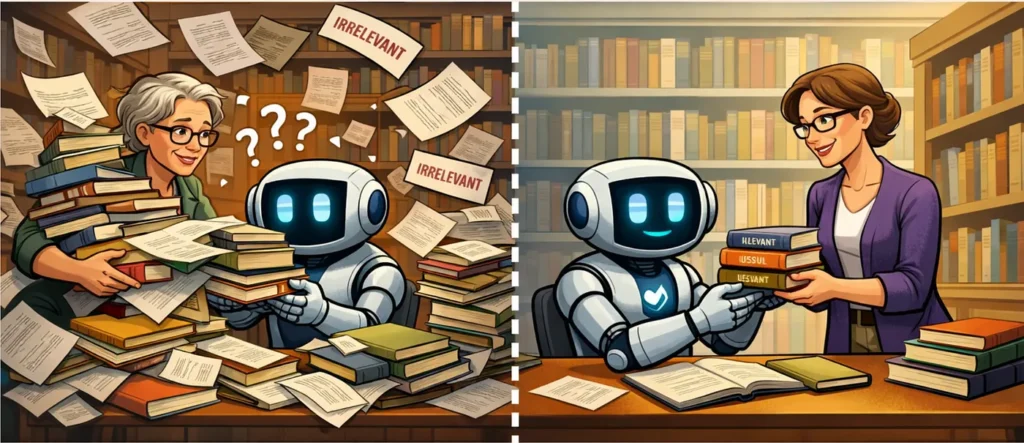

Figure 1: In LLM systems, retrieval quality is not just about finding relevant information, but about how much irrelevant material comes with it. The librarian analogy illustrates the core idea behind Bits Over Random (BoR): one system floods the context window with noisy, low-selectivity retrieval, while the other delivers a smaller, cleaner, more selective bundle that is easier for the model to use. 📖 Source: image by author via GPT-5.4.

The ICLR blogpost sharpened something I had felt for a while in production LLM systems: retrieval quality should account for both how much good content we find and how much irrelevant material we bring along with it. In other words, as we crank up recall, we also increase the risk of context pollution.

What makes BoR useful is that it gives us a language for this. BoR tells us whether retrieval is genuinely selective, or whether we are achieving success mostly by stuffing the context window with more material. When BoR falls, it is a sign that the retrieved bundle is becoming less discriminative relative to chance. In practice, that often correlates with the model being forced to read more junk, more overlap, or more weakly relevant material.

The important nuance is that BoR does not directly measure what the model “feels” when reading a prompt. It measures retrieval selectivity relative to random chance. But lower selectivity often goes hand in hand with more irrelevant context, more prompt pollution, more attention dilution, and worse downstream performance. Put simply, BoR helps tell us when retrieval is still selective and when it has started to degenerate into context stuffing.

That idea matters much more for RAG and agents than it did for classic search.

Why retrieval dashboards can mislead agent teams

One of the easiest traps in RAG is to look at your retrieval dashboard, see healthy metrics, and conclude that the system is doing well. You might see:

- high Success@K,

- strong recall,

- a good ranking metric,

- and a larger K seeming to improve coverage.

On paper things may look better, but in reality the agent might behave worse. It may show any number of symptoms: diffuse answers to queries, unreliable tool use, or simply higher latency and token cost without any real user benefit.

This disconnect happens because most retrieval dashboards still reflect a human search worldview. They assume the consumer of the retrieved set can skim, filter, and ignore junk. Humans are surprisingly good at this. LLMs are not consistently good at it.

An LLM does not “notice” ten retrieved items and casually focus on the best two in the way a strong analyst would. It processes the full bundle as prompt context. That means the retrieval layer is not just surfacing evidence; it is actively shaping the model’s working memory.

This is why I think agent teams should stop treating retrieval as a back-office ranking problem and start treating it as a reasoning-budget allocation problem. When building performant agentic systems, the key question has two parts:

- Did we retrieve something relevant?

and:

- How much noise did we force the model to process in order to get that relevance?

That is the lens BoR pushes you toward, and I have found it to be a very useful one.

Context engineering is becoming a first-class discipline

One reason this paper has resonated with me is that it fits a broader shift already happening in practice. Software engineers and ML practitioners working on LLM systems are gradually becoming something closer to context engineers.

That means designing systems that decide:

- what should enter the prompt,

- when it should enter,

- in what form,

- with what granularity,

- and what should be excluded entirely.

In traditional software, we worry about memory, compute, and API boundaries. In LLM systems, we also need to worry about context purity. The context window is contested cognitive real estate.

Every irrelevant passage, duplicated chunk, weakly related example, verbose tool definition, and poorly timed retrieval result competes with the thing the model most needs to focus on. That is why I like the pollution metaphor. Irrelevant context contaminates the model’s workspace.

The BoR poster gives this intuition a more rigorous shape by telling us that we should stop evaluating retrieval only by whether it succeeds. We should also ask how much better the retrieval is compared to chance, at the depth (top K retrieved items) that we are actually using. That is a very practitioner-friendly question.

Why tool overload breaks agents

This is where I think the BoR work becomes especially important for real-world agent systems.

In classic RAG, the corpus is often large. You may be retrieving from tens of thousands or millions of chunks. In that regime, random chance remains weak for longer. Tool selection is very different.

In an agent, the model may be choosing among 20, 50, or 100 tools. That sounds manageable until you realize that several tools are often vaguely plausible for the same task. Once that happens, dumping all tools into context is not thoroughness. It is confusion disguised as completeness.

I have seen this pattern repeatedly in agent design:

- the team adds more tools,

- descriptions become longer,

- overlap between tools increases,

- the agent starts making brittle or inconsistent choices,

- and the first instinct is to tune the prompt harder.

But often the real issue is architectural, not prompt-level. The model is being asked to choose from an overloaded context where distinctions are too weak and too numerous.

What BoR adds here is a useful way to formalize something people often feel only intuitively: there is a point where the selection task becomes so crowded that the model is no longer demonstrating meaningful selectivity.

That is why I strongly prefer agent designs with:

- Staged tool retrieval: narrowing the search in steps, first finding a small set of plausible tools, then making the final choice from that shortlist rather than from the full library at once.

- Domain routing before final tool choice: first deciding which broad area the task belongs to, such as search, CRM, finance, or coding, and only then selecting a specific tool within that domain.

- Compressed capability summaries: presenting each tool with a short, high-signal description of what it is for, when it should be used, and how it differs from nearby tools, instead of dumping long verbose specs into the prompt.

- Explicit exclusion of irrelevant tools: deliberately removing tools that are not appropriate for the current task so the model is not distracted by plausible but unnecessary options.

In my experience tool choice should be treated more like retrieval than like static prompt decoration.
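To make the staged pattern concrete, here is a minimal sketch of routing first and choosing second. Everything in it is hypothetical: the tool names, the domains, and the keyword-overlap scoring are stand-ins I made up for illustration, and a real router would more likely use a classifier or embeddings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    domain: str
    summary: str  # compressed capability summary, not the full spec


# Hypothetical registry, for illustration only.
TOOLS = [
    Tool("web_search", "search", "search the public web for pages"),
    Tool("crm_lookup", "crm", "find a customer record by name or email"),
    Tool("crm_update", "crm", "update fields on a customer record"),
    Tool("invoice_total", "finance", "sum invoice amounts for a customer"),
    Tool("run_tests", "coding", "run the unit tests in a repository"),
]

# Hypothetical domain keywords (a stand-in for a real domain classifier).
DOMAIN_KEYWORDS = {
    "search": {"web", "search", "pages"},
    "crm": {"customer", "record", "email", "contact"},
    "finance": {"invoice", "amount", "payment"},
    "coding": {"tests", "repository", "code"},
}


def overlap(query: str, words: set[str]) -> int:
    """Count query words that also appear in the given word set."""
    return len(set(query.lower().split()) & words)


def route_domain(query: str) -> str:
    """Stage 1: pick the broad domain before looking at any tool."""
    return max(DOMAIN_KEYWORDS, key=lambda d: overlap(query, DOMAIN_KEYWORDS[d]))


def shortlist(query: str, k: int = 2) -> list[Tool]:
    """Stage 2: rank only the tools in the routed domain and keep the top k."""
    domain = route_domain(query)
    candidates = [t for t in TOOLS if t.domain == domain]  # explicit exclusion
    ranked = sorted(
        candidates,
        key=lambda t: overlap(query, set(t.summary.split())),
        reverse=True,
    )
    return ranked[:k]


for tool in shortlist("update the email on this customer record"):
    print(tool.name, "-", tool.summary)
```

The point of the design is that the model only ever sees the short summaries of the shortlisted tools, never the full library.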

Understanding BoR through tool selection

One of the most useful things about BoR is that it sharpens what top-K really means in tool-using agents.

In document retrieval, increasing top-K often means moving from top-5 passages to top-20 or top-50 from a very large corpus. In tool selection, the same move has a very different character. When an agent only has a modest tool library, increasing top-K may mean moving from a shortlist of 3 candidate tools, to 5, to 8, and eventually to the familiar but dangerous fallback: just give it all 15 tools to be safe.

That often improves recall or Success@K, because the correct tool is more likely to be somewhere in the visible set. But that improvement can be misleading. As K grows, you are not only helping the router. You are also making it easier for a random selector to include a relevant tool.

So the real question is not simply: Did top-8 contain a useful tool more often than top-3? The more important question is: Did top-8 improve meaningful selectivity, or did it mostly make the task easier through brute-force inclusion? That is exactly where BoR becomes useful.

A simple example makes the intuition clearer. Suppose you have 10 tools, and for a given class of task 2 of them are genuinely relevant. If you show the model only 1 tool, the random chance of surfacing a relevant one is 20 percent. At 3 tools, the random baseline rises sharply, to roughly 53 percent. At 5 tools, random inclusion is already fairly strong, at roughly 78 percent. At 10 tools, it is 100 percent, because you have shown everything. So yes, Success@K rises as K rises. But the meaning of that success changes. At low K, success indicates real discrimination. At high K, success may simply mean you included enough of the menu that failure became difficult.
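The arithmetic behind this example can be written down explicitly. I am assuming here that "random chance" means drawing K tools uniformly without replacement from N tools, R of which are relevant, and counting a success when at least one relevant tool is drawn. The symbols N, R, and K are my own shorthand rather than notation from the blogpost.

```latex
P_{\text{rand}}(K) = 1 - \frac{\binom{N-R}{K}}{\binom{N}{K}}

N = 10,\; R = 2:\quad
P_{\text{rand}}(1) = 1 - \tfrac{8}{10} = 0.20,\quad
P_{\text{rand}}(3) = 1 - \tfrac{56}{120} \approx 0.53,\quad
P_{\text{rand}}(5) = 1 - \tfrac{56}{252} \approx 0.78,\quad
P_{\text{rand}}(10) = 1 - \tfrac{0}{1} = 1
```

Under this assumption, the baseline already reaches 1 at K = 9, because only 8 of the 10 tools are irrelevant.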

That is what I mean by helping random chance rather than meaningful selectivity.

This matters because, with tools, the problem is worse than a misleading metric. When you show too many tools, the prompt gets longer, descriptions begin to overlap, the model sees more near-matches, distinctions become fuzzier, parameter confusion rises, and the chance of choosing a plausible-but-wrong tool increases. So even though top-K recall improves, the quality of the final decision may get worse. This is the small-tool paradox: adding more candidate tools can increase apparent coverage while decreasing the agent’s ability to choose cleanly.

A practical way to think about this is that tool selection often falls into three regimes:

- The healthy regime: K is small relative to the number of tools, and the appearance of a relevant tool in the shortlist tells you the router actually did something useful. For example, 30 total tools, 2 or 3 relevant, and a shortlist of 3 or 4 still feels like genuine selection.

- The grey zone: K is large enough that recall improves, but random inclusion is also rising quickly. For example, 20 tools, 3 relevant, shortlist of 8. Here you may still gain something, but you should already be asking whether you are truly routing or merely widening the funnel.

- The collapse regime: K is so large that success mostly comes from exposing enough of the tool menu that random selection would also succeed often. If you have 15 tools, 3 relevant ones, and a shortlist of 12 or all 15, then “high recall” is no longer saying much. You are getting close to brute-force exposure.
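The three regimes can be checked numerically. The sketch below computes the random baseline for each example and the most bits a perfect router could earn over it. Two assumptions are mine: the baseline is uniform sampling without replacement, and BoR is taken as the log2 ratio of observed success to random success. Please check the blogpost for its exact definition before relying on the second one.

```python
from math import comb, log2


def random_success_at_k(n_tools: int, n_relevant: int, k: int) -> float:
    """Chance that k tools drawn uniformly without replacement include a relevant one."""
    return 1.0 - comb(n_tools - n_relevant, k) / comb(n_tools, k)


def bor_ceiling(n_tools: int, n_relevant: int, k: int) -> float:
    """Bits over random for a router with 100% Success@K.

    ASSUMPTION: BoR = log2(observed success / random success).
    """
    return log2(1.0 / random_success_at_k(n_tools, n_relevant, k))


# The three examples from the text: (label, total tools, relevant tools, shortlist size).
regimes = [("healthy", 30, 3, 4), ("grey zone", 20, 3, 8), ("collapse", 15, 3, 12)]

for label, n, r, k in regimes:
    p = random_success_at_k(n, r, k)
    print(f"{label:10s} random={p:.3f}  ceiling={bor_ceiling(n, r, k):.3f} bits")
```

With these inputs the random baseline comes out at roughly 0.36, 0.81, and 0.998. In the collapse example, even a perfect router has almost nothing left to demonstrate over chance.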

Operationally, this pushes me toward a better question. In a small-tool system, I recommend avoiding the overexposure mindset that asks:

- How large must K be before recall looks good?

The better question is:

- How small can my shortlist be while still preserving strong task performance?

That mindset encourages disciplined routing.

In practice, that usually means routing first and choosing second, keeping the shortlist very small, compressing tool descriptions so distinctions are obvious, splitting tools into domains before final selection, and testing whether increasing K improves end-to-end task accuracy, not just tool recall. A useful sanity check is this: if giving the model all tools performs about the same as your routed shortlist, then your routing layer may not be adding much value. And if giving the model more tools improves recall but worsens overall task performance, you are likely in exactly the regime where K is helping random chance more than real selectivity.
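One way to operationalize "how small can my shortlist be" is to sweep K, measure end-to-end task accuracy at each value, and keep the smallest K that stays within a tolerance of the best result. This is only a sketch: the accuracy numbers are invented, and the function name and the 0.01 tolerance are my own illustrative choices.

```python
def smallest_sufficient_k(task_accuracy_by_k: dict[int, float], tolerance: float = 0.01) -> int:
    """Return the smallest K whose end-to-end accuracy is within `tolerance` of the best."""
    best = max(task_accuracy_by_k.values())
    return min(k for k, acc in task_accuracy_by_k.items() if acc >= best - tolerance)


# Made-up end-to-end task accuracy (not tool recall) measured at each shortlist size.
measured = {2: 0.81, 3: 0.88, 5: 0.885, 8: 0.87, 15: 0.84}

k = smallest_sufficient_k(measured)
print(f"smallest sufficient shortlist: K={k}")

# Sanity check from the text: does the routed shortlist beat showing every tool?
all_tools_k = max(measured)
print(f"routing gain over all {all_tools_k} tools: {measured[k] - measured[all_tools_k]:+.3f}")
```

In this invented data, accuracy peaks around K=5 and then declines as more tools are shown, which is the pattern the sanity check is meant to expose.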

When the failure mode changes: large tool libraries

The large-tool case is different, and this is where an important nuance matters. A larger tool universe does not mean we should dump hundreds of tools into context and expect the system to work better. It just means the failure mode changes.

If an agent has 1,000 tools available and only a handful are relevant, then increasing top-K from 10 to 50 or even 100 may still represent meaningful selectivity. Random chance remains weaker for longer than it does in the small-tool case. In that sense, BoR is still useful: it helps stop us from mistaking broader exposure for better routing. It asks whether a larger shortlist reflects genuine selectivity, or whether it is merely helping by exposing a larger slice of the search space.

But BoR does not capture the whole problem here. With very large tool libraries, the issue may no longer be that random chance has become too strong. The issue may be that the model is simply drowning in options. A shortlist of 200 tools can still be better than random in BoR terms and yet still be a terrible prompt. Tool descriptions overlap, near-matches proliferate, distinctions become harder to maintain, and the model is forced to reason over a crowded semantic menu.

So BoR is valuable, but it is not sufficient on its own. It is better at telling us whether a shortlist is genuinely discriminative relative to chance than whether that shortlist is still cognitively manageable for the model. In large tool libraries, we therefore need both perspectives: BoR to measure selectivity, and downstream measures such as tool-choice quality, latency, parameter correctness, and end-to-end task success to measure usability.

As noted earlier, BoR measures selectivity relative to random chance, not what the model “feels” when reading a prompt. Still, a low BoR is often a warning sign that the model is being asked to process an increasingly noisy context window.

The design implication is the same even though the reason differs. With small tool sets, broad exposure quickly becomes bad because it helps random chance too much. With very large tool sets, broad exposure becomes bad because it overwhelms the model. In both cases, the answer is not to stuff more into context. It is to design better routing.

My own rule of thumb: the model should see less, but cleaner

If I had to summarize the practical shift in one sentence, it would be this: for LLM systems, smaller and cleaner is often better than larger and more comprehensive.

That sounds obvious, but many systems are still designed as if “more context” is automatically safer. In reality, once a baseline level of useful evidence is present, additional retrieval can become harmful. It increases token cost and latency, but more importantly it widens the field of competing cues inside the prompt.

I have come to think about prompt construction in three layers:

Layer 1: mandatory task context

- The core instruction, constraints, and immediate user objective.

Layer 2: highly selective grounding

- Only the minimum supporting evidence or tool definitions needed for the next reasoning step.

Layer 3: optional overflow

- Material that is merely plausible, loosely related, or included “just in case.”

Most failures come from letting Layer 3 invade Layer 2. That is why retrieval should be judged not just by coverage, but by its ability to preserve a clean Layer 2.
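A minimal sketch of how the three layers might be enforced in code follows. It assumes each candidate passage already carries some relevance score. The scores, the 0.5 threshold, and the cap of three items are hypothetical values chosen for illustration, not recommendations.

```python
def build_prompt(
    task_context: str,
    scored_candidates: list[tuple[float, str]],
    max_grounding_items: int = 3,
    min_score: float = 0.5,
) -> str:
    """Assemble a prompt that keeps Layer 3 material out of Layer 2."""
    # Layer 1: mandatory task context is always included.
    parts = [task_context]

    # Layer 2: only the strongest candidates above the threshold, capped in number.
    ranked = sorted(scored_candidates, key=lambda item: item[0], reverse=True)
    grounding = [text for score, text in ranked if score >= min_score]
    parts.extend(grounding[:max_grounding_items])

    # Layer 3: everything else ("just in case" material) is dropped, not appended.
    return "\n\n".join(parts)


candidates = [
    (0.91, "Refund policy: purchases can be refunded within 30 days."),
    (0.32, "Company history: founded in 2009."),
    (0.74, "Refund exceptions: digital goods are non-refundable."),
    (0.55, "Shipping policy: orders ship within 2 business days."),
]

print(build_prompt("Answer the user's refund question concisely.", candidates, max_grounding_items=2))
```

The design choice worth noting is that the cap and the threshold both apply: a passage must be good enough and also among the best few to enter the prompt.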

Where I think BoR is especially useful

I do not see BoR as a replacement for all retrieval metrics. I see it as a very useful additional lens, especially in these cases:

1. Choosing K in production

- Many teams still increase top-K until recall looks good enough. BoR encourages a more disciplined question: at what point is increasing K mostly helping random chance rather than meaningful selectivity?

2. Evaluating agent tool routing

- This may be the most compelling use-case. Agents often fail not because no good tool exists, but because too many nearly relevant tools are presented simultaneously.

3. Diagnosing why downstream quality falls despite “better retrieval”

- This is the classic paradox. Coverage goes up. Final answer quality goes down. BoR helps explain why.

4. Comparing systems with different retrieval depths

- Raw success rates can be deceptive when one system retrieves far more material than another. BoR helps normalize for that.

5. Preventing overconfidence in benchmark results

- Some benchmarks may simply be too easy at the chosen retrieval depth. A strong-looking result may be closer to luck than we think.
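To illustrate the depth-normalization point, here is a hedged sketch comparing two systems evaluated at different K. It assumes BoR is the log2 ratio of observed Success@K to the random baseline at the same K, which is my reading and should be checked against the blogpost. The corpus size, the number of relevant chunks, and both success rates are invented.

```python
from math import comb, log2


def random_success_at_k(n_items: int, n_relevant: int, k: int) -> float:
    """Chance that k items drawn uniformly without replacement include a relevant one."""
    return 1.0 - comb(n_items - n_relevant, k) / comb(n_items, k)


def bits_over_random(observed_success: float, n_items: int, n_relevant: int, k: int) -> float:
    """ASSUMED definition: log2(observed Success@K / random Success@K)."""
    return log2(observed_success / random_success_at_k(n_items, n_relevant, k))


# Hypothetical setup: 1,000 chunks, 5 of them relevant to each query.
N, R = 1000, 5

bor_a = bits_over_random(0.80, N, R, k=5)   # System A: Success@5 = 0.80
bor_b = bits_over_random(0.95, N, R, k=50)  # System B: Success@50 = 0.95

print(f"System A: Success@5=0.80  -> {bor_a:.2f} bits over random")
print(f"System B: Success@50=0.95 -> {bor_b:.2f} bits over random")
```

In this made-up case, System B has the higher raw success rate, but System A is far more selective once the easier baseline at K=50 is taken into account.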

Where I think BoR may be insufficient on its own

I like the paper, but I would not treat BoR as the final answer to retrieval evaluation. There are at least a few important caveats.

First, not every task only needs one good item. Some tasks genuinely require synthesis across multiple pieces of evidence. In those cases, a success-style view can understate the need for broader retrieval.

Second, retrieval usefulness is not binary. Two chunks may both count as “relevant,” while one is far more actionable, concise, or decision-useful for the model.

Third, prompt organization still matters. A noisy bundle that is carefully structured may perform better than a slightly cleaner bundle that is poorly ordered or badly formatted.

Fourth, the model itself matters. Different LLMs have different tolerance for clutter, different long-context behavior, and different tool-use reliability. A retrieval policy that pollutes one model may be acceptable for another.

Fifth, and this is especially relevant for large tool libraries, BoR tells us more about selectivity than about usability. A shortlist can still look meaningfully better than random and yet be too crowded, too overlapping, or too semantically messy for the model to use well.

So I would not use BoR in isolation. I would pair it with:

- downstream task accuracy,

- latency and token-cost analysis,

- tool-call quality,

- parameter correctness,

- and some explicit measure of prompt cleanliness or redundancy.

Still, even with those caveats, BoR contributes something important: it forces us to stop confusing coverage with selectivity.

How this changes evaluation practice for me

The biggest practical shift is that I would now evaluate retrieval systems more like this:

- First, look at standard retrieval metrics. They still matter. You should ideally consider a bag-of-metrics approach, leveraging multiple complementary metrics.

Then ask:

- What is the random baseline at this depth?

- Is higher Success@K actually demonstrating skill, or just easier conditions?

- How much extra context did we add to get that gain?

- Did downstream answer quality improve, stay flat, or worsen?

- Are we making the model reason, or merely making it read more?

For agents, I would go even further:

- How many tools were visible at decision time?

- How much overlap existed between candidate tools?

- Could the system have routed first and selected second?

- Was the model asked to choose from a clean shortlist, or from a crowded menu?

That is a more realistic evaluation setup for the kinds of systems many teams are actually deploying.
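The overlap question can be approximated with very simple tooling. The sketch below uses Jaccard similarity over description words as a crude proxy for how confusable two tools are. The tool names and descriptions are hypothetical, and word overlap is my own stand-in; embedding similarity would likely be a better measure in practice.

```python
from itertools import combinations


def jaccard(a: str, b: str) -> float:
    """Word-level Jaccard similarity between two descriptions."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    union = words_a | words_b
    return len(words_a & words_b) / len(union) if union else 0.0


def overlap_report(descriptions: dict[str, str]) -> dict:
    """Summarize how crowded the visible tool set is at decision time."""
    pairs = {
        (x, y): jaccard(descriptions[x], descriptions[y])
        for x, y in combinations(sorted(descriptions), 2)
    }
    if not pairs:  # zero or one visible tool: nothing to confuse
        return {"visible_tools": len(descriptions), "mean_overlap": 0.0, "most_confusable": None}
    return {
        "visible_tools": len(descriptions),
        "mean_overlap": sum(pairs.values()) / len(pairs),
        "most_confusable": max(pairs, key=pairs.get),
    }


# Hypothetical shortlist shown to the model.
visible = {
    "crm_lookup": "find a customer record by email",
    "crm_search": "find a customer record by name",
    "run_tests": "run the unit tests",
}

print(overlap_report(visible))
```

Logging a report like this next to each tool-choice decision makes it possible to see whether failures cluster where the visible set was large or highly overlapping.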

The broader lesson

The main lesson I took from the ICLR poster is much broader than a single new metric: it’s that LLM system quality depends heavily on the cleanliness of the context we construct around the model. That has consequences across the Agentic stack:

- retrieval,

- memory,

- tool routing,

- agent planning,

- multi-step workflows,

- and even UI design for human-in-the-loop systems.

The best LLM systems will be the ones that expose the right information, at the right moment, in the smallest clean bundle that still supports the task. This is what good context engineering looks like.

Final thought

For years, retrieval was mostly about finding needles in haystacks. For LLM systems, that is no longer enough. Now the job is also to avoid dragging half the haystack into the prompt along with the needle.

That is why I think the BoR idea matters. It gives practitioners a better language for a real production problem: how to measure when useful context has quietly turned into polluted context. And once you start looking at your systems that way, a lot of familiar agent failures begin to make much more sense.

BoR does not directly measure what the model “feels” when reading a prompt, but it does tell us when retrieval is ceasing to be meaningfully selective and starting to resemble brute-force context stuffing. In practice, that is often exactly the regime where LLMs begin to read more junk, reason less cleanly, and perform worse downstream.

More broadly, I think this points to an important emerging sub-field: developing better metrics for measuring LLM system performance in realistic settings, not just model capability in isolation. We have become reasonably good at measuring accuracy, recall, and benchmark performance, but much less good at measuring what happens when a model is forced to reason through cluttered, overlapping, or weakly filtered context.

That, to me, exposes a real gap. BoR helps measure selectivity relative to chance, which is valuable. But there is still a missing concept around what I would term cognitive overload: the point at which a model may still have the right information somewhere in view, yet performs worse because too many competing options, snippets, tools, or cues are presented at once. In other words, the failure is no longer just retrieval failure. It is a reasoning failure induced by prompt pollution.

I suspect that better ways of measuring this kind of cognitive overload will become increasingly important as agentic systems grow more complex. The next leap forward may not just come from larger models or bigger context windows, but from better ways of quantifying when the model’s working context has crossed the line from useful breadth into harmful overload.


Disclaimer: The views and opinions expressed in this article are solely my own and do not represent those of my employer or any affiliated organisations. The content is based on personal reflections and speculative thinking about the future of science and technology. It should not be interpreted as professional, academic, or investment advice. These forward-looking perspectives are intended to spark discussion and imagination, not to make predictions with certainty.