that linear models can be… well, stiff. Have you ever looked at a scatter plot and realized a straight line just isn’t going to cut it? We’ve all been there.

Real-world data is rarely tidy. Most of the time, it feels like the exception is the rule. The data you get on the job looks nothing like the clean, linear datasets we practiced on during years of school.

For example, you’re looking at something like “Energy Demand vs. Temperature.” It’s not a line; it’s a curve. Usually, our first instinct is to reach for Polynomial Regression. But that’s a trap!

If you’ve ever seen a model curve go wild at the edges of your graph, you’ve witnessed Runge’s phenomenon. High-degree polynomials are like a toddler with a crayon: too flexible and with no discipline.

That’s why I am going to show you this option called Splines. They’re a neat solution: more flexible than a line, but far more disciplined than a polynomial.

Splines are piecewise polynomial functions used to fit smooth curves.

Instead of trying to fit one complex equation to your entire dataset, you break the data into segments at points called knots. Each segment gets its own simple polynomial, and they’re all stitched together so smoothly you can’t even see the seams.

The Problem with Polynomials

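Imagine we have a non-linear trend, and we fit a polynomial of degree 2 or 3 to it. It looks okay in the middle of the data, but when the trend needs more flexibility we keep raising the degree, and then the curve starts going way off at the edges. This is Runge’s phenomenon [2]: high-degree polynomials fitted to evenly spaced points tend to oscillate near the ends of the interval. On top of that, a polynomial is a single global equation, so one weird data point at one end can pull the curve out of whack at the other end.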

Example of low versus high-degree polynomials. Image by the author.
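To make the edge problem concrete, here is a minimal sketch using the classic test function from Runge’s original example, 1 / (1 + 25x²), which is not part of this article’s dataset. It interpolates that function at evenly spaced points with polynomials of increasing degree and measures the worst-case error on a fine grid. The helper name runge and the chosen degrees are my own picks for illustration.

```python
import numpy as np
from numpy.polynomial import Polynomial


def runge(x):
    """Runge's classic test function, 1 / (1 + 25 x^2)."""
    return 1.0 / (1.0 + 25.0 * x**2)


x_dense = np.linspace(-1, 1, 1001)  # fine grid used to measure the error

max_errors = {}
for degree in (4, 10, 20):
    # degree + 1 evenly spaced nodes, so the fit passes through every node
    x_nodes = np.linspace(-1, 1, degree + 1)
    poly = Polynomial.fit(x_nodes, runge(x_nodes), deg=degree)
    max_errors[degree] = np.max(np.abs(poly(x_dense) - runge(x_dense)))
    print(f"degree {degree:2d}: max error = {max_errors[degree]:.3f}")
```

The worst-case error grows as the degree goes up, and almost all of it sits near the two ends of the interval.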

Why Splines are the “Just Right” Choice

Splines don’t try to fit one giant equation to everything. Instead, they divide your data into segments using points called knots. This brings a few advantages:

- Local Control: What happens in one segment stays in that segment. Because these chunks are local, a weird data point at one end of your graph won’t ruin the fit at the other end.

- Smoothness: The pieces are built from B-splines (basis splines), so the curve stays smooth where segments meet. With cubic pieces, the value, slope, and curvature are all continuous at each knot.

- Stability: Unlike polynomials, they don’t go wild at the boundaries.

Ok. Enough talk, now let’s implement this solution.

Implementing it with Scikit-Learn

Scikit-Learn’s SplineTransformer is the go-to choice for this. It turns a single numeric feature into multiple basis features that a simple linear model can then use to learn complex, non-linear shapes.

Let’s import some modules.

import numpy as np

import matplotlib.pyplot as plt

from sklearn.preprocessing import SplineTransformer

from sklearn.linear_model import Ridge

from sklearn.pipeline import make_pipeline

from sklearn.model_selection import GridSearchCV

Next, we create some curved oscillating data.

# 1. Create some ‘wiggly’ synthetic data (e.g., seasonal sales)

rng = np.random.RandomState(42)

X = np.sort(rng.rand(100, 1) * 10, axis=0)

y = np.sin(X).ravel() + rng.normal(0, 0.1, X.shape[0])

# Plot the data

plt.figure(figsize=(12, 5))

plt.scatter(X, y, color='gray', alpha=0.5, label='Data')

plt.legend()

plt.title("Data")

plt.show()

Plot of the data generated. Image by the author.

Ok. Now we will create a pipeline that runs the SplineTransformer with the default settings, followed by a Ridge Regression.

# 2. Build a pipeline: Splines + Linear Model

# n_knots=5 (default) creates 4 segments; degree=3 makes it a cubic spline

model = make_pipeline(

    SplineTransformer(n_knots=5, degree=3),

    Ridge(alpha=0.1)

)

Next, we will tune the number of knots for our model. We use GridSearchCV to run multiple versions of the model, testing different knot counts until it finds the one that performs best on our data.

# We tune 'n_knots' to find the best fit

param_grid = {'splinetransformer__n_knots': range(3, 12)}

grid = GridSearchCV(model, param_grid, cv=5)

grid.fit(X, y)

print(f"Best knot count: {grid.best_params_['splinetransformer__n_knots']}")

Best knot count: 8

Then, we retrain our spline model with the best knot count, predict, and plot the data. Also, let us understand what we are doing here with this quick breakdown of the SplineTransformer class arguments:

- n_knots: number of joints in the curve. The more you have, the more flexible the curve gets.

- degree: This defines the “smoothness” of the segments. It refers to the degree of the polynomial used between knots (1 is a line; 2 is smoother; 3 is the default).

- knots: This one tells the model where to place the joints. For example, 'uniform' spaces the knots evenly across the feature’s range, while 'quantile' places them at quantiles of the data, so more knots land where the data is denser.

  - Tip: Use 'quantile' if your data is clustered.

- extrapolation: Tells the model what it should do when it encounters data outside the range it saw during training. The options are 'error', 'constant' (the default), 'linear', 'continue', and 'periodic'.

  - Tip: Use 'periodic' for cyclic data, such as calendar or clock features.

- include_bias: Whether to keep the full set of basis functions. B-splines sum to one at every data point, so together they already act as an implicit intercept (bias) term. If you are using a LinearRegression or Ridge model later in your pipeline, those models have fit_intercept=True by default, so you can often set this to False, which drops one spline basis column, to avoid redundancy.

# 2. Build the optimized Spline

model = make_pipeline(

    SplineTransformer(n_knots=8,

                      degree=3,

                      knots='uniform',

                      extrapolation='constant',

                      include_bias=False),

    Ridge(alpha=0.1)

).fit(X, y)

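# 3. Predict and Visualize

y_plot = model.predict(X)

# Polynomial baseline (degree 20). Its fitting code is not shown in this article,

# so the line below is an assumed stand-in; Polynomial.fit rescales X internally.

y_plot_poly = np.polynomial.Polynomial.fit(X.ravel(), y, deg=20)(X.ravel())

# Plot

plt.figure(figsize=(12, 5))

plt.scatter(X, y, color='gray', alpha=0.5, label='Data')

plt.plot(X, y_plot, color='teal', linewidth=3, label='Spline Model')

plt.plot(X, y_plot_poly, color='purple', linewidth=2, label='Polynomial Fit (Degree 20)')

plt.legend()

plt.title("Splines: Flexible yet Disciplined")

plt.show()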

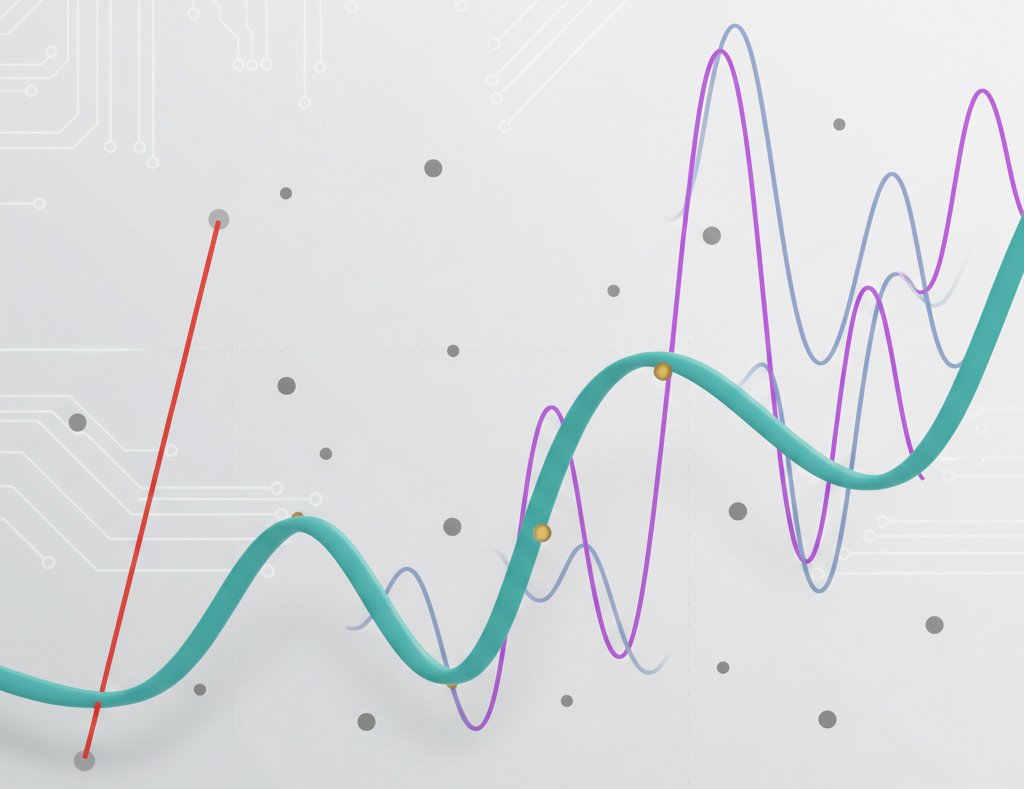

Here is the result. With splines, we have better control and a smoother model, escaping the problem at the ends.

Comparison of a high-degree polynomial (degree=20) vs. splines. Image by the author.

We are comparing a polynomial model of degree=20 with the spline model. One can argue that a lower-degree polynomial would model this data much better, and that would be correct. I tested polynomials up to degree 13, and they still fit this dataset well.

However, that is exactly the point of this article. When the model is not fitting the data well and we have to keep increasing the polynomial degree, we will eventually run into the wild-edges problem.

Real-Life Applications

Where would you actually use this in business?

- Time-Series Cycles: Use extrapolation='periodic' for features like “hour of day” or “month of year.” It ensures the model knows that 11:59 PM is right next to 12:01 AM. With this argument, we tell the SplineTransformer that the end of our cycle should wrap around and meet the beginning, so the value and slope of the spline at the end of the day match those at the start of the next day.

- Dose-Response in Medicine: Modeling how a drug affects a patient. Most drugs follow a non-linear curve where the benefit eventually levels off (saturation) or, worse, turns into toxicity. Splines are the “gold standard” here because they can map these complex biological shifts without forcing the data into a rigid shape.

- Income vs. Experience: Salary often grows quickly early on and then plateaus; splines capture this “bend” well.

Before You Go

We’ve covered a lot here, from why polynomials can be a “wild” choice to how periodic splines solve the midnight gap. Here’s a quick wrap-up to keep in your back pocket:

- The Golden Rule: Use Splines when a straight line is too simple, but a high-degree polynomial starts oscillating and overfitting.

- Knots are Key: Knots are the “joints” of your model. Finding the right number via GridSearchCV is the difference between a smooth curve and a jagged mess.

- Periodic Power: For any feature that cycles (hours, days, months), use extrapolation='periodic'. It ensures the model understands that the end of the cycle flows smoothly back into the beginning.

- Feature Engineering > Complex Models: Often, a simple Ridge regression combined with SplineTransformer can match or beat a complex “Black Box” model while remaining much easier to explain to your boss.

If you liked this content, find more about my work and my contacts on my website.

https://gustavorsantos.me

GitHub Repository

Here is the complete code of this exercise, and a couple of extras.

https://github.com/gurezende/Studying/blob/master/Python/sklearn/SplineTransformer.ipynb

References

[1] SplineTransformer Documentation. https://scikit-learn.org/stable/modules/generated/sklearn.preprocessing.SplineTransformer.html

[2] Runge’s Phenomenon. https://en.wikipedia.org/wiki/Runge%27s_phenomenon

[3] Make Pipeline Docs. https://scikit-learn.org/stable/modules/generated/sklearn.pipeline.make_pipeline.html