A new drama has taken the book publishing world by storm: Publisher Hachette Book Group canceled the upcoming U.S. release of the horror book Shy Girl just weeks before publication, over suspicion that AI was used in its making.

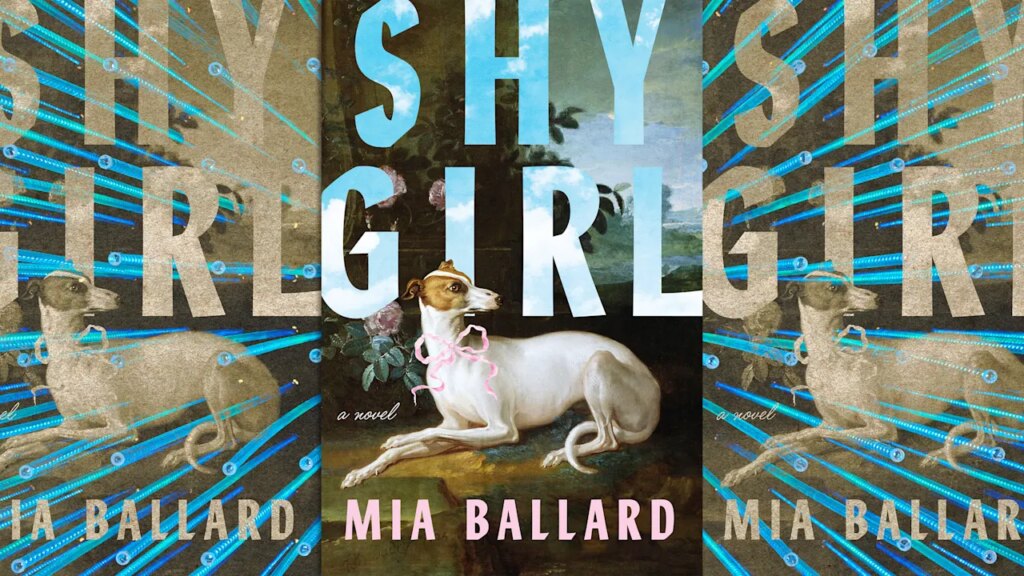

Authored by U.S. poet and fiction writer Mia Ballard, Shy Girl is a novel described as focusing on the life of a girl with severe obsessive–compulsive disorder (OCD) who agrees to be held captive as an affluent man’s pet in order to rid herself of financial woes.

The book was first self-published early last year, with another version released in November by Hachette’s U.K. imprint Wildfire. Hachette confirmed the cancellation to the New York Times, which first reported on the story.

While the self-published version of the book initially received positive reviews, the more recent version has drawn online speculation about how the novel was written.

In a Reddit thread from two months ago, one user dissected the common syntax of large language models (LLMs) and compared it with the book’s prose.

“It seems so obvious to me, but let me know if you agree,” the user said in a discussion that now includes hundreds of comments. “If it isn’t AI, she’s a terrible writer. Her writing is truly indistinguishable from an LLM.”

In January this year, users on the novel’s Goodreads page also voiced their suspicions.

“As an editor, I’ve read a few specifically ChatGPT written books, and this has not only all the hallmarks, but some specific repeated phrases that I’ve read in other ChatGPT works,” one commenter wrote.

But despite the online speculation, the book appeared to be on course for its U.S. release until this week.

Why was the book canceled?

According to the Times, Hachette pulled the book from shelves and canceled its U.S. release on Thursday, a day after the newspaper approached the publisher with what it described as evidence that the novel was AI-generated.

Fast Company has reached out to Ballard for comment via email. The author’s Instagram account appeared to be deactivated as of Friday.

According to the Times, Ballard has since admitted that an acquaintance who edited the self-published version of the novel might have used AI.

“This controversy has changed my life in many ways and my mental health is at an all time low and my name is ruined for something I didn’t even personally do,” Ballard told The New York Times over email.

Who detects the detectors?

The controversy underscores many questions that have gripped the book publishing industry in the era of ChatGPT, including how one might spot the use of AI in the first place.

AI detectors essentially estimate the likelihood that AI was used, based on specific markers in the text.

“Unlike plagiarism detectors that check for copied content, or spam filters that look for malicious patterns, AI detectors focus on the subtle stylistic fingerprints left behind by machine-writing processes,” Adobe explains.

Studies have shown that AI detectors can often spot AI use, but no detector has achieved 100% reliability. In fact, in school settings, where these detectors are often used, high error rates have led to false accusations of AI use.

Even initiatives spearheaded by the AI companies themselves have been unsuccessful, with OpenAI shutting down its AI detection tool as far back as 2023.

Readers, meanwhile, continue to debate the issue. For instance, the use of em dashes in writing has in the past been associated with LLMs like ChatGPT. But defenders of the punctuation mark say it’s not always a reliable sign.

“This is not a great indicator in published creative writing—we love em dashes,” one Redditor said in a Shy Girl-related thread. “When might it raise an eyebrow? When it is consistently used to separate two quite simple clauses, and not so often used parenthetically.”