rewrite of the same system prompt.

You MUST return ONLY valid JSON. No markdown. No code fences. No explanation. JUST the JSON object.

I had written MUST in all-caps. To a language model. As if emphasis would work on something that doesn’t have feelings or, apparently, a consistent definition of “valid JSON.”

It didn’t work. Here’s what did.

How GPT-4 Ended Up in a Nightly Batch Job

Our team ingests research documents: PDFs, plain text, and occasionally those pesky semi-structured reports that some vendor clearly exported from a spreadsheet they were very proud of. Part of the ingestion pipeline classifies them and extracts structured fields before anything touches the data warehouse: methodology type, dataset source, key metrics.

This sounds like a solved problem. It usually is, until there are about forty types of methods listed and the documents stop looking anything like the ones you trained on.

For a while, we handled this using regex, rule-based extractors, and a fine-tuned BERT model. The good thing is, it worked, but maintaining it felt like fixing a CSS file from 2015, where you touch one rule and something unrelated breaks on a page you haven’t visited in months.

So when GPT-4 came along, we tried it.

Not going to lie, it was kind of incredible. Edge cases that were driving BERT crazy for months, formats we’d never seen before, and documents with inconsistent sections were all taken care of cleanly by GPT-4.

The team demo went well. I mean, someone even said “wow” out loud.

I sent my manager a text that evening: “think we’ve cracked the extraction problem.” Sent with confidence.

Two weeks after we deployed it, the failures started.

The Problem With “Mostly Consistent”

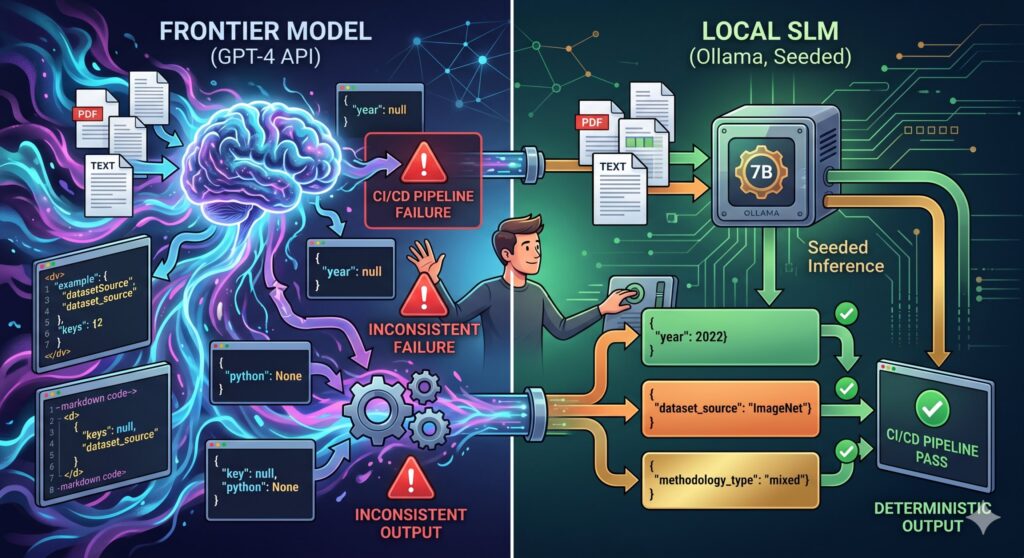

From my experience, GPT-4 is capable and non-deterministic.

For a lot of use cases, the non-determinism doesn’t matter. For a nightly batch pipeline feeding a data warehouse, it matters a lot.

At temperature=0 you get mostly consistent outputs. In a CI/CD context, “mostly” means “it’ll break on a Friday.”

The failures weren’t dramatic either; if they had been, they would’ve been easier to debug. GPT-4 was not hallucinating fields or returning garbage.

It was doing subtle things. “dataset_source” one night, “datasetSource” the next, “source_dataset” the night after. Markdown code fences around the JSON even though we’d told it not to, across every version of the prompt. Numbers returned as strings. JSON null returned as the Python string “None”; I spent longer than I’d like to admit staring at that one.

Each failure followed the same ritual.

Pydantic catches it downstream, the pipeline fails, I confirm it’s another formatting quirk, and I re-run the job manually. It passes. Then, about three days later, a different quirk starts the cycle all over again.

So I started keeping a log. Six weeks of it:

- 23 pipeline failures from GPT-4 output inconsistency

- ~18 minutes average to diagnose and re-run

- 0 actual bugs in the pipeline code

Zero real bugs. Every single failure was the model being subtly different from what it had been the night before. That’s the number that made me stop defending the setup.

What I Tried Before Admitting the Real Problem

Prompting

Seven rewrites over two days.

I didn’t even realize it had gotten to that many. I just wanted a good fix. And it didn’t even stop there.

I tried all-caps instructions, few-shot examples, and counter-examples with a “Do NOT do this” header. I even tried adding a reminder at the very end of the user message as a last-ditch nudge, as if the model would get to that line and think oh right, JSON only, nearly forgot.

At one point, the same instruction appeared in three different places in the same prompt, and I genuinely thought that might help. Failures continued, unchanged.

A cleanup parser

When prompting failed, I wrote code to clean up any mess that GPT-4 threw back at me.

Strip markdown fences, find the JSON object if it was buried in text, and log the raw output when nothing worked.
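Roughly, a cleanup parser of that kind looks like the sketch below. This is a reconstruction under assumptions, not the original code: the function name `clean_model_output` and the "outermost braces" fallback are illustrative choices.

```python
import json
import logging
import re

logger = logging.getLogger(__name__)

# Matches an optional ```json / ``` fence wrapped around the whole reply.
_FENCE_RE = re.compile(r"^```(?:json)?\s*|\s*```$")


def clean_model_output(raw: str) -> dict:
    """Best-effort recovery of a JSON object from a chatty model reply."""
    text = _FENCE_RE.sub("", raw.strip()).strip()
    try:
        parsed = json.loads(text)
    except json.JSONDecodeError:
        # Fall back to the outermost {...} span if the JSON is buried in prose.
        start, end = text.find("{"), text.rfind("}")
        if start == -1 or end <= start:
            logger.error("No JSON object found. Raw output: %s", raw[:400])
            raise
        try:
            parsed = json.loads(text[start : end + 1])
        except json.JSONDecodeError:
            logger.error("Unparseable JSON. Raw output: %s", raw[:400])
            raise
    if not isinstance(parsed, dict):
        raise ValueError(f"Expected a JSON object, got {type(parsed).__name__}")
    return parsed
```

Note what this does not do: it only checks that the text parses. A reply like `{"datasetSource": "x"}` goes straight through, because nothing here knows which keys are expected.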

This method actually worked for about a week, which was enough time for me to feel good about it.

And then GPT-4 began returning structurally valid JSON with wrong key names, camelCase instead of snake_case. The parser passed it through fine, and the error surfaced three steps later.

I was playing whack-a-mole with a model that had infinite moles.
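In hindsight, a key check right at the parse step would at least have surfaced the drift where it happened instead of three steps later. A minimal sketch, assuming the five field names from the extraction schema shown later in this post; `check_keys` is a hypothetical helper, not something the pipeline had at the time:

```python
# Field names taken from the extraction schema; the helper itself is illustrative.
EXPECTED_KEYS = {
    "methodology_type",
    "dataset_source",
    "primary_metric",
    "year",
    "confidence_score",
}
REQUIRED_KEYS = {"methodology_type", "dataset_source", "year"}


def check_keys(record: dict) -> dict:
    """Fail fast if the model renamed, invented, or dropped a key."""
    unknown = set(record) - EXPECTED_KEYS
    missing = REQUIRED_KEYS - set(record)
    if unknown or missing:
        raise ValueError(
            f"unexpected keys: {sorted(unknown)}; missing keys: {sorted(missing)}"
        )
    return record
```

This doesn't fix anything. It just moves the failure to the line where the cause is visible.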

response_format + temperature=0

OpenAI’s response_format={"type": "json_object"} combined with temperature=0 brought failures from 23 down to about 9. Meaningful progress. Still not zero, and “nine random failures per six weeks” is not a pipeline property I could defend.
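For reference, here is a sketch of what that request looks like. The helper name `build_json_mode_request` and the system prompt wording are assumptions for illustration. JSON mode also expects the word "JSON" to appear somewhere in the messages.

```python
def build_json_mode_request(document_text: str, model: str = "gpt-4-turbo") -> dict:
    """Build keyword arguments for a chat completion in JSON mode.

    JSON mode constrains the reply to syntactically valid JSON.
    It does not constrain key names or value types.
    """
    return {
        "model": model,
        "temperature": 0,
        "response_format": {"type": "json_object"},
        "messages": [
            {
                "role": "system",
                "content": "Extract the document metadata and reply with a JSON object.",
            },
            {"role": "user", "content": document_text},
        ],
    }


# Usage, assuming an OpenAI client is already configured:
# response = client.chat.completions.create(**build_json_mode_request(text))
```

That docstring is probably the explanation for the remaining failures: the output always parsed, but nothing pinned down the schema.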

Function calling

This was the one that almost worked. Forcing output through a typed schema contract genuinely tightened things.

I stopped checking logs every morning, stopped bracing for the Slack notification. Told someone on the team it was stable. Which, if you work in software, you know is the fastest way to jinx something.

    functions = [
        {
            "name": "extract_document_metadata",
            "parameters": {
                "type": "object",
                "properties": {
                    "methodology_type": {
                        "type": "string",
                        "enum": ["experimental", "observational", "review", "simulation", "mixed"]
                    },
                    "dataset_source": {"type": "string"},
                    "primary_metric": {"type": "string"},
                    "year": {"type": "integer"},
                    "confidence_score": {"type": "number", "minimum": 0, "maximum": 1}
                },
                "required": ["methodology_type", "dataset_source", "year"]
            }
        }
    ]

    response = openai_client.chat.completions.create(
        model="gpt-4-turbo",
        messages=messages,
        functions=functions,
        function_call={"name": "extract_document_metadata"},
        temperature=0
    )

Then one Tuesday, the OpenAI API had a 20-minute disruption. Pipeline failed hard; it wasn’t a model issue, just a network dependency we’d never properly reckoned with.

We couldn’t version-lock the model, couldn’t run offline, couldn’t answer “what changed between the run that worked and the run that didn’t?” because the model on the other end wasn’t ours.

Sitting there waiting for someone else’s API to recover, I finally asked the question I should have asked months earlier: Does this specific job actually need a frontier model?

The Local Models Are Better Than I Expected

I went in fully expecting to spend a day confirming they weren’t good enough. I had a conclusion ready before I’d run a single test: tried it, quality wasn’t there, staying on GPT-4 with better retry logic. A story where the first decision was still defensible.

It took maybe three hours to realize I’d been thinking about this wrong.

Document extraction into a fixed schema isn’t actually a hard task for a language model. Not in the way that makes a frontier model necessary. There’s no reasoning involved, no synthesis, no need for the breadth of world knowledge that makes GPT-4 worth what it costs.

What it actually is: structured reading comprehension. The model reads a document and fills in fields. A well-trained 7B model does this fine. And with the right setup, specifically, seeded inference, it does it identically every single run.

I ran four models against 50 documents I’d manually annotated:

- Phi-3-mini (3.8B): Better instruction following than I expected for a 3.8B model, but it fell apart on anything over about 3,000 tokens. I nearly picked this before I looked at the longer doc results.

- Mistral 7B Instruct: Solid everywhere, no real surprises in either direction. The Toyota Camry of local models. You’d be fine with this.

- Qwen2.5-7B-Instruct: This one won clearly. Best structured output consistency of the four by a margin wide enough that it wasn’t a close call.

- Llama 3.2 3B Instruct: This is fast, but the extraction quality dropped off enough on edge cases that I wouldn’t run it on production data without a lot more validation work first.
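A comparison like this needs some way of scoring each model against the annotations. One simple option, and an assumption on my part here rather than a record of the exact scoring used, is per-field exact match:

```python
def field_accuracy(
    predictions: list[dict], gold: list[dict], fields: list[str]
) -> dict[str, float]:
    """Fraction of documents where each field exactly matches the annotation."""
    if not gold or len(predictions) != len(gold):
        raise ValueError("predictions and gold must be non-empty and the same length")
    scores = {}
    for field in fields:
        hits = sum(
            1 for pred, truth in zip(predictions, gold)
            if pred.get(field) == truth.get(field)
        )
        scores[field] = hits / len(gold)
    return scores


# Usage with two toy documents:
gold = [{"year": 2021, "methodology_type": "review"},
        {"year": 2019, "methodology_type": "mixed"}]
preds = [{"year": 2021, "methodology_type": "review"},
         {"year": 2018, "methodology_type": "mixed"}]
print(field_accuracy(preds, gold, ["year", "methodology_type"]))
```

Exact match is harsh on free-text fields like dataset_source, so treat a score from something like this as a floor.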

Qwen2.5 and Mistral both hit 90–95% accuracy on the annotated set, lower than GPT-4 on genuinely ambiguous documents, yes.

But I ran Qwen2.5 on the same 20 documents three times and went back to diff the results. No diffs. Same JSON, same values, same field order every run.

After six weeks of failures I couldn’t predict, that felt almost too clean to be real.
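The repeat-and-diff check is easy to automate. A minimal sketch, assuming `extract` is whatever callable turns document text into a dict; the function name `find_nondeterministic_docs` is illustrative:

```python
import json
from typing import Callable


def find_nondeterministic_docs(
    extract: Callable[[str], dict], documents: list[str], runs: int = 3
) -> list[int]:
    """Return indexes of documents whose output differs between repeated runs."""
    unstable = []
    for index, doc in enumerate(documents):
        # No sort_keys on purpose: field order is part of what gets compared.
        outputs = {json.dumps(extract(doc)) for _ in range(runs)}
        if len(outputs) > 1:
            unstable.append(index)
    return unstable
```

An empty list back from this is the "no diffs" result. Anything else tells you exactly which documents to look at.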

Before and After

The GPT-4 extractor at its most stable, not the original prototype, the version after months of defensive code had accumulated around it:

    # extractor_gpt4.py
    import os
    import json
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

    SYSTEM_PROMPT = """You are a research document metadata extractor.
    Given the text of a research document, extract the specified metadata fields.
    Be precise. If you are unsure about a field, use your best judgment based on context."""

    EXTRACTION_SCHEMA = {
        "name": "extract_document_metadata",
        "parameters": {
            "type": "object",
            "properties": {
                "methodology_type": {
                    "type": "string",
                    "enum": ["experimental", "observational", "review", "simulation", "mixed"]
                },
                "dataset_source": {"type": "string"},
                "primary_metric": {"type": "string"},
                "year": {"type": "integer"},
                "confidence_score": {"type": "number"}
            },
            "required": ["methodology_type", "dataset_source", "year"]
        }
    }

    def extract_metadata_gpt4(document_text: str) -> dict:
        response = client.chat.completions.create(
            model="gpt-4-turbo",
            messages=[
                {"role": "system", "content": SYSTEM_PROMPT},
                {"role": "user", "content": f"Extract metadata from this document:\n\n{document_text[:8000]}"}
            ],
            functions=[EXTRACTION_SCHEMA],
            function_call={"name": "extract_document_metadata"},
            temperature=0,
            timeout=30
        )
        try:
            # The forced function call returns its arguments as a JSON string
            args = response.choices[0].message.function_call.arguments
            return json.loads(args)
        except (AttributeError, json.JSONDecodeError) as e:
            raise ValueError(f"Failed to parse GPT-4 response: {e}")

Latency: 3.5–5.8s per document. Cost: ~$0.04 per call. Failure rate: ~6% after all the fixes.

For the replacement, I chose Ollama. A colleague mentioned it offhandedly, and the docs looked reasonable at 11 pm, which is honestly how a lot of infrastructure decisions get made. The REST API is close enough to OpenAI’s that the swap took about an hour:

    # extractor_slm.py
    import json
    import logging
    import os
    import re

    import requests

    logger = logging.getLogger(__name__)

    OLLAMA_URL = os.getenv("OLLAMA_URL", "http://localhost:11434")
    MODEL = os.getenv("MODEL_NAME", "qwen2.5:7b-instruct-q4_K_M")

    # Tested empirically: 7B q4 on a T4 takes ~8s on cold first token,
    # then ~1.5s per doc chunk. 45s covers bad days.
    _TIMEOUT = 45

    _SYSTEM_PROMPT = """\
    You are a metadata extractor for research documents.
    Return ONLY a JSON object: no explanation, no markdown, no surrounding text.

    Fields to extract:
    - methodology_type (required): one of experimental | observational | review | simulation | mixed
    - dataset_source (required): where the data came from
    - year (required): integer
    - primary_metric: main eval metric if present
    - confidence_score: your confidence 0.0–1.0

    Output example:
    {"methodology_type": "experimental", "dataset_source": "ImageNet", "year": 2022, "primary_metric": "top-1 accuracy", "confidence_score": 0.95}
    """

    def call_ollama(doc_text: str) -> dict:
        payload = {
            "model": MODEL,
            "messages": [
                {"role": "system", "content": _SYSTEM_PROMPT},
                {"role": "user", "content": doc_text[:6000]},
            ],
            "stream": False,
            "options": {
                "temperature": 0,
                "seed": 42,  # determinism: this is the whole point
            },
        }

        try:
            resp = requests.post(f"{OLLAMA_URL}/api/chat", json=payload, timeout=_TIMEOUT)
            resp.raise_for_status()
        except requests.exceptions.Timeout:
            raise RuntimeError(f"Ollama timed out after {_TIMEOUT}s; is the model loaded?")
        except requests.exceptions.ConnectionError:
            raise RuntimeError(f"Can't reach Ollama at {OLLAMA_URL}; is the container running?")

        raw = resp.json()["message"]["content"].strip()
        # Strip a leading/trailing markdown fence if the model added one anyway
        cleaned = re.sub(r"^```(?:json)?\s*|\s*```$", "", raw).strip()

        try:
            return json.loads(cleaned)
        except json.JSONDecodeError as e:
            logger.error("Failed to parse model output: %s\nRaw was: %s", e, raw[:400])
            raise

The "seed": 42 in options is what actually delivers the determinism. Ollama supports seeded generation: same input, same seed, same output, every time, not approximately, as long as the model file and the runtime underneath it stay fixed. temperature=0 with a hosted API implied this but never guaranteed it because you’re not controlling the runtime. Locally, you are.

Getting It Into GitHub Actions

Two things that aren’t obvious until they bite you. First: GitHub Actions service containers are network-isolated from the runner. You can’t docker exec into them; the model pull has to go through the REST API. Second: cache the model. A cold pull is 4.7GB and adds 3–4 minutes to every job.

    # The non-obvious parts; the rest is standard Actions boilerplate
    - name: Cache Ollama model
      uses: actions/cache@v4
      with:
        path: ~/.ollama/models
        key: ollama-qwen2.5-7b-q4

    - name: Pull SLM model
      # Can't docker exec into service containers, so use the API
      run: |
        curl -s http://localhost:11434/api/pull \
          -d '{"name": "qwen2.5:7b-instruct-q4_K_M"}' \
          --max-time 300

    - name: Warm up model
      run: |
        curl -s http://localhost:11434/api/generate \
          -d '{"model": "qwen2.5:7b-instruct-q4_K_M", "prompt": "hello", "stream": false}' \
          > /dev/null

    - name: Run ingestion pipeline
      run: python pipeline/run_ingestion.py
      env:
        OLLAMA_URL: "http://localhost:11434"
        MODEL_NAME: "qwen2.5:7b-instruct-q4_K_M"

The Capitalization Bug I Spent Too Long On

Qwen 2.5 occasionally returns “Experimental” with a capital E despite explicit instructions not to. The Literal type check rejects it, and you get a validation failure on a document the model actually extracted correctly.

I spent an embarrassingly long time on this because the error message just said “invalid value,” and my first instinct was to look at the document, then the extractor, before I finally looked at the validator and saw it.

A normalize_methodology validator with mode="before" fixes it before the type check runs:

    # pipeline/validation.py
    from typing import Literal, Optional

    from pydantic import BaseModel, Field, field_validator

    class DocMetadata(BaseModel):
        methodology_type: Literal["experimental", "observational", "review", "simulation", "mixed"]
        dataset_source: str = Field(min_length=3)
        year: int = Field(ge=1950, le=2030)
        primary_metric: Optional[str] = None
        confidence_score: Optional[float] = Field(default=None, ge=0.0, le=1.0)

        @field_validator("dataset_source", mode="before")
        @classmethod
        def strip_whitespace(cls, v):
            # Runs before the min_length check, so padding doesn't count toward the length
            return v.strip() if isinstance(v, str) else v

        @field_validator("methodology_type", mode="before")
        @classmethod
        def normalize_methodology(cls, v):
            # Qwen occasionally returns "Experimental" despite instructions
            return v.lower().strip() if isinstance(v, str) else v

I want to be straight about the tradeoffs because I hate articles that don’t.

GPT-4 is genuinely better on ambiguous documents, non-English text, anything requiring real inference. There’s a class of document in our corpus, older PDFs, reports mixing multiple languages, non-standard formats, where the SLM stumbles and GPT-4 wouldn’t.

We flag those separately and route them to a review queue. That’s not seamless, but it’s honest. The SLM does the 90% it’s good at and doesn’t get asked to do the rest.
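The routing itself can be very plain. The sketch below is a hypothetical rule, not a description of our exact one: it sends a record to review when extraction failed upstream or when the model's self-reported confidence_score falls under a cutoff. Both `route` and `REVIEW_THRESHOLD` are names invented for illustration, and self-reported confidence is a rough signal at best.

```python
from typing import Optional

REVIEW_THRESHOLD = 0.6  # hypothetical cutoff; tune against your own annotated set


def route(record: Optional[dict]) -> str:
    """Return "warehouse" or "review" for one extraction result."""
    if record is None:  # extraction or validation failed upstream
        return "review"
    score = record.get("confidence_score")
    if score is None or score < REVIEW_THRESHOLD:
        return "review"
    return "warehouse"
```

Because the rule is deterministic too, the same document lands in the same queue every night.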

The setup is also more work than pip install openai. First-time configuration takes a real afternoon. And Ollama doesn’t auto-update models, so version management is my problem now. I’ve genuinely made peace with that: knowing exactly what model version ran last night and the night before is the whole point.
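One way to make "knowing exactly what ran" concrete is to log the model digest with every run. Ollama's /api/tags endpoint lists local models along with a digest for each. The sketch below assumes that response shape; the helper names `fetch_tags` and `find_model_digest` are illustrative.

```python
import json
import urllib.request
from typing import Optional


def fetch_tags(ollama_url: str = "http://localhost:11434") -> dict:
    """Fetch the list of locally available models from a running Ollama server."""
    with urllib.request.urlopen(f"{ollama_url}/api/tags", timeout=10) as resp:
        return json.load(resp)


def find_model_digest(tags_response: dict, model_name: str) -> Optional[str]:
    """Return the digest recorded for model_name, or None if it isn't pulled."""
    for model in tags_response.get("models", []):
        if model.get("name") == model_name:
            return model.get("digest")
    return None


# Usage inside the pipeline, with Ollama running:
# digest = find_model_digest(fetch_tags(), "qwen2.5:7b-instruct-q4_K_M")
# logger.info("model digest for this run: %s", digest)
```

If last night's digest and tonight's digest match, the model didn't change. That is the question a hosted API never let me answer.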

What I Think Now

The morning the pipeline first ran clean, no notification, no re-run, just a log file showing 312 documents processed in 8.4 minutes, I checked it twice, then ran it manually again.

I’d spent six weeks half-expecting an alert before I’d even finished my coffee. Watching it pass quietly felt genuinely strange.

The failure wasn’t using GPT-4. The failure was treating a probabilistic system like a deterministic function. temperature=0 reduces variance. It doesn’t eliminate it.

I understood this in theory the whole time. It took 23 failures to understand it in a way that changed how I build things.

If you’re running an LLM in an automated pipeline and things keep breaking in ways you can’t reproduce, it might not be your code or your prompts. It might be the nature of what you’re depending on.

A local model on hardware you control, seeded for determinism, is a genuinely different class of tool for this kind of job. Not better at everything but better at being the same thing twice. And for a pipeline that runs unattended every night, that’s most of what actually matters.
