a photograph of a kitchen in milliseconds. It can segment every object in a street scene, generate photorealistic images of rooms that don’t exist, and write convincing descriptions of places it’s never been.

But ask it to walk into an actual room and tell you which object sits on which shelf, how far the table is from the wall, or where the ceiling ends and the window begins in physical space —

and the illusion breaks.

The models that dominate computer vision benchmarks operate in flatland. They reason about pixels on a 2D grid.

They have no native understanding of the 3D world those pixels depict.

🦚 Florent’s Note: This gap between pixel-level intelligence and spatial understanding isn’t a minor inconvenience. It’s the single largest bottleneck standing between current AI systems and the physical-world applications that matter most: robots that navigate warehouses, autonomous vehicles that plan around obstacles, and digital twins that accurately mirror real buildings.

In this article, I break down the three AI layers that are converging right now to make spatial understanding possible from ordinary photographs.

I show how geometric fusion (the layer nobody talks about) turns noisy per-image predictions into coherent 3D scene labels, and I share real numbers from production pipelines: a 3.5x label amplification factor that turns 20% coverage into 78%.

If you work with 3D data, point clouds, or foundation models, this is the piece of the puzzle you’ve been missing.

The spatial AI pipeline: a single photograph becomes a depth-aware, semantically labeled 3D scene through three converging AI layers. (c) F. Poux

The 3D annotation bottleneck that nobody talks about

Reconstructing 3D geometry from photographs is, at this point, a solved problem.

Structure-from-Motion pipelines have been matching keypoints and triangulating 3D positions for over two decades. And the arrival of monocular depth estimation models like Depth-Anything-3 means you can now generate dense 3D point clouds from a single smartphone video without any specialized hardware.

The geometry is there. What’s missing is meaning.

A point cloud with 800,000 points and no labels is a beautiful visualization that can’t answer a single practical question. You can’t ask it “show me only the walls” or “measure the surface area of the floor” or “select everything within two meters of the electrical panel.”

Those queries require every point to carry a semantic label, and producing those labels at scale remains brutally expensive.

🦥 Geeky Note: The traditional approach relies on LiDAR scanners and teams of annotators who manually click through millions of points in specialized software. A single indoor floor of a commercial building can take a trained operator eight to twelve hours. Multiply that by an entire campus or a fleet of vehicles scanning streets, and the economics collapse.

Trained 3D segmentation networks like PointNet++ and MinkowskiNet can automate the process, but they need labeled training data (the same data that’s expensive to produce), and they tend to be domain-specific. A model trained on office interiors will fail on construction sites.

The zero-shot foundation models that have transformed 2D computer vision (SAM, Grounded SAM, SEEM) operate exclusively on images. They produce 2D masks, not 3D labels.

So the field sits in an awkward position where both the geometric reconstruction and the semantic prediction are individually strong, but nobody has a clean, general-purpose way to connect them.

The question isn’t whether AI can understand 3D space. It’s how you bridge the predictions that work in 2D into the geometry that lives in 3D.

The evolution from manual 3D annotation toward fully automatic spatial understanding, with geometric fusion as the constant bridge between dimensions. How to get there? (c) F. Poux

So what would it look like if you could actually stack these capabilities into one pipeline?

All images and animations are made by my own little fingers, to better clarify and illustrate the impact of Spatial AI. (c) F. Poux

Three layers of spatial AI are converging right now into a single 3D labeling stack

Something interesting happened between 2023 and 2025. Three independent research threads matured to the point where they can be stacked into a single pipeline. And the combination is more powerful than any of them alone.

The layers of Spatial AI. (c) F. Poux

Layer 1: metric depth estimation from a single photograph

Models like Depth-Anything and its successors (DA-V2, DA-3) take a single photograph and predict a per-pixel depth map.

Example of a Depth Map from an AI-generated image. (c) F. Poux

The key breakthrough isn’t depth prediction itself (that has existed since the early deep learning era). It’s the shift from relative depth to metric depth.

Relative depth tells you that the table is closer than the wall, which is useful for image editing but useless for 3D reconstruction. Metric depth tells you the table is 1.3 meters away and the wall is 4.1 meters away, which means you can place those surfaces at their correct positions in a coordinate system.

Depth-Anything-3 produces metric depth at roughly 30 frames per second on a consumer GPU. That makes it practical for real-time applications.

Layer 2: foundation segmentation from a text prompt

The Segment Anything Model and its descendants (SAM 2, Grounded SAM, FastSAM) can partition any image into coherent regions from a single click, a bounding box, or a text prompt.

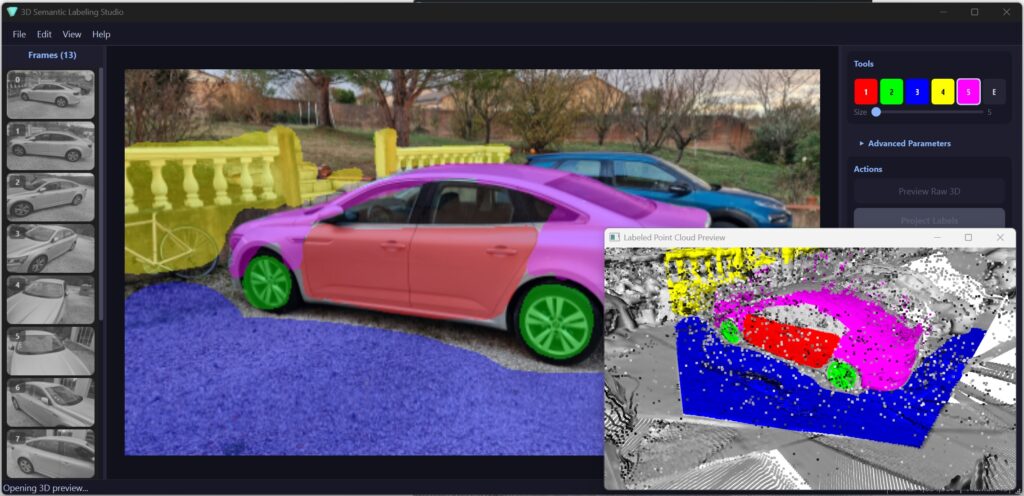

The results of a foundation model on 3D data. (c) F. Poux

These models are class-agnostic in the most useful sense: they don’t need to have seen your specific object category during training. You can point at an industrial valve, a surgical instrument, or a children’s toy, and SAM will produce a pixel-accurate mask.

🌱 Growing Note: When combined with a text-grounding module, the system goes from “segment whatever I click” to “segment everything that looks like a pipe” across thousands of images without human interaction. That’s where the manual painting step in today’s pipelines gets automated tomorrow.

Layer 3: geometric fusion (the engineering nobody gives you for free)

Here’s the thing. The third layer is where the real engineering challenge lives: geometric fusion.

Camera intrinsics and extrinsics provide the mathematical bridge between 2D image coordinates and 3D world coordinates. If you know the focal length of the camera, the position and orientation from which each photo was taken, and the depth at every pixel, you can project any 2D prediction into its exact 3D location.

Knowing the accurate position of each image relative to the objects is the key to coherent geometric fusion. (c) F. Poux

The back-projection itself is five lines of linear algebra:

    # Pinhole back-projection: pixel (u, v) with metric depth to a 3D world point (pose: p_cam = R @ p_world + t)
    x_cam = (u - cx) * depth / fx
    y_cam = (v - cy) * depth / fy
    z_cam = depth
    point_world = (np.stack([x_cam, y_cam, z_cam], axis=-1) - t) @ R
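To make the projection concrete, here is a minimal vectorized sketch that lifts every labeled pixel of one image into world space. The function name `backproject_labels`, the convention that label 0 means "unlabeled", and the world-to-camera pose convention (p_cam = R @ p_world + t) are assumptions for illustration, not something the pipeline above prescribes.

```python
import numpy as np

def backproject_labels(depth, label_image, fx, fy, cx, cy, R, t):
    """Lift every labeled pixel into world space, keeping its label.

    Assumes the world-to-camera convention p_cam = R @ p_world + t
    and that label 0 means "unlabeled" (both are assumptions).
    """
    v, u = np.nonzero(label_image)            # rows (v) and columns (u) of labeled pixels
    d = depth[v, u]
    valid = np.isfinite(d) & (d > 0)          # drop missing or non-positive depth
    u, v, d = u[valid], v[valid], d[valid]
    pts_cam = np.stack([(u - cx) * d / fx, (v - cy) * d / fy, d], axis=-1)
    pts_world = (pts_cam - t) @ R             # row-vector form of R.T @ (p_cam - t)
    return pts_world, label_image[v, u]

# Toy example: a 4x6 depth map at a constant 2 m, with a 2x2 patch labeled as class 1
depth = np.full((4, 6), 2.0)
label_image = np.zeros((4, 6), dtype=np.int32)
label_image[1:3, 2:4] = 1
points_world, point_labels = backproject_labels(
    depth, label_image, fx=100.0, fy=100.0, cx=3.0, cy=2.0,
    R=np.eye(3), t=np.zeros(3),
)
print(points_world.shape, point_labels)
```

If your reconstruction tool exports camera-to-world poses instead, the last step becomes `pts_cam @ R.T + t`. Mixing up the two conventions is an easy mistake to make here.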

Layers one and two are commoditized. You download a pretrained model, run inference, and get depth maps or masks that are good enough for production use.

Layer three is the part nobody gives you for free.

That’s because it requires understanding camera models, handling noisy depth, resolving conflicts between viewpoints, and propagating sparse predictions into dense coverage. It’s the connective tissue that turns per-image AI predictions into coherent 3D understanding, and getting it right is what separates a research demo from a working system.

🪐 System Thinking Note: The three-layer stack is a concrete instance of a general pattern in AI systems: perception layers (depth, segmentation) commoditize rapidly through foundation models, while integration layers (geometric fusion, temporal consistency) remain engineering-intensive. The competitive advantage shifts from having better models to having better integration.

The spatial AI stack in practice: depth estimation, semantic segmentation, and geometric fusion combine to produce labeled 3D scenes from ordinary photographs. (c) F. Poux

The math for projection is clean. But what happens when the depth is wrong, the cameras disagree, and you need labels on 800,000 points from just five images?

How geometric reasoning turns 2D pixels into labeled 3D places

The central operation in the spatial AI stack is what I call dimensionality bridging: you perform a task in the dimension where it’s easiest, then transfer the result to the dimension where it’s needed.

Dimensionality bridge from 2D to 3D models. (c) F. Poux

Honestly, this is the most underrated concept in the whole pipeline.

Humans and AI models are fast and accurate at labeling 2D images.

Labeling 3D point clouds is slow, expensive, and error-prone. So you label in 2D and project into 3D, using the camera as your bridge.

🦚 Florent’s Note: I’ve implemented this projection operation in at least a dozen production pipelines, and the math never changes. What changes is how you handle the noise. Every camera, every depth model, every scene type introduces different failure modes. The projection is algebra. The noise handling is engineering judgment.

A labeled pixel with known depth transforms through the camera model into a 3D world coordinate, carrying its semantic label along. (c) F. Poux

Depth maps from monocular estimation aren’t ground truth. They contain errors at object boundaries, in reflective surfaces, and in textureless regions. A single back-projected mask will place some labels in the wrong 3D location. And when you combine masks from multiple viewpoints, different cameras will disagree about what label belongs at a given point.

This is where the fusion algorithm earns its keep.

The four-stage fusion pipeline for 3D label propagation

The fusion pipeline I’ve been refining across several projects follows four stages, each addressing a specific failure mode.

The function signature captures the design philosophy:

    def smart_label_fusion(
        points_3d,            # Full scene point cloud (N, 3)
        labels_3d,            # Sparse labels from multi-view projection
        camera_positions,     # Where each camera was in world space
        max_distance=0.15,    # Ball query radius for label propagation
        max_camera_dist=5.0,  # Noise gate: ignore points far from cameras
        min_neighbors=3,      # Quorum for democratic voting
        batch_size=50000,     # Memory-bounded processing chunks
    ):
        ...

This materializes in the following:

The four-stage fusion pipeline: distance filtering removes noise, spatial indexing enables fast queries, target identification finds gaps, and democratic voting fills them. (c) F. Poux

Stage 1: noise gate. Points that sit far from any camera position are likely reconstruction artifacts, and any labels they carry are unreliable. By computing the minimum distance from each point to the nearest camera and stripping labels beyond a threshold, you remove the long-range errors that would otherwise corrupt downstream voting.
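A minimal sketch of the noise gate, assuming label 0 means "unlabeled" and that all coordinates are in meters. The helper name `noise_gate` is hypothetical, and the brute-force distance computation is chosen for readability rather than taken from the original implementation.

```python
import numpy as np

def noise_gate(points_3d, labels_3d, camera_positions, max_camera_dist=5.0):
    """Return a copy of labels_3d with labels stripped from points far from every camera."""
    # Pairwise offsets, shape (N, C, 3): fine for a handful of cameras,
    # but process points in chunks if N * C gets large.
    diffs = points_3d[:, None, :] - camera_positions[None, :, :]
    nearest_camera = np.linalg.norm(diffs, axis=2).min(axis=1)
    gated = labels_3d.copy()
    gated[nearest_camera > max_camera_dist] = 0   # 0 = unlabeled (assumed convention)
    return gated

points_3d = np.array([[0.0, 0.0, 1.0], [0.0, 0.0, 10.0], [4.0, 0.0, 0.0]])
labels_3d = np.array([2, 3, 1])
camera_positions = np.array([[0.0, 0.0, 0.0], [3.0, 0.0, 0.0]])
gated_labels = noise_gate(points_3d, labels_3d, camera_positions)
print(gated_labels)
```

Only the middle point loses its label: it sits about 10 m from both cameras, while the other two are within 5 m of at least one.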

Stage 2: spatial index. Rather than indexing all 800,000 points, the algorithm constructs a KD-tree using only the labeled subset. This reduces the tree size by 80% or more, making every subsequent query faster.
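As a rough illustration, the labeled-only index could be built with SciPy's `cKDTree`. The synthetic scene and variable names below are assumptions for illustration. The detail to get right is that the tree returns indices into the labeled subset, not into the full cloud, so a parallel `tree_labels` array is kept alongside it.

```python
import numpy as np
from scipy.spatial import cKDTree

rng = np.random.default_rng(0)
points_3d = rng.uniform(0.0, 5.0, size=(10_000, 3))      # synthetic stand-in scene
labels_3d = np.zeros(len(points_3d), dtype=np.int32)
labels_3d[:2_000] = rng.integers(1, 4, size=2_000)        # 20% of points carry a label

labeled_idx = np.flatnonzero(labels_3d > 0)
tree = cKDTree(points_3d[labeled_idx])                    # index the labeled points only
tree_labels = labels_3d[labeled_idx]                      # label of each point in the tree

# Ball query around one unlabeled point; results index into the labeled subset
neighbors = np.asarray(tree.query_ball_point(points_3d[5_000], r=0.15), dtype=np.intp)
neighbor_labels = tree_labels[neighbors]
print(tree.n, len(points_3d))
```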

Stage 3: target identification. Every point still carrying a zero label after the noise gate becomes a propagation candidate. In a typical five-view session, roughly 20% of the scene receives direct labels. That means 80% of points are waiting for the voting step.

Stage 4: democratic vote. For each unlabeled point, a ball query collects all labeled neighbors within radius max_distance. If fewer than min_neighbors labeled points fall within range, the point remains unlabeled (abstention prevents low-confidence guesses). Otherwise, the most common label wins.
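Stages 3 and 4 can be sketched together as follows. This is a simplified illustration rather than the production code: `propagate_labels` is a hypothetical name, labels are assumed to be non-negative integers with 0 meaning "unlabeled", and the quorum is applied to the total number of labeled neighbors, as described above. A stricter variant would require the winning class itself to reach the quorum.

```python
import numpy as np
from scipy.spatial import cKDTree

def propagate_labels(points_3d, labels_3d, max_distance=0.15,
                     min_neighbors=3, batch_size=50_000):
    """Fill unlabeled points (label 0) by majority vote among labeled neighbors."""
    fused = labels_3d.copy()
    labeled_idx = np.flatnonzero(labels_3d > 0)
    target_idx = np.flatnonzero(labels_3d == 0)           # Stage 3: propagation candidates
    if labeled_idx.size == 0 or target_idx.size == 0:
        return fused
    tree = cKDTree(points_3d[labeled_idx])
    tree_labels = labels_3d[labeled_idx]
    for start in range(0, target_idx.size, batch_size):   # bounded memory per batch
        batch = target_idx[start:start + batch_size]
        neighbor_lists = tree.query_ball_point(points_3d[batch], r=max_distance)
        for point_i, neighbors in zip(batch, neighbor_lists):
            if len(neighbors) < min_neighbors:
                continue                                   # abstain: quorum not reached
            votes = np.bincount(tree_labels[neighbors])
            fused[point_i] = votes.argmax()                # ties go to the lowest label id
    return fused

points = np.array([
    [0.00, 0.00, 0.00],   # unlabeled, surrounded by three points of class 2
    [0.01, 0.00, 0.00],
    [0.00, 0.01, 0.00],
    [0.00, 0.00, 0.01],
    [1.00, 1.00, 1.00],   # unlabeled, only one labeled neighbor in range
    [1.01, 1.00, 1.00],
])
labels = np.array([0, 2, 2, 2, 0, 3])
fused = propagate_labels(points, labels)
print(fused)
```

The first point inherits class 2 from its three neighbors. The fifth point stays unlabeled, because a single neighbor does not meet the quorum of three.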

🦥 Geeky Note: The min_neighbors parameter is the quorum threshold. Setting it to 1 would let a single noisy label propagate unchecked. Setting it to 3 means at least three independent labeled points must agree before a vote counts. In practice, values between 3 and 5 produce the best balance between coverage and accuracy, because depth noise rarely places three erroneous labels in the same local neighborhood.

Why does this work so well? Because errors from monocular depth tend to be spatially random while correct labels cluster together. Majority voting naturally filters the noise.

🌱 Growing Note: There are three parameters to tune. max_distance is the propagation radius: 0.05 (5 cm) for dense indoor objects, 0.15 (15 cm) for sparse outdoor scenes. min_neighbors is the minimum number of votes: start at 3 and increase to 5-10 for noisy data. batch_size bounds memory: 100000 is safe for 16 GB of RAM, and you can drop to 50000 under memory pressure. These three numbers determine the quality-speed-memory tradeoff for your specific scene.

The entire process runs in under ten seconds on 800,000 points with a consumer CPU. No GPU, no model inference, no training. Pure computational geometry.

And that’s precisely why it generalizes across every domain I’ve tested it on: indoor scenes, outdoor objects, industrial parts, archaeological artifacts.

Four stages, ten seconds, zero deep learning. But does the output actually hold up when you look at the numbers?

From 20% to 78% label coverage: what 3D geometric fusion actually produces

When you project semantic predictions from five out of fifteen photographs into 3D, roughly 20% of the point cloud receives a direct label. The coverage is patchy because each camera sees only a portion of the scene.

Before fusion (left): sparse colored patches on ~20% of points. After fusion (right): dense coverage reaching ~78% through geometric label propagation. (c) F. Poux

The result looks like colored islands in a sea of gray.

After the fusion pipeline runs, coverage jumps to approximately 78%. That 3.5x expansion comes entirely from the geometric reasoning in the ball-query voting step.

Let me be specific about what that means:

- No additional human input is required

- No model inference happens

- No new information enters the system

- The algorithm simply propagates existing labels to nearby unlabeled points using spatial proximity and democratic consensus
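The before/after comparison reduces to a few lines of bookkeeping. The sketch below uses synthetic label arrays, not data from the scenes discussed here, and assumes label 0 means "unlabeled". The helper names are hypothetical.

```python
import numpy as np

def coverage(labels):
    """Fraction of points carrying a non-zero label."""
    return np.count_nonzero(labels) / labels.size

def amplification(labels_before, labels_after):
    """Ratio of coverage after fusion to coverage before it."""
    before = coverage(labels_before)
    return coverage(labels_after) / before if before > 0 else float("inf")

def per_class_counts(labels):
    """Number of labeled points per class, ignoring unlabeled points."""
    classes, counts = np.unique(labels[labels > 0], return_counts=True)
    return dict(zip(classes.tolist(), counts.tolist()))

# Synthetic stand-in: 1,000 points, 200 labeled before fusion, 700 after
labels_before = np.zeros(1000, dtype=np.int32)
labels_before[:100] = 1
labels_before[100:200] = 2
labels_after = labels_before.copy()
labels_after[200:500] = 1
labels_after[500:700] = 2

print(coverage(labels_before), coverage(labels_after))
print(amplification(labels_before, labels_after))
print(per_class_counts(labels_after))
```

Tracking the per-class counts alongside the global ratio helps spot the case where one dominant class (often the floor or the walls) accounts for most of the gain.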

The points that remain unlabeled fall into two informative categories. Some sit in regions that no camera observed well (occluded areas, tight crevices, the underside of overhanging geometry).

Others sit at class boundaries where the ball query found neighbors from multiple classes but none reached the quorum threshold, so the algorithm correctly abstained rather than guessing.

Both failure modes tell you exactly where to add another viewpoint to close the gaps.

The geometric fusion layer acts as a label amplifier. Any upstream prediction, whether it comes from a human, from SAM, or from a future text-prompted model, gets amplified by the same factor.

This is the insight that makes the whole stack work.

If SAM replaces the manual painting step, the pipeline becomes fully automatic: foundation model predictions in 2D, geometric amplification in 3D, no human in the loop. The fusion layer doesn’t care where the initial labels came from. It only cares that they’re spatially consistent enough for the voting step to produce reliable results.

The label amplification strategy. (c) F. Poux

🌱 Growing Note: I ran this same pipeline on an industrial pipe rack with 4.2 million points and 32 camera positions. The fusion step took 47 seconds and expanded coverage from 12% to 61%. The lower final coverage reflects the geometric complexity (many occluded surfaces), but the amplification factor (about 5x) was actually higher than in the simpler scene. Denser camera networks push the ceiling further.

A 3.5x amplifier that works with any input source is powerful. But there’s one problem the fusion layer can’t solve on its own.

The open problem in spatial AI: multi-view consistency and where 3D labeling is heading

Foundation models produce predictions independently for each image. SAM doesn’t know what it segmented in the previous frame. Depth-Anything-3 doesn’t enforce consistency across viewpoints.

When you project these per-image predictions into 3D, they sometimes disagree.

One camera might label a region as “wall” while another labels overlapping points as “ceiling,” not because either prediction is wrong in 2D, but because the class boundary looks different from different angles.

The fusion layer partially resolves these disagreements through majority voting. If seven cameras call a point “wall” and two call it “ceiling,” the point gets labeled “wall,” and that’s usually correct.
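The per-point camera vote can be sketched like this, assuming you have already gathered one label per camera for each 3D point, with 0 meaning "this camera did not observe the point". The function name and the `margin` output are hypothetical. The margin is one simple way to flag the coin-flip cases at class boundaries.

```python
import numpy as np

def multiview_vote(votes, n_classes):
    """votes: (n_points, n_cameras) integer array, 0 = point not observed by that camera."""
    n_points, n_cameras = votes.shape
    counts = np.zeros((n_points, n_classes + 1), dtype=np.int64)
    rows = np.arange(n_points)
    for cam in range(n_cameras):
        counts[rows, votes[:, cam]] += 1      # one vote per camera per point
    counts[:, 0] = 0                          # "not observed" never wins
    winner = counts.argmax(axis=1)            # ties resolve to the lowest class id
    ranked = np.sort(counts, axis=1)
    margin = ranked[:, -1] - ranked[:, -2]    # 0 means a tie (or no observation at all)
    return winner, margin

WALL, CEILING = 1, 2
votes = np.array([
    [WALL] * 7 + [CEILING] * 2,   # clear majority for "wall"
    [WALL, CEILING] + [0] * 7,    # boundary point: one vote each
    [0] * 9,                      # never observed
])
winner, margin = multiview_vote(votes, n_classes=2)
print(winner, margin)
```

A point with a margin of zero is a natural candidate for abstention or for manual review, rather than for a silent tie-break.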

But at genuine class boundaries (where the wall meets the ceiling), the voting becomes a coin flip.

🦥 Geeky Note: I’ve seen boundary artifacts spanning 5 to 15 centimeters in indoor scenes, which is acceptable for most applications but problematic for precision tasks like as-built BIM modeling. For progress monitoring, facility management, or spatial analytics, these boundaries are irrelevant. For millimeter-precision construction documentation, they matter.

Actually, let me rephrase that. The boundary artifacts aren’t the real problem. The real problem is that nobody’s closed the loop between 3D consensus and 2D prediction.

The next frontier is multi-view consistency: making the upstream models aware of each other’s predictions before they reach the fusion layer. SAM 2 takes a step in this direction by propagating masks across video frames, but it operates in 2D and doesn’t enforce 3D geometric consistency. A system that feeds the 3D fusion results back into the 2D prediction loop (correcting per-image masks based on the emerging 3D consensus) would close the loop entirely.
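One ingredient such a feedback loop would need is the forward projection: taking labeled 3D points and finding where they land in a given image, so the 3D consensus can be compared with that image's mask. The sketch below is only that building block, under the same assumed world-to-camera convention (p_cam = R @ p_world + t). It ignores occlusion and lens distortion, and `project_to_image` is a hypothetical helper name.

```python
import numpy as np

def project_to_image(points_world, R, t, fx, fy, cx, cy, width, height):
    """Project world points to pixel coordinates and flag those inside the image."""
    pts_cam = points_world @ R.T + t               # row-vector form of R @ p_world + t
    z = pts_cam[:, 2]
    in_front = z > 0
    safe_z = np.where(in_front, z, 1.0)            # avoid dividing by zero or negative depth
    u = fx * pts_cam[:, 0] / safe_z + cx
    v = fy * pts_cam[:, 1] / safe_z + cy
    visible = in_front & (u >= 0) & (u < width) & (v >= 0) & (v < height)
    return u, v, visible

points_world = np.array([
    [0.0, 0.0, 2.0],    # straight ahead: lands on the principal point
    [0.5, 0.0, 2.0],    # to the right, still in frame
    [0.0, 0.0, -1.0],   # behind the camera
    [10.0, 0.0, 2.0],   # far outside the field of view
])
u, v, visible = project_to_image(
    points_world, np.eye(3), np.zeros(3),
    fx=500.0, fy=500.0, cx=320.0, cy=240.0, width=640, height=480,
)
print(visible)
```

A real implementation would also need a depth test, so that points hidden behind closer surfaces do not overwrite the mask of what the camera actually saw.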

🦚 Florent’s Note: I’m already seeing this convergence play out in real projects. A client recently brought me a pipeline where they ran SAM on 200 drone images of a construction site, projected the masks through DA3 depth, and used a version of this fusion algorithm to label a 12-million-point cloud. The annotation step that used to take two full days finished in eleven minutes. The boundary artifacts were there, but for progress monitoring they didn’t matter. They needed “which floor is poured” and “where are the rebar cages,” not millimeter-precision edges. That’s spatial AI right now: it works, it’s fast, and the remaining imperfections are irrelevant for 80% of real use cases.

What I expect to unfold in the next 12 to 18 months

Here’s my timeline, based on what I’m seeing across research labs and the industry projects I advise:

| Timeframe | Milestone | Impact |
| --- | --- | --- |
| Q2 2026 | On-device depth estimation accurate enough for spatial AI (already shipping on recent iPhones and Pixels) | Capture becomes a simple video recording, no cloud inference needed |
| Q3 2026 | SAM 3 or equivalent ships with native multi-view awareness | Boundary artifacts shrink by an order of magnitude |
| Mid 2026 / Q4 2026 | Real-time 3D semantic streaming: walk through a building, labeled point cloud builds itself | The geometric fusion layer from this article is exactly what makes that pipeline work |

The bottleneck shifts from producing labels to quality-controlling them, which is a much better problem to have.

🪐 System Thinking Note: The techniques I use today for validating fusion output (per-class statistics, before/after coverage metrics, boundary inspection) become the diagnostic layer that sits on top of the fully automated stack. If you understand the fusion pipeline now, you’ll be the person who debugs and improves it when it runs at scale. That’s where the real leverage is.

A fully labeled 3D scene produced by fusing foundation model predictions through camera geometry: the output that spatial AI is converging toward. (c) F. Poux

🌱 Growing Note: If you want to build the complete pipeline yourself (the manual version that teaches you every component), I’ve published a step-by-step tutorial covering the full Python implementation with interactive painting, back-projection, and fusion. The free toolkit includes all the code and a sample dataset.

Resources for going deeper into spatial AI and 3D data science

If you want to go deeper into the spatial AI stack, here are the references that matter.

The 3D Geodata Academy, which I created, is an educational platform offering an open-access course on 3D point cloud processing with Python. The course covers the geometric foundations (coordinate systems, camera models, spatial indexing) in detail. My O’Reilly book, 3D Data Science with Python, provides a comprehensive treatment of the algorithms discussed here, including KD-tree construction, ball queries, and label propagation strategies.

For the individual layers of the stack:

Florent Poux, Ph.D.

Scientific and Course Director at the 3D Geodata Academy. I research and teach 3D spatial data processing, point cloud analysis, and the intersection of geometric computing with machine learning. You can access my open courses at learngeodata.eu and find my book 3D Data Science with Python on O’Reilly.

Frequently asked questions about spatial AI and 3D semantic understanding

What is the difference between 2D image segmentation and 3D spatial understanding?

Image segmentation assigns labels to pixels in a flat photograph, while 3D semantic understanding assigns labels to points in a volumetric coordinate system where distances, surfaces, and spatial relationships are preserved. The gap between them is the camera geometry that maps pixels to physical locations, and bridging that gap is what the spatial AI stack described in this article accomplishes.

Can foundation models like SAM directly produce 3D labels from photographs?

Not yet. SAM and similar models operate on individual 2D images and have no native understanding of 3D geometry. Their predictions must be projected into 3D space using camera intrinsics, extrinsics, and depth information from models like Depth-Anything-3, then fused across multiple viewpoints using spatial algorithms like KD-tree ball queries with majority voting.

How does geometric label fusion scale to large 3D point clouds?

The fusion algorithm scales close to linearly with point count, and batched processing keeps peak memory bounded. On a scene with 800,000 points, the full pipeline runs in under ten seconds on a consumer CPU. On a 4.2-million-point industrial scene, the fusion step completes in under a minute. The KD-tree spatial index reduces each neighbor query from a brute-force O(N) scan to roughly O(log N) on average.

What is the 3.5x label amplification factor in geometric fusion?

When you project semantic labels from five camera viewpoints into 3D, roughly 20% of the point cloud receives direct labels. The KD-tree ball-query fusion propagates these sparse labels to nearby unlabeled points through majority voting, expanding coverage to approximately 78%. The 3.5x figure is the ratio of coverage after fusion to coverage before it (with the rounded percentages quoted here, 78/20 works out closer to 3.9x), and it represents how much label coverage the geometric fusion yields with zero additional input.

Where can I learn more about 3D data science and the spatial AI stack?

The 3D Geodata Academy offers hands-on courses covering point clouds, meshes, voxels, and Gaussian splats. For a comprehensive reference, 3D Data Science with Python on O’Reilly covers 18 chapters from fundamentals to production systems, including all the geometric fusion techniques discussed here.