and asked if I could help extract revision numbers from over 4,700 engineering drawing PDFs. They were migrating to a new asset-management system and needed every drawing’s current REV value, a small field buried in the title block of each document. The alternative was a team of engineers opening each PDF one by one, locating the title block, and manually keying the value into a spreadsheet. At two minutes per drawing, that is roughly 160 person-hours, or four weeks of one engineer’s time. At a fully loaded rate of roughly £50 per hour, that comes to about £8,000 in labour for a task that produces no engineering value beyond populating a spreadsheet column.

This was not an AI problem. It was a systems design problem with real constraints: budget, accuracy requirements, mixed file formats, and a team that needed results they could trust. The AI was one component of the solution. The engineering decisions around it were what actually made the system work.

The Hidden Complexity of “Simple” PDFs

Engineering drawings are not ordinary PDFs. Some were created in CAD software and exported as text-based PDFs where you can programmatically extract text. Others, particularly legacy drawings from the 1990s and early 2000s, were scanned from paper originals and saved as image-based PDFs. The entire page is a flat raster image with no text layer at all.

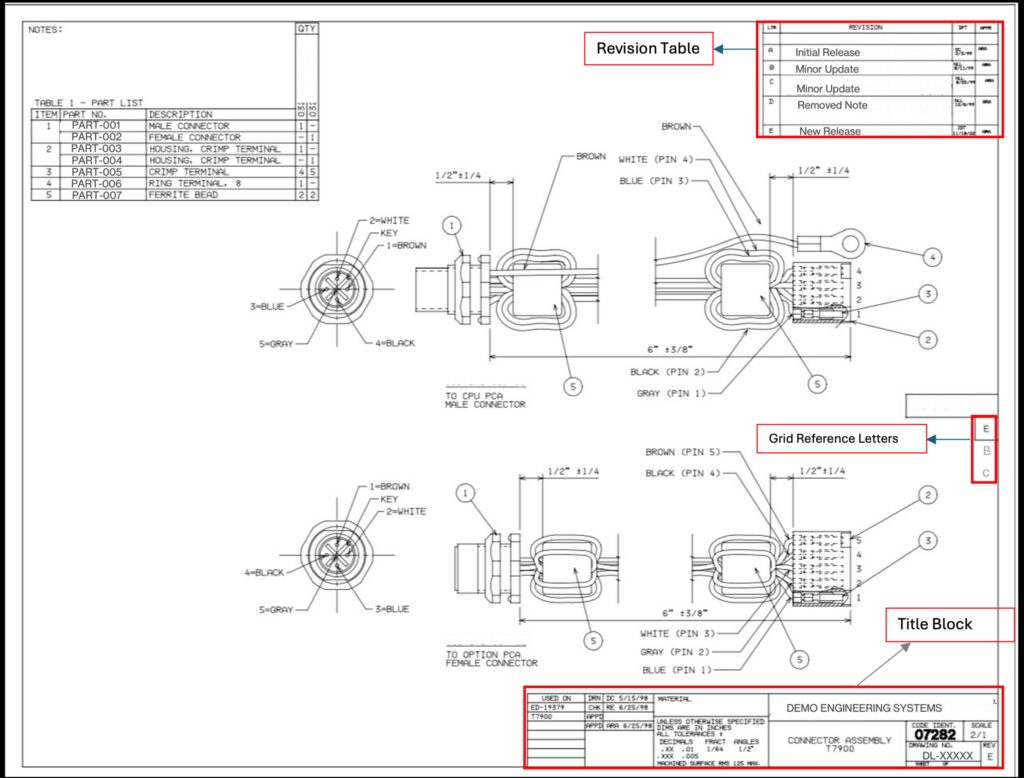

📷 [FIGURE 1: Annotated engineering drawing] A representative engineering drawing with the title block (bottom-right), revision history table (top-right), and grid reference letters (border) highlighted. The REV value “E” sits in the title block next to the drawing number, but the revision history table and grid letters are common sources of false positives.

Our corpus was roughly 70-80% text-based and 20-30% image-based. But even the text-based subset was treacherous. REV values appeared in at least four formats: hyphenated numeric versions like 1-0, 2-0, or 5-1; single letters like A, B, C; double letters like AA or AB; and occasionally empty or missing fields. Some drawings were rotated 90 or 270 degrees. Many had revision history tables (multi-row change logs) sitting right alongside the current REV field, which is an obvious false positive trap. Grid reference letters along the drawing border could easily be mistaken for single-letter revisions.
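To make the format list concrete, here is a minimal sketch of a classifier for the three non-empty formats. The regular expressions and the names `REV_PATTERNS` and `classify_rev` are illustrative assumptions, not the production patterns.

```python
import re
from typing import Optional

# Illustrative patterns for the formats described above (assumed, not the production regexes).
REV_PATTERNS = {
    "hyphenated": re.compile(r"\d{1,2}-\d{1,2}"),  # e.g. 1-0, 2-0, 5-1
    "single_letter": re.compile(r"[A-Z]"),         # e.g. A, B, C
    "double_letter": re.compile(r"[A-Z]{2}"),      # e.g. AA, AB
}


def classify_rev(token: str) -> Optional[str]:
    """Return the name of the REV format the token matches, or None if it matches none."""
    candidate = token.strip().upper()
    for name, pattern in REV_PATTERNS.items():
        if pattern.fullmatch(candidate):
            return name
    return None
```

A format check like this only says a token looks like a revision. It cannot tell a title-block "A" from a grid-reference "A", which is why position on the page matters as much as the pattern.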

Why a Fully AI Approach Was the Wrong Choice

You could throw every document at GPT-4 Vision and call it a day, but at roughly $0.01 per image and 10 seconds per call, that is $47 and nearly 100 minutes of API time. More importantly, you’d be paying for expensive inference on documents where a few lines of Python could extract the answer in milliseconds.

The logic was simple: if a document has extractable text and the REV value follows predictable patterns, there is no reason to involve an LLM. Save the model for the cases where deterministic methods fail.
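The cost argument is easy to check with back-of-the-envelope arithmetic. The sketch below uses the $0.01 per image figure and the 20-30% image-based share quoted above; the function name and the simplifying assumption that only image-based files reach the model are mine.

```python
def estimate_llm_cost(n_docs: int, llm_fraction: float, cost_per_image: float = 0.01) -> float:
    """Estimated API spend in dollars when only llm_fraction of the documents reach the model."""
    if not 0.0 <= llm_fraction <= 1.0:
        raise ValueError("llm_fraction must be between 0 and 1")
    return n_docs * llm_fraction * cost_per_image


# Every file goes to the model versus only the image-based 20-30% (assumed split).
all_llm = estimate_llm_cost(4700, 1.0)
hybrid_low = estimate_llm_cost(4700, 0.20)
hybrid_high = estimate_llm_cost(4700, 0.30)
```

That works out to roughly $47 for the all-LLM route and somewhere between $9 and $14 when only the image-based share is sent to the model.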

The Hybrid Architecture That Worked

📷 [FIGURE 2: Pipeline architecture diagram] Two-stage hybrid pipeline: every PDF enters Stage 1 (PyMuPDF rule-based extraction). If a confident result is returned, it goes straight to the output CSV. If not, the PDF falls through to Stage 2 (GPT-4 Vision via Azure OpenAI).

Stage 1: PyMuPDF extraction (deterministic, zero cost). For every PDF, we attempt rule-based extraction using PyMuPDF. The logic focuses on the bottom-right quadrant of the page, where title blocks live, and searches for text near known anchors like “REV”, “DWG NO”, “SHEET”, and “SCALE”. A scoring function ranks candidates by proximity to these anchors and conformance to known REV formats.

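```python
from pathlib import Path
from typing import Optional

# RevResult, process_pdf_native, _normalize_output_value and the DEFAULT_*
# constants are defined elsewhere in the pipeline.


def extract_native_pymupdf(pdf_path: Path) -> Optional[RevResult]:
    """Try native PyMuPDF text extraction with spatial filtering."""
    try:
        best = process_pdf_native(
            pdf_path,
            brx=DEFAULT_BR_X,  # bottom-right X threshold
            bry=DEFAULT_BR_Y,  # bottom-right Y threshold
            blocklist=DEFAULT_REV_2L_BLOCKLIST,
            edge_margin=DEFAULT_EDGE_MARGIN,
        )
        if best and best.value:
            value = _normalize_output_value(best.value)
            return RevResult(
                file=pdf_path.name,
                value=value,
                engine=f"pymupdf_{best.engine}",
                confidence="high" if best.score > 100 else "medium",
                notes=best.context_snippet,
            )
        return None  # no usable candidate: the file falls through to Stage 2
    except Exception:
        # Any extraction failure is treated as a miss, so the file falls through to Stage 2
        return None
```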

The blocklist filters out common false positives: section markers, grid references, page indicators. Constraining the search to the title block region cut false matches to near zero.
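The body of `process_pdf_native` is not shown here, so the following is only a sketch of the idea: keep words in the bottom-right region, drop blocklisted tokens, and rank what remains by distance to a "REV" anchor. The `Word` dataclass, the 0.6/0.7 region thresholds, the blocklist entries, and the scoring formula are all hypothetical, and only the "REV" anchor is used to keep the example short.

```python
import math
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Word:
    x: float  # centre x in page points
    y: float  # centre y in page points (origin top-left, as in PyMuPDF)
    text: str


REV_VALUE = re.compile(r"\d{1,2}-\d{1,2}|[A-Z]{1,2}")
BLOCKLIST = {"NO", "OF", "BY", "TO"}  # hypothetical two-letter false positives


def best_rev_candidate(words: List[Word], page_w: float, page_h: float,
                       brx: float = 0.6, bry: float = 0.7) -> Optional[Tuple[str, float]]:
    """Return (value, score) for the best REV candidate in the bottom-right region, or None."""
    # Keep only words inside the assumed title block region.
    region = [w for w in words if w.x >= brx * page_w and w.y >= bry * page_h]
    anchors = [w for w in region if w.text.strip().upper() == "REV"]
    if not anchors:
        return None
    best: Optional[Tuple[str, float]] = None
    for w in region:
        token = w.text.strip().upper()
        if token in BLOCKLIST or not REV_VALUE.fullmatch(token):
            continue
        # Closer to a REV anchor means a higher score.
        dist = min(math.hypot(w.x - a.x, w.y - a.y) for a in anchors)
        score = 1.0 / (1.0 + dist)
        if best is None or score > best[1]:
            best = (token, score)
    return best
```

With real files, the word centres could be derived from the bounding boxes that `page.get_text("words")` returns. Grid letters and revision history rows never enter the ranking because they sit outside the region, which is the effect described above.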

Stage 2: GPT-4 Vision (for everything Stage 1 misses). When native extraction comes back empty, either because the PDF is image-based or the text layout is too ambiguous, we render the first page as a PNG and send it to GPT-4 Vision via Azure OpenAI.

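```python
import base64
from pathlib import Path
from typing import Tuple

import fitz  # PyMuPDF

# Method of the Stage 2 extractor class. detect_and_validate_rotation and
# correct_rotation are helpers defined elsewhere in the pipeline.
def pdf_to_base64_image(self, pdf_path: Path, page_idx: int = 0,
                        dpi: int = 150) -> Tuple[str, int, bool]:
    """Convert PDF page to base64 PNG with smart rotation handling."""
    rotation, should_correct = detect_and_validate_rotation(pdf_path)
    with fitz.open(pdf_path) as doc:
        page = doc[page_idx]
        # PDF user space is 72 points per inch, so dpi / 72 is the zoom factor
        zoom = dpi / 72
        pix = page.get_pixmap(matrix=fitz.Matrix(zoom, zoom), alpha=False)
        if rotation != 0 and should_correct:
            img_bytes = correct_rotation(pix, rotation)
            return base64.b64encode(img_bytes).decode(), rotation, True
        else:
            return base64.b64encode(pix.tobytes("png")).decode(), rotation, False
```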

We settled on 150 DPI after testing. Higher resolutions bloated the payload and slowed API calls without improving accuracy. Lower resolutions lost detail on marginal scans.
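Once the page is rendered, the base64 PNG has to be wrapped in a chat request. The sketch below builds a message list in the shape the chat completions API accepts for image input. The function name and the user instruction text are placeholders of mine, and the actual request code and prompt wording from the pipeline are not reproduced.

```python
import base64
from typing import Any, Dict, List


def build_vision_messages(png_bytes: bytes, system_prompt: str) -> List[Dict[str, Any]]:
    """Wrap a rendered page image in a chat message list with an inline data URL."""
    b64 = base64.b64encode(png_bytes).decode("ascii")
    return [
        {"role": "system", "content": system_prompt},
        {
            "role": "user",
            "content": [
                # Placeholder instruction; the production prompt is far more detailed.
                {"type": "text", "text": "Return the current REV value from the title block."},
                {"type": "image_url", "image_url": {"url": f"data:image/png;base64,{b64}"}},
            ],
        },
    ]
```

Because the image travels inline as base64, payload size grows with resolution, which is one reason higher DPI settings slowed the calls down.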

What Broke in Production

Two classes of problems only showed up when we ran across the full 4,700-document corpus.

Rotation ambiguity. Engineering drawings are frequently stored in landscape orientation, but the PDF metadata encoding that orientation varies wildly. Some files set /Rotate correctly. Others physically rotate the content but leave the metadata at zero. We solved this with a heuristic: if PyMuPDF can extract more than ten text blocks from the uncorrected page, the orientation is probably fine regardless of what the metadata says. Otherwise, we apply the correction before sending to GPT-4 Vision.
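A minimal sketch of that decision, assuming the text block count has already been measured on the uncorrected page (for example with `len(page.get_text("blocks"))` in PyMuPDF). The function name and return shape are assumptions modelled on the `(rotation, should_correct)` pair used in the rendering code, and the sketch does not attempt to detect content that is physically rotated while the metadata reads zero.

```python
from typing import Tuple

TEXT_BLOCK_THRESHOLD = 10  # "more than ten text blocks" from the heuristic above


def decide_rotation(metadata_rotation: int, text_block_count: int) -> Tuple[int, bool]:
    """Return (rotation, should_correct) for a page before it is rendered."""
    rotation = metadata_rotation % 360
    if rotation == 0:
        # Nothing declared in the metadata, so there is nothing to correct here.
        return 0, False
    if text_block_count > TEXT_BLOCK_THRESHOLD:
        # Plenty of readable text on the uncorrected page: trust the page as it is.
        return rotation, False
    # Little or no readable text: apply the correction before sending to the model.
    return rotation, True
```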

Prompt hallucination. The model would sometimes latch onto values from the prompt’s own examples instead of reading the actual drawing. If every example showed REV “2-0”, the model developed a bias toward outputting “2-0” even when the drawing clearly showed “A” or “3-0”. We fixed this in two ways: we diversified the examples across all valid formats with explicit anti-memorization warnings, and we added clear instructions distinguishing the revision history table (multi-row change log) from the current REV field (single value in the title block).

CRITICAL RULES – AVOID THESE:

✗ DO NOT extract from REVISION HISTORY TABLES

(columns: REV | DESCRIPTION | DATE)

– We want the CURRENT REV from title block (single value)

✗ DO NOT extract grid reference letters (A, B, C along edges)

✗ DO NOT extract section markers (“SECTION C-C”, “SECTION B-B”)

Results and Trade-offs

We validated against a 400-file sample with manually verified ground truth.

| Metric | Hybrid (PyMuPDF + GPT-4) | GPT-4 Only |
|---|---|---|
| Accuracy (n=400) | 96% | 98% |
| Processing time (n=4,730) | ~45 minutes | ~100 minutes |
| API cost | ~$10-15 | ~$47 (all files) |
| Human review rate | ~5% | ~1% |

The two-percentage-point accuracy gap was the price of a 55-minute runtime reduction and bounded costs. For a data migration where engineers would spot-check a percentage of values anyway, 96% with a 5% flagged-for-review rate was acceptable. If the use case had been regulatory compliance, we would have run GPT-4 on every file.
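For readers who want to reproduce this kind of comparison on their own corpus, here is a minimal sketch of scoring predictions against a verified sample. It assumes a file is flagged for review when the pipeline returns no value, which is a simplification of mine; the function and key names are hypothetical.

```python
from typing import Dict, Optional


def evaluate(predictions: Dict[str, Optional[str]], truth: Dict[str, str]) -> Dict[str, float]:
    """Compute accuracy and review rate over the files in the ground-truth sample."""
    if not truth:
        raise ValueError("ground truth is empty")
    correct = 0
    flagged = 0
    for name, expected in truth.items():
        predicted = predictions.get(name)
        if predicted is None:
            flagged += 1  # no answer: route to human review (assumed flagging rule)
        elif predicted.strip().upper() == expected.strip().upper():
            correct += 1
    total = len(truth)
    return {"accuracy": correct / total, "review_rate": flagged / total}
```

Running the same function over the output of each configuration keeps the comparison like for like, since both are scored against the same verified files.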

We later benchmarked newer models, including GPT-5+, against the same 400-file validation set. Accuracy was comparable to GPT-4.1 at 98%. The newer models offered no meaningful lift for this extraction task, at higher cost per call and slower inference. We shipped GPT-4.1. When the task is spatially constrained pattern matching in a well-defined document region, the ceiling is the prompt and the preprocessing, not the model’s reasoning capability.

In production work, the “right” accuracy target isn’t always the highest one you can hit. It’s the one that balances cost, latency, and the downstream workflow that depends on your output.

From Script to System

The initial deliverable was a command-line tool: feed it a folder of PDFs, get a CSV of results. It ran within our Microsoft Azure environment, using Azure OpenAI endpoints for the GPT-4 Vision calls.
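The folder-in, CSV-out shape is simple enough to sketch. The version below takes the extraction stages as plain callables so the fall-through order is explicit; `run_folder`, the stage names, and the CSV columns are hypothetical and stand in for the real Stage 1 and Stage 2 functions.

```python
import csv
from pathlib import Path
from typing import Callable, Optional, Sequence, Tuple

Extractor = Callable[[Path], Optional[str]]


def run_folder(folder: Path, out_csv: Path,
               stages: Sequence[Tuple[str, Extractor]]) -> int:
    """Run every PDF through the stages in order and write one CSV row per file."""
    rows = []
    for pdf in sorted(folder.glob("*.pdf")):
        value, engine = "", "none"
        for name, extract in stages:
            result = extract(pdf)
            if result:
                # First stage to return a value wins; later stages are skipped.
                value, engine = result, name
                break
        rows.append({"file": pdf.name, "value": value, "engine": engine})
    with out_csv.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=["file", "value", "engine"])
        writer.writeheader()
        writer.writerows(rows)
    return len(rows)
```

Recording which engine produced each value makes it easy to see afterwards how much of the corpus the cheap stage handled and which rows deserve a spot-check.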

After the initial migration succeeded, stakeholders asked if other teams could use it. We wrapped the pipeline in a lightweight internal web application with a file upload interface, so non-technical users could run extractions on demand without touching a terminal. The system has since been adopted by engineering teams at multiple sites across the organisation, each running their own drawing archives through it for migration and audit tasks. I can’t share screenshots for confidentiality reasons, but the core extraction logic is identical to what I’ve described here.

Lessons for Practitioners

Start with the cheapest viable method. The instinct when working with LLMs is to use them for everything. Resist it. Deterministic extraction handled 70-80% of our corpus at zero cost. The LLM only added value because we kept it focused on the cases where rules fell short.

Validate at scale, not on cherry-picked samples. The rotation ambiguity, the revision history table confusion, and the grid reference false positives all surfaced only on the full corpus; none of them appeared in our initial 20-file test set. Your validation set needs to represent the actual distribution of edge cases you’ll see in production.

Prompt engineering is software engineering. The system prompt went through multiple iterations with structured examples, explicit negative cases, and a self-verification checklist. Treating it as throwaway text instead of a carefully versioned component is how you end up with unpredictable outputs.

Measure what matters to the stakeholder. Engineers didn’t care whether the pipeline used PyMuPDF, GPT-4, or carrier pigeons. They cared that 4,700 drawings were processed in 45 minutes instead of four weeks, at roughly $10-15 in API calls instead of £8,000+ in engineering time, and that the results were accurate enough to proceed with confidence.

The full pipeline is roughly 600 lines of Python. It saved four weeks of engineering time, cost less than a team lunch in API fees, and has since been deployed as a production tool across multiple sites. We tested the latest models. They weren’t better for this job. Sometimes the highest-impact AI work isn’t about using the most powerful model available. It’s about knowing where a model belongs in the system, and keeping it there.

Obinna is a Senior AI/Data Engineer based in Leeds, UK, specialising in document intelligence and production AI systems. He creates content around practical AI engineering at @DataSenseiObi on X and Wisabi Analytics on YouTube.